Summary of Object Detection Dataset Processing

Contents

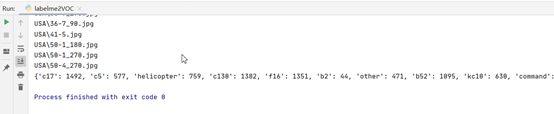

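Converting Labelme to VOC, building a test set from the unannotated images, and counting the objects in each class.

Rotating Labelme-annotated images by 90, 180 and 270 degrees to augment the annotated data.

Rotating a dataset with txt annotations by 90, 180 and 270 degrees.

Installing the Labelme annotation tool.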

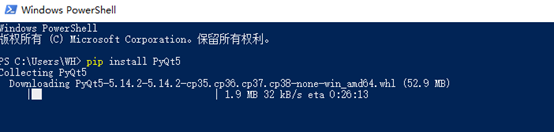

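Install PyQt

pip install PyQt5

Install the PIL package (Pillow)

pip install Pillow

Install labelme

1. The official installation command.

pip install labelme

The official labelme cannot open large images such as remote-sensing images. If an image fails to open, you can use my modified labelme instead.

labelme-master (modified to support large images).zip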

https://download.csdn.net/download/hhhhhhhhhhwwwwwwwwww/12047245

Converting a VOC-format dataset back into a Labelme-annotated dataset

import sys
import os.path as osp
import io
from labelme.logger import logger
from labelme import PY2
from labelme import QT4
import PIL.Image
import base64
from labelme import utils
import os
import cv2
import xml.etree.ElementTree as ET

module_path = os.path.abspath(os.path.join('..'))
if module_path not in sys.path:
    sys.path.append(module_path)

import json
from PIL import Image

# Lift Pillow's pixel limit so that large remote-sensing images can be opened.
Image.MAX_IMAGE_PIXELS = None

imageroot = 'RSOD/'
listDir = ['aircraft', 'oiltank']


def load_image_file(filename):
    try:
        image_pil = PIL.Image.open(filename)
    except IOError:
        logger.error('Failed opening image file: {}'.format(filename))
        return

    # apply orientation to image according to exif
    image_pil = utils.apply_exif_orientation(image_pil)

    with io.BytesIO() as f:
        ext = osp.splitext(filename)[1].lower()
        if PY2 and QT4:
            format = 'PNG'
        elif ext in ['.jpg', '.jpeg']:
            format = 'JPEG'
        else:
            format = 'PNG'
        image_pil.save(f, format=format)
        f.seek(0)
        return f.read()


def dict_json(flags, imageData, shapes, imagePath, fillColor=None, lineColor=None, imageHeight=100, imageWidth=100):
    '''
    :param imageData: str
    :param shapes: list
    :param imagePath: str
    :param fillColor: list
    :param lineColor: list
    :return: dict
    '''
    # Store only the file name; splitting on '/' and taking index 1 returned the sub-folder name.
    return {"version": "3.16.4", "flags": flags, "shapes": shapes, 'lineColor': lineColor, "fillColor": fillColor,
            'imagePath': osp.basename(imagePath), "imageData": imageData, 'imageHeight': imageHeight,
            'imageWidth': imageWidth}


# 1.json is an existing Labelme file; its flags and colors are reused for every output file.
with open('1.json') as template_file:
    data = json.load(template_file)

for subPath in listDir:
    xmlpathName = imageroot + subPath + '/Annotation/xml'
    imagepath = imageroot + subPath + '/JPEGImages'
    resultFile = os.listdir(xmlpathName)
    for file in resultFile:
        print(file)
        imagePH = imagepath + '/' + osp.splitext(file)[0] + '.jpg'
        print(imagePH)
        tree = ET.parse(xmlpathName + '/' + file)
        image = cv2.imread(imagePH)
        flags = data["flags"]
        lineColor = data["lineColor"]
        fillColor = data['fillColor']
        newshapes = []
        for elem in tree.iter():
            if 'object' in elem.tag:
                name = ''
                xminNode = 0
                yminNode = 0
                xmaxNode = 0
                ymaxNode = 0
                for attr in list(elem):
                    if 'name' in attr.tag:
                        name = attr.text
                    if 'bndbox' in attr.tag:
                        for dim in list(attr):
                            if 'xmin' in dim.tag:
                                xminNode = int(round(float(dim.text)))
                            if 'ymin' in dim.tag:
                                yminNode = int(round(float(dim.text)))
                            if 'xmax' in dim.tag:
                                xmaxNode = int(round(float(dim.text)))
                            if 'ymax' in dim.tag:
                                ymaxNode = int(round(float(dim.text)))
                line_color = None
                fill_color = None
                # A Labelme rectangle is stored as its top-left and bottom-right corners.
                newPoints = [[float(xminNode), float(yminNode)], [float(xmaxNode), float(ymaxNode)]]
                shape_type = 'rectangle'
                newshapes.append(
                    {"label": name, "line_color": line_color, "fill_color": fill_color, "points": newPoints,
                     "shape_type": shape_type, "flags": flags})
        imageData_90 = load_image_file(imagePH)
        imageData_90 = base64.b64encode(imageData_90).decode('utf-8')
        imageHeight = image.shape[0]
        imageWidth = image.shape[1]
        data_90 = dict_json(flags, imageData_90, newshapes, imagePH, fillColor, lineColor, imageHeight, imageWidth)
        json_file = imagePH[:-4] + '.json'
        with open(json_file, 'w') as out:
            json.dump(data_90, out)

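Converting a Labelme-annotated dataset to a VOC2007-format dataset.

VOC2007 folder layout

1) The JPEGImages folder

This folder contains both the training images and the test images, mixed together.

2) The Annotations folder

This folder stores the label files in xml format; each xml file corresponds to one image in the JPEGImages folder.

3) The ImageSets folder

Action stores human action data, which we do not use for now.

Layout stores human body part data, which we do not use for now.

Main stores the data for object recognition. It contains four files, test.txt, train.txt, val.txt and trainval.txt, which we generate later.

XML explanation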

<?xml version="1.0" encoding="utf-8"?>
<annotation>
    <source>
        <image>optic rs image</image>
        <annotation>Lmars RSDS2016</annotation>
        <flickrid>0</flickrid>
        <database>Lmars Detection Dataset of RS</database>
    </source>
    <object>
        <!-- the four bounding box coordinates: x and y of the top-left and bottom-right corners -->
        <bndbox>
            <xmin>690</xmin>
            <ymin>618</ymin>
            <ymax>678</ymax>
            <xmax>748</xmax>
        </bndbox>
        <!-- whether the object is hard to recognize: 0 means easy, 1 means difficult -->
        <difficult>0</difficult>
        <pose>Left</pose>
        <!-- object class -->
        <name>aircraft</name>
        <!-- whether the object is truncated: 0 means complete, 1 means incomplete -->
        <truncated>1</truncated>
    </object>
    <filename>aircraft_773.jpg</filename>
    <!-- whether the image has segmentation annotations: 0 means no, 1 means yes -->
    <segmented>0</segmented>
    <!-- image owner -->
    <owner>
        <name>Lmars, Wuhan University</name>
        <flickrid>I do not know</flickrid>
    </owner>
    <folder>RSDS2016</folder>
    <size>
        <width>1044</width>
        <depth>3</depth>
        <height>915</height>
    </size>
</annotation>
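As a quick check of the structure described above, the following is a minimal sketch of reading the class name and bounding box back out of such a file with the standard library. The function name `read_voc_boxes` and the shortened `SAMPLE_XML` string are illustrative assumptions, not part of the original scripts.

```python
import xml.etree.ElementTree as ET

# A shortened copy of the annotation above, kept inline so the sketch is self-contained.
SAMPLE_XML = """<annotation>
  <filename>aircraft_773.jpg</filename>
  <size><width>1044</width><height>915</height><depth>3</depth></size>
  <object>
    <name>aircraft</name>
    <difficult>0</difficult>
    <bndbox><xmin>690</xmin><ymin>618</ymin><ymax>678</ymax><xmax>748</xmax></bndbox>
  </object>
</annotation>"""


def read_voc_boxes(xml_text):
    """Return a list of (name, xmin, ymin, xmax, ymax) tuples (hypothetical helper)."""
    root = ET.fromstring(xml_text)
    boxes = []
    for obj in root.iter("object"):
        bndbox = obj.find("bndbox")
        # Look tags up by name, because their order inside <bndbox> is not fixed.
        coords = tuple(
            int(round(float(bndbox.findtext(tag))))
            for tag in ("xmin", "ymin", "xmax", "ymax")
        )
        boxes.append((obj.findtext("name"),) + coords)
    return boxes


print(read_voc_boxes(SAMPLE_XML))
```

Looking the four tags up by name avoids depending on their order, which differs between tools (the sample above lists ymax before xmax).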

Complete code:

import os
import numpy as np
import codecs
import json
from glob import glob
import cv2
import shutil
from sklearn.model_selection import train_test_split

# 1. Paths
labelme_path = "LabelmeData/"  # folder with the original labelme annotations
saved_path = "VOC2007/"  # output folder
isUseTest = True  # whether to create a test set

# 2. Create the required folders
if not os.path.exists(saved_path + "Annotations"):
    os.makedirs(saved_path + "Annotations")
if not os.path.exists(saved_path + "JPEGImages/"):
    os.makedirs(saved_path + "JPEGImages/")
if not os.path.exists(saved_path + "ImageSets/Main/"):
    os.makedirs(saved_path + "ImageSets/Main/")

# 3. Collect the files to process
files = glob(labelme_path + "*.json")
files = [i.replace("\\", "/").split("/")[-1].split(".json")[0] for i in files]
print(files)

# 4. Read the annotations and write the xml files
for json_file_ in files:
    json_filename = labelme_path + json_file_ + ".json"
    with open(json_filename, "r", encoding="utf-8") as f:
        json_file = json.load(f)
    height, width, channels = cv2.imread(labelme_path + json_file_ + ".jpg").shape
    with codecs.open(saved_path + "Annotations/" + json_file_ + ".xml", "w", "utf-8") as xml:
        xml.write('<annotation>\n')
        xml.write('\t<folder>' + 'WH_data' + '</folder>\n')
        xml.write('\t<filename>' + json_file_ + ".jpg" + '</filename>\n')
        xml.write('\t<source>\n')
        xml.write('\t\t<database>WH Data</database>\n')
        xml.write('\t\t<annotation>WH</annotation>\n')
        xml.write('\t\t<image>flickr</image>\n')
        xml.write('\t\t<flickrid>NULL</flickrid>\n')
        xml.write('\t</source>\n')
        xml.write('\t<owner>\n')
        xml.write('\t\t<flickrid>NULL</flickrid>\n')
        xml.write('\t\t<name>WH</name>\n')
        xml.write('\t</owner>\n')
        xml.write('\t<size>\n')
        xml.write('\t\t<width>' + str(width) + '</width>\n')
        xml.write('\t\t<height>' + str(height) + '</height>\n')
        xml.write('\t\t<depth>' + str(channels) + '</depth>\n')
        xml.write('\t</size>\n')
        xml.write('\t<segmented>0</segmented>\n')
        for multi in json_file["shapes"]:
            points = np.array(multi["points"])
            labelName = multi["label"]
            xmin = min(points[:, 0])
            xmax = max(points[:, 0])
            ymin = min(points[:, 1])
            ymax = max(points[:, 1])
            # Skip degenerate boxes with zero width or height.
            if xmax <= xmin or ymax <= ymin:
                continue
            xml.write('\t<object>\n')
            xml.write('\t\t<name>' + labelName + '</name>\n')
            xml.write('\t\t<pose>Unspecified</pose>\n')
            xml.write('\t\t<truncated>1</truncated>\n')
            xml.write('\t\t<difficult>0</difficult>\n')
            xml.write('\t\t<bndbox>\n')
            xml.write('\t\t\t<xmin>' + str(int(xmin)) + '</xmin>\n')
            xml.write('\t\t\t<ymin>' + str(int(ymin)) + '</ymin>\n')
            xml.write('\t\t\t<xmax>' + str(int(xmax)) + '</xmax>\n')
            xml.write('\t\t\t<ymax>' + str(int(ymax)) + '</ymax>\n')
            xml.write('\t\t</bndbox>\n')
            xml.write('\t</object>\n')
            print(json_filename, xmin, ymin, xmax, ymax, labelName)
        xml.write('</annotation>')

# 5. Copy the images to VOC2007/JPEGImages/
image_files = glob(labelme_path + "*.jpg")
print("copy image files to VOC2007/JPEGImages/")
for image in image_files:
    shutil.copy(image, saved_path + "JPEGImages/")

# 6. Split the file names into the txt lists
txtsavepath = saved_path + "ImageSets/Main/"
ftrainval = open(txtsavepath + 'trainval.txt', 'w')
ftest = open(txtsavepath + 'test.txt', 'w')
ftrain = open(txtsavepath + 'train.txt', 'w')
fval = open(txtsavepath + 'val.txt', 'w')
# Use saved_path here so the lists follow the output folder configured above.
total_files = glob(saved_path + "Annotations/*.xml")
total_files = [i.replace("\\", "/").split("/")[-1].split(".xml")[0] for i in total_files]
trainval_files = []
test_files = []
if isUseTest:
    trainval_files, test_files = train_test_split(total_files, test_size=0.15, random_state=55)
else:
    trainval_files = total_files
for file in trainval_files:
    ftrainval.write(file + "\n")
# split trainval into train and val
train_files, val_files = train_test_split(trainval_files, test_size=0.15, random_state=55)
# train
for file in train_files:
    ftrain.write(file + "\n")
# val
for file in val_files:
    fval.write(file + "\n")
for file in test_files:
    print(file)
    ftest.write(file + "\n")
ftrainval.close()
ftrain.close()
fval.close()
ftest.close()

Note: the training and validation sets are split with sklearn.model_selection.train_test_split.
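If scikit-learn is not available, the same kind of split can be approximated with the standard library. The sketch below is an assumption-laden alternative, not the method used in the script above: `split_names` is a hypothetical helper, and although it reuses the 0.15 ratios and seed 55 from the script, it will not reproduce the exact partition (or the exact rounding) that `train_test_split` produces.

```python
import random


def split_names(names, test_ratio=0.15, val_ratio=0.15, seed=55):
    """Split file names into train/val/test lists (hypothetical helper)."""
    names = sorted(names)  # sort first so the result does not depend on glob order
    rng = random.Random(seed)  # a private generator keeps the split reproducible
    rng.shuffle(names)
    n_test = int(round(len(names) * test_ratio))
    test, trainval = names[:n_test], names[n_test:]
    # The validation share is taken from what remains after removing the test set,
    # mirroring the two-step split in the script above.
    n_val = int(round(len(trainval) * val_ratio))
    val, train = trainval[:n_val], trainval[n_val:]
    return train, val, test


example_names = ["img_%03d" % i for i in range(100)]
train, val, test = split_names(example_names)
print(len(train), len(val), len(test))
```

Sorting before shuffling is a deliberate choice: `glob` does not guarantee an ordering, so without it the same seed could give different splits on different machines.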

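Converting Labelme to VOC, building a test set from the unannotated images, and counting the objects in each class.

Statistics on the annotated data.

Images without annotations are placed in the test folder.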

import os
import numpy as np
import codecs
import json
from glob import glob
import cv2
import shutil
from sklearn.model_selection import train_test_split

dicImg = {}  # number of annotated objects per class
imgLis = []  # file names of the images that have annotations

# 1. Paths
labelme_path = "USA/"  # folder with the original labelme annotations
saved_path = "VOC2007/"  # output folder

# 2. Create the required folders
if not os.path.exists(saved_path + "Annotations"):
    os.makedirs(saved_path + "Annotations")
if not os.path.exists(saved_path + "JPEGImages/"):
    os.makedirs(saved_path + "JPEGImages/")
if not os.path.exists(saved_path + "test/"):
    os.makedirs(saved_path + "test/")
if not os.path.exists(saved_path + "ImageSets/Main/"):
    os.makedirs(saved_path + "ImageSets/Main/")

# 3. Collect the files to process
files = glob(labelme_path + "*.json")
files = [i.replace("\\", "/").split("/")[-1].split(".json")[0] for i in files]
print(files)

# 4. Read the annotations and write the xml files
for json_file_ in files:
    json_filename = labelme_path + json_file_ + ".json"
    with open(json_filename, "r", encoding="utf-8") as f:
        json_file = json.load(f)
    height, width, channels = cv2.imread(labelme_path + json_file_ + ".jpg").shape
    with codecs.open(saved_path + "Annotations/" + json_file_ + ".xml", "w", "utf-8") as xml:
        xml.write('<annotation>\n')
        xml.write('\t<folder>' + 'UAV_data' + '</folder>\n')
        xml.write('\t<filename>' + json_file_ + ".jpg" + '</filename>\n')
        xml.write('\t<source>\n')
        xml.write('\t\t<database>The UAV autolanding</database>\n')
        xml.write('\t\t<annotation>UAV AutoLanding</annotation>\n')
        xml.write('\t\t<image>flickr</image>\n')
        xml.write('\t\t<flickrid>NULL</flickrid>\n')
        xml.write('\t</source>\n')
        xml.write('\t<owner>\n')
        xml.write('\t\t<flickrid>NULL</flickrid>\n')
        xml.write('\t\t<name>wanghao</name>\n')
        xml.write('\t</owner>\n')
        xml.write('\t<size>\n')
        xml.write('\t\t<width>' + str(width) + '</width>\n')
        xml.write('\t\t<height>' + str(height) + '</height>\n')
        xml.write('\t\t<depth>' + str(channels) + '</depth>\n')
        xml.write('\t</size>\n')
        xml.write('\t<segmented>0</segmented>\n')
        for multi in json_file["shapes"]:
            points = np.array(multi["points"])
            labelName = multi["label"].lower()
            # Count every annotated object per (lower-cased) class name.
            dicImg[labelName] = dicImg.get(labelName, 0) + 1
            xmin = min(points[:, 0])
            xmax = max(points[:, 0])
            ymin = min(points[:, 1])
            ymax = max(points[:, 1])
            label = multi["label"]
            # Skip degenerate boxes with zero width or height.
            if xmax <= xmin or ymax <= ymin:
                continue
            xml.write('\t<object>\n')
            xml.write('\t\t<name>' + labelName + '</name>\n')
            xml.write('\t\t<pose>Unspecified</pose>\n')
            xml.write('\t\t<truncated>1</truncated>\n')
            xml.write('\t\t<difficult>0</difficult>\n')
            xml.write('\t\t<bndbox>\n')
            xml.write('\t\t\t<xmin>' + str(int(xmin)) + '</xmin>\n')
            xml.write('\t\t\t<ymin>' + str(int(ymin)) + '</ymin>\n')
            xml.write('\t\t\t<xmax>' + str(int(xmax)) + '</xmax>\n')
            xml.write('\t\t\t<ymax>' + str(int(ymax)) + '</ymax>\n')
            xml.write('\t\t</bndbox>\n')
            xml.write('\t</object>\n')
            print(json_filename, xmin, ymin, xmax, ymax, label)
        xml.write('</annotation>')
    imgLis.append(json_file_ + '.jpg')
    shutil.copy(labelme_path + json_file_ + '.jpg', saved_path + "JPEGImages/")
print(imgLis)

# 5. Copy the images without annotations to VOC2007/test/ and list them in test.txt
image_files = glob(labelme_path + "*.jpg")
txtsavepath = saved_path + "ImageSets/Main/"
print("copy unannotated image files to VOC2007/test/")
ftest = open(txtsavepath + 'test.txt', 'w')
for image in image_files:
    # os.path.basename works on every platform; splitting on '\\' only worked on Windows.
    if os.path.basename(image) not in imgLis:
        print(image)
        shutil.copy(image, saved_path + "test/")
        ftest.write(image.replace("\\", "/").split("/")[-1].split(".jpg")[0] + "\n")

# 6. Split the file names into the txt lists
ftrainval = open(txtsavepath + 'trainval.txt', 'w')
ftrain = open(txtsavepath + 'train.txt', 'w')
fval = open(txtsavepath + 'val.txt', 'w')
# Use saved_path here so the lists follow the output folder configured above.
total_files = glob(saved_path + "Annotations/*.xml")
total_files = [i.replace("\\", "/").split("/")[-1].split(".xml")[0] for i in total_files]
for file in total_files:
    ftrainval.write(file + "\n")
# split trainval into train and val
train_files, val_files = train_test_split(total_files, test_size=0.15, random_state=42)
# train
for file in train_files:
    ftrain.write(file + "\n")
# val
for file in val_files:
    fval.write(file + "\n")
ftrainval.close()
ftrain.close()
fval.close()
print(dicImg)
ftest.close()

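Converting Labelme-annotated data to a txt-format dataset.

Each image has one txt file, and each line of the txt file describes one annotated object.

Format: class xmin ymin xmax ymax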
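A line in this format can be read back as sketched below. `parse_label_line` is a hypothetical helper introduced only for illustration, and it assumes that the class name contains no spaces.

```python
def parse_label_line(line):
    """Parse 'class xmin ymin xmax ymax' into a tuple (hypothetical helper)."""
    parts = line.split()  # split() also discards the trailing space and newline
    if len(parts) != 5:
        raise ValueError("expected 5 fields, got %d: %r" % (len(parts), line))
    label = parts[0]
    xmin, ymin, xmax, ymax = (float(value) for value in parts[1:])
    return label, xmin, ymin, xmax, ymax


print(parse_label_line("aircraft 690.0 618.0 748.0 678.0 \n"))
```

Coordinates are parsed as floats because Labelme stores points as floating-point values.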

import json
import os
from glob import glob
import shutil


# Convert labelme json files to txt files (one "label x y x y" line per shape).
def custombasename(fullname):
    return os.path.basename(os.path.splitext(fullname)[0])


IN_PATH = 'USA'
OUT_PATH = 'labeltxt'
if not os.path.exists(OUT_PATH):
    os.makedirs(OUT_PATH)

file_list = glob(IN_PATH + '/*.json')
for i in range(len(file_list)):
    with open(file_list[i]) as f:
        label_str = f.read()
    label_dict = json.loads(label_str)  # load the json file into a dict
    # splitext keeps working when the path itself contains dots
    imgepath = os.path.splitext(file_list[i])[0] + '.jpg'
    # path of the output txt file
    out_file = OUT_PATH + '/' + custombasename(file_list[i]) + '.txt'
    shutil.copy(imgepath, OUT_PATH)
    # write the label followed by the coordinates of each point
    out_str = ''
    for shape_dict in label_dict['shapes']:
        out_str += shape_dict['label'] + ' '
        points = shape_dict['points']
        for p in points:
            out_str += (str(p[0]) + ' ' + str(p[1]) + ' ')
        out_str += '\n'
    with open(out_file, 'w') as fout:
        fout.write(out_str)
    print('%d/%d' % (i + 1, len(file_list)))

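Rotating Labelme-annotated images by 90, 180 and 270 degrees to augment the annotated data.

When building a remote-sensing object detection dataset, the objects are seen from above and can appear at any orientation.

Rotating the annotated objects to different angles enriches the dataset and reduces the annotation workload.

Take the aircraft in the figure above: its nose points diagonally downward, but real aircraft can face any direction, so without rotation the model's detection ability suffers. The figure below shows the result of a 90-degree rotation.

Required packages:

labelme

scipy (version 1.0.0)

pyqt5

The hardest part of rotation is recomputing the annotated points afterwards so that the coordinates stay consistent.

Coordinate transformation after a 90-degree rotation:

points = shapelabel['points']  # the original coordinates

# Rotate by 90 degrees and remap the coordinates; w is the width of the original image.
newPoints = [[float(points[0][1]), w - float(points[1][0])],
             [float(points[1][1]), w - float(points[0][0])]]

Coordinate transformation after a 180-degree rotation:

points = shapelabel['points']

# Rotate by 180 degrees and remap the coordinates; h is the height of the original image.
newPoints = [[w - float(points[1][0]), h - float(points[1][1])],
             [w - float(points[0][0]), h - float(points[0][1])]]

Coordinate transformation after a 270-degree rotation:

points = shapelabel['points']

newPoints = [[h - float(points[1][1]), float(points[0][0])],
             [h - float(points[0][1]), float(points[1][0])]]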
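The three transformations above can be collected into one function, which makes them easy to check in isolation. This is a minimal sketch: `rotate_box` is a hypothetical name, the box is assumed to be given as its top-left and bottom-right corners, and the rotation is assumed to be counter-clockwise, as the formulas above imply.

```python
def rotate_box(box, angle, w, h):
    """Map a box [[x0, y0], [x1, y1]] to its position after rotating the image.

    w and h are the width and height of the ORIGINAL image (hypothetical helper).
    """
    (x0, y0), (x1, y1) = box
    if angle == 90:
        # The old right edge becomes the new top edge.
        return [[y0, w - x1], [y1, w - x0]]
    if angle == 180:
        # Both axes flip, so the two corners swap roles.
        return [[w - x1, h - y1], [w - x0, h - y0]]
    if angle == 270:
        # The old bottom edge becomes the new left edge.
        return [[h - y1, x0], [h - y0, x1]]
    raise ValueError("angle must be 90, 180 or 270")


box = [[10, 20], [30, 40]]
print(rotate_box(box, 90, w=100, h=50))
print(rotate_box(box, 180, w=100, h=50))
print(rotate_box(box, 270, w=100, h=50))
```

A useful sanity check is that rotating by 90 degrees twice, passing the swapped width and height the second time, gives the same box as a single 180-degree rotation.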

The complete code is as follows:

# Requires scipy 1.0.0: scipy.misc.imread and scipy.misc.imsave were removed in later releases.
import scipy
from scipy import misc
import os
import glob
import PIL.Image
from labelme.logger import logger
from labelme import PY2
from labelme import QT4
import io
import json
import os.path as osp
from scipy import ndimage
import base64
from labelme import utils


def load_image_file(filename):
    try:
        image_pil = PIL.Image.open(filename)
    except IOError:
        logger.error('Failed opening image file: {}'.format(filename))
        return

    # apply orientation to image according to exif
    image_pil = utils.apply_exif_orientation(image_pil)

    with io.BytesIO() as f:
        ext = osp.splitext(filename)[1].lower()
        if PY2 and QT4:
            format = 'PNG'
        elif ext in ['.jpg', '.jpeg']:
            format = 'JPEG'
        else:
            format = 'PNG'
        image_pil.save(f, format=format)
        f.seek(0)
        return f.read()


def dict_json(flags, imageData, shapes, imagePath, fillColor=None, lineColor=None, imageHeight=100, imageWidth=100):
    '''
    :param imageData: str
    :param shapes: list
    :param imagePath: str
    :param fillColor: list
    :param lineColor: list
    :return: dict
    '''
    # os.path.basename works on every platform; splitting on '\\' only worked on Windows.
    return {"version": "3.16.4", "flags": flags, "shapes": shapes, 'lineColor': lineColor, "fillColor": fillColor,
            'imagePath': osp.basename(imagePath), "imageData": imageData, 'imageHeight': imageHeight,
            'imageWidth': imageWidth}


def get_image_paths(folder):
    return glob.glob(os.path.join(folder, '*.jpg'))


def create_read_img(filename):
    with open(osp.splitext(filename)[0] + '.json') as f:
        data = json.load(f)
    shapes = data["shapes"]
    version = data["version"]
    flags = data["flags"]
    lineColor = data["lineColor"]
    fillColor = data['fillColor']
    newshapes = []
    im = misc.imread(filename)
    h, w, d = im.shape

    # Rotate by 90 degrees: width and height are swapped.
    img_rote_90 = ndimage.rotate(im, 90)
    img_path_90 = filename[:-4] + '_90.jpg'
    scipy.misc.imsave(img_path_90, img_rote_90)
    imageData_90 = load_image_file(img_path_90)
    imageData_90 = base64.b64encode(imageData_90).decode('utf-8')
    imageHeight = w
    imageWidth = h
    for shapelabel in shapes:
        newLabel = shapelabel['label']
        newline_color = shapelabel['line_color']
        newfill_color = shapelabel['fill_color']
        points = shapelabel['points']
        newPoints = [[float(points[0][1]), w - float(points[1][0])],
                     [float(points[1][1]), w - float(points[0][0])]]
        newshape_type = shapelabel['shape_type']
        newflags = shapelabel['flags']
        newshapes.append(
            {'label': newLabel, 'line_color': newline_color, 'fill_color': newfill_color, 'points': newPoints,
             'shape_type': newshape_type, 'flags': newflags})
    data_90 = dict_json(flags, imageData_90, newshapes, img_path_90, fillColor, lineColor, imageHeight, imageWidth)
    json_file = img_path_90[:-4] + '.json'
    with open(json_file, 'w') as out:
        json.dump(data_90, out)

    # Rotate by 180 degrees: the image size is unchanged.
    img_rote_180 = ndimage.rotate(im, 180)
    img_path_180 = filename[:-4] + '_180.jpg'
    scipy.misc.imsave(img_path_180, img_rote_180)
    imageData_180 = load_image_file(img_path_180)
    imageData_180 = base64.b64encode(imageData_180).decode('utf-8')
    imageHeight = h
    imageWidth = w
    newshapes = []
    for shapelabel in shapes:
        newLabel = shapelabel['label']
        newline_color = shapelabel['line_color']
        newfill_color = shapelabel['fill_color']
        points = shapelabel['points']
        newPoints = [[w - float(points[1][0]), h - float(points[1][1])],
                     [w - float(points[0][0]), h - float(points[0][1])]]
        newshape_type = shapelabel['shape_type']
        newflags = shapelabel['flags']
        newshapes.append(
            {'label': newLabel, 'line_color': newline_color, 'fill_color': newfill_color, 'points': newPoints,
             'shape_type': newshape_type, 'flags': newflags})
    data_180 = dict_json(flags, imageData_180, newshapes, img_path_180, fillColor, lineColor, imageHeight, imageWidth)
    json_file = img_path_180[:-4] + '.json'
    with open(json_file, 'w') as out:
        json.dump(data_180, out)

    # Rotate by 270 degrees: width and height are swapped.
    img_rote_270 = ndimage.rotate(im, 270)
    img_path_270 = filename[:-4] + '_270.jpg'
    scipy.misc.imsave(img_path_270, img_rote_270)
    imageData_270 = load_image_file(img_path_270)
    imageData_270 = base64.b64encode(imageData_270).decode('utf-8')
    imageHeight = w
    imageWidth = h
    newshapes = []
    for shapelabel in shapes:
        newLabel = shapelabel['label']
        newline_color = shapelabel['line_color']
        newfill_color = shapelabel['fill_color']
        points = shapelabel['points']
        newPoints = [[h - float(points[1][1]), float(points[0][0])],
                     [h - float(points[0][1]), float(points[1][0])]]
        newshape_type = shapelabel['shape_type']
        newflags = shapelabel['flags']
        newshapes.append(
            {'label': newLabel, 'line_color': newline_color, 'fill_color': newfill_color, 'points': newPoints,
             'shape_type': newshape_type, 'flags': newflags})
    data_270 = dict_json(flags, imageData_270, newshapes, img_path_270, fillColor, lineColor, imageHeight, imageWidth)
    json_file = img_path_270[:-4] + '.json'
    with open(json_file, 'w') as out:
        json.dump(data_270, out)
    print(filename)


img_path = 'USA'  # the folder that contains all the images
imgs = get_image_paths(img_path)
print(imgs)
for i in imgs:
    create_read_img(i)

Rotating a dataset with txt annotations by 90, 180 and 270 degrees

# Requires scipy 1.0.0: scipy.misc.imread and scipy.misc.imsave were removed in later releases.
import scipy
from scipy import misc
import os
import glob
from scipy import ndimage


def get_image_paths(folder):
    return glob.glob(os.path.join(folder, '*.jpg'))


def create_read_img(filename):
    # Flatten every "label x1 y1 x2 y2" line into one list of tokens.
    objectList = []
    with open(os.path.splitext(filename)[0] + ".txt") as f:
        for line in f.readlines():
            # split() without arguments also drops the trailing newline
            for aa in line.split():
                objectList.append(aa)
    im = misc.imread(filename)
    h, w, d = im.shape

    # Rotate by 90 degrees
    img_rote_90 = ndimage.rotate(im, 90)
    img_path_90 = filename[:-4] + '_90.jpg'
    scipy.misc.imsave(img_path_90, img_rote_90)
    img_path_90_txt = img_path_90[:-4] + '.txt'
    outLable = ''
    for i in range(int(len(objectList) / 5)):
        object_label = objectList[i * 5]
        outLable += object_label + ' '
        object_x1 = objectList[i * 5 + 1]
        object_y1 = objectList[i * 5 + 2]
        object_x2 = objectList[i * 5 + 3]
        object_y2 = objectList[i * 5 + 4]
        outLable += object_y1 + ' '
        outLable += str(w - float(object_x2)) + ' '
        outLable += object_y2 + ' '
        outLable += str(w - float(object_x1)) + '\n'
    with open(img_path_90_txt, 'w') as fout:
        fout.write(outLable)

    # Rotate by 180 degrees
    img_rote_180 = ndimage.rotate(im, 180)
    img_path_180 = filename[:-4] + '_180.jpg'
    scipy.misc.imsave(img_path_180, img_rote_180)
    img_path_180_txt = img_path_180[:-4] + '.txt'
    outLable = ''
    for i in range(int(len(objectList) / 5)):
        object_label = objectList[i * 5]
        outLable += object_label + ' '
        object_x1 = objectList[i * 5 + 1]
        object_y1 = objectList[i * 5 + 2]
        object_x2 = objectList[i * 5 + 3]
        object_y2 = objectList[i * 5 + 4]
        outLable += str(w - float(object_x2)) + ' '
        outLable += str(h - float(object_y2)) + ' '
        outLable += str(w - float(object_x1)) + ' '
        outLable += str(h - float(object_y1)) + '\n'
    with open(img_path_180_txt, 'w') as fout:
        fout.write(outLable)

    # Rotate by 270 degrees
    img_rote_270 = ndimage.rotate(im, 270)
    img_path_270 = filename[:-4] + '_270.jpg'
    scipy.misc.imsave(img_path_270, img_rote_270)
    img_path_270_txt = img_path_270[:-4] + '.txt'
    outLable = ''
    for i in range(int(len(objectList) / 5)):
        object_label = objectList[i * 5]
        outLable += object_label + ' '
        object_x1 = objectList[i * 5 + 1]
        object_y1 = objectList[i * 5 + 2]
        object_x2 = objectList[i * 5 + 3]
        object_y2 = objectList[i * 5 + 4]
        outLable += str(h - float(object_y2)) + ' '
        outLable += object_x1 + ' '
        outLable += str(h - float(object_y1)) + ' '
        outLable += object_x2 + '\n'
    with open(img_path_270_txt, 'w') as fout:
        fout.write(outLable)
    print(filename)


img_path = 'CutResult'  # the folder that contains all the images
imgs = get_image_paths(img_path)
print(imgs)
for i in imgs:
    create_read_img(i)
