HBase's built-in tools for administration, analysis, repair, and debugging: hbck and hfile

Posted: 2023-09-11 14:22:28

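1. hbck

- The hbck tool checks and repairs HBase's underlying file-system state. It verifies that the in-memory state of the Master and RegionServers is consistent with the state of the data on HDFS, detects region holes, and helps locate metadata inconsistencies

- Command: hbase hbck -help prints the available options: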

Usage: fsck [opts] {only tables}

where [opts] are:

-help Display help options (this)

-details Display full report of all regions.

-timelag <timeInSeconds> Process only regions that have not experienced any metadata updates in the last <timeInSeconds> seconds.

-sleepBeforeRerun <timeInSeconds> Sleep this many seconds before checking if the fix worked if run with -fix

-summary Print only summary of the tables and status.

-metaonly Only check the state of the hbase:meta table.

-sidelineDir <hdfs://> HDFS path to backup existing meta.

-boundaries Verify that regions boundaries are the same between META and store files.

-exclusive Abort if another hbck is exclusive or fixing.

Datafile Repair options: (expert features, use with caution!)

-checkCorruptHFiles Check all Hfiles by opening them to make sure they are valid

-sidelineCorruptHFiles Quarantine corrupted HFiles. implies -checkCorruptHFiles

Replication options

-fixReplication Deletes replication queues for removed peers

Metadata Repair options supported as of version 2.0: (expert features, use with caution!)

-fixVersionFile Try to fix missing hbase.version file in hdfs.

-fixReferenceFiles Try to offline lingering reference store files

-fixHFileLinks Try to offline lingering HFileLinks

-noHdfsChecking Don't load/check region info from HDFS. Assumes hbase:meta region info is good. Won't check/fix any HDFS issue, e.g. hole, orphan, or overlap

-ignorePreCheckPermission ignore filesystem permission pre-check

NOTE: Following options are NOT supported as of HBase version 2.0+.

UNSUPPORTED Metadata Repair options: (expert features, use with caution!)

-fix Try to fix region assignments. This is for backwards compatiblity

-fixAssignments Try to fix region assignments. Replaces the old -fix

-fixMeta Try to fix meta problems. This assumes HDFS region info is good.

-fixHdfsHoles Try to fix region holes in hdfs.

-fixHdfsOrphans Try to fix region dirs with no .regioninfo file in hdfs

-fixTableOrphans Try to fix table dirs with no .tableinfo file in hdfs (online mode only)

-fixHdfsOverlaps Try to fix region overlaps in hdfs.

-maxMerge <n> When fixing region overlaps, allow at most <n> regions to merge. (n=5 by default)

-sidelineBigOverlaps When fixing region overlaps, allow to sideline big overlaps

-maxOverlapsToSideline <n> When fixing region overlaps, allow at most <n> regions to sideline per group. (n=2 by default)

-fixSplitParents Try to force offline split parents to be online.

-removeParents Try to offline and sideline lingering parents and keep daughter regions.

-fixEmptyMetaCells Try to fix hbase:meta entries not referencing any region (empty REGIONINFO_QUALIFIER rows)

UNSUPPORTED Metadata Repair shortcuts

-repair Shortcut for -fixAssignments -fixMeta -fixHdfsHoles -fixHdfsOrphans -fixHdfsOverlaps -fixVersionFile -sidelineBigOverlaps -fixReferenceFiles-fixHFileLinks

-repairHoles Shortcut for -fixAssignments -fixMeta -fixHdfsHoles

Command: hbase hbck -details prints a full report of all regions

Command: hbase hbck -metaonly checks only the state of the hbase:meta table

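Repair shortcuts (the help output above lists -repair and -repairHoles as unsupported in HBase 2.0+, so these apply only to earlier versions):

- Command: hbase hbck -repair -ignorePreCheckPermission

- Command: hbase hbck -repairHoles -ignorePreCheckPermission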
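Before attempting any repair, it is usually safer to run hbck read-only and look at its summary. The following is a minimal sketch of that step. The function name hbck_check is hypothetical, and it assumes the report contains a "Status: OK" line when the cluster is consistent, which should be verified against your HBase version.

```shell
# Sketch: run hbck read-only and report whether it found inconsistencies.
# Assumption: the hbck report contains a "Status: OK" summary line when
# the cluster is consistent; check this against your HBase version.
hbck_check() {
  # Pass read-only options through, e.g. -details or -metaonly.
  hbck_report="$(hbase hbck "$@" 2>&1)"
  if printf '%s\n' "$hbck_report" | grep -q 'Status: OK'; then
    echo "hbck: no inconsistencies reported"
    return 0
  fi
  # Show the end of the report, where the summary is printed.
  printf '%s\n' "$hbck_report" | tail -n 20
  echo "hbck: inconsistencies reported (or the check did not complete)"
  return 1
}

# Usage: hbck_check -details
```

Because the function returns non-zero when the summary line is missing, it can gate a later step in a maintenance script.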

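2. HFile

- The hfile tool displays the contents of an HFile. The command is hbase hfile; running it without arguments prints its options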
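As an illustration, the sketch below wraps one common invocation: -m prints the file's metadata, -s prints summary statistics, and -f names the file to read. The function name inspect_hfile is hypothetical, and the path in the usage comment is a placeholder that assumes the default /hbase root directory layout.

```shell
# Sketch: print metadata and summary statistics for a single HFile.
inspect_hfile() {
  if [ -z "${1:-}" ]; then
    echo "usage: inspect_hfile <path-to-hfile>" >&2
    return 2
  fi
  # -m: print the file's metadata, -s: print statistics, -f: file to read
  hbase hfile -m -s -f "$1"
}

# Usage (placeholder path; substitute a real store file):
#   inspect_hfile /hbase/data/default/PerTest/<region>/<family>/<hfile>
```

Adding -p would also print every key/value pair, which can be very verbose on large files.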

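3. snapshots

- HBase snapshots are feature-rich, and taking one does not require shutting down the cluster

- A snapshot completes within seconds and has almost no performance impact on the cluster. It also takes up negligible space, because it records references to existing HFiles rather than copying the data

- To enable snapshots, set hbase.snapshot.enabled to true in hbase-site.xml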

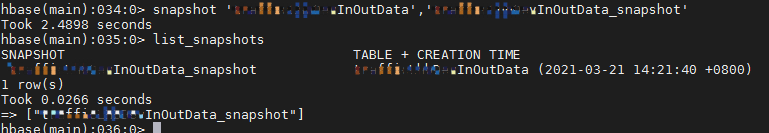

- Command: snapshot 'PerTest','snapPerTest' (run in the HBase shell) creates a snapshot named snapPerTest from the table PerTest

- Command: list_snapshots lists the existing snapshots

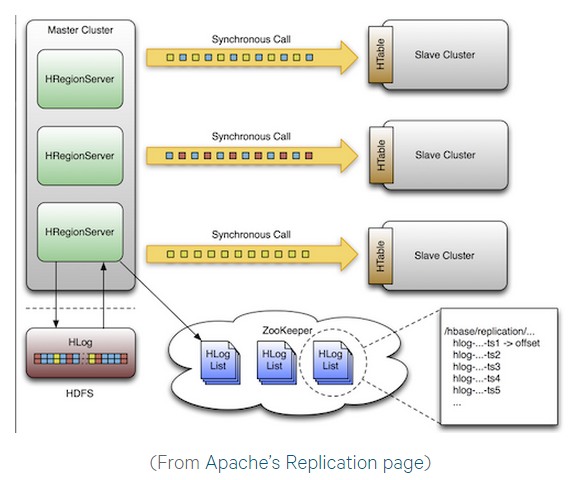
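The two shell commands above can also be run from a script rather than an interactive session. The following is a rough sketch, assuming hbase shell -n (non-interactive mode) is available in your version. The function name snapshot_table is hypothetical.

```shell
# Sketch: take a snapshot of a table and list snapshots, non-interactively.
snapshot_table() {
  snap_table="$1"
  snap_name="$2"
  # hbase shell -n reads commands from stdin instead of starting a prompt.
  printf "snapshot '%s', '%s'\nlist_snapshots\n" "$snap_table" "$snap_name" |
    hbase shell -n
}

# Usage: snapshot_table PerTest snapPerTest
```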

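4. Replication

- HBase replication is another low-overhead backup tool. It is configured at the column-family level, runs in the background, and keeps all edits synchronized across the clusters in the replication chain

- Replication has three modes: master->slave, master<->master, and cyclic. This gives you the flexibility to ingest data in any data center and be sure that it is replicated to all the other data centers. If one data center suffers a catastrophic failure, client applications can use DNS to redirect to a standby location

- Note: for a table that already exists, you must copy the source table to the destination manually, using one of the other methods described in this article. Replication only applies to writes and edits made after it is enabled

- Replication is a robust, fault-tolerant process. It provides "eventual consistency": at any given moment the most recent edits to a table may not yet have been applied to every replica, but all replicas are guaranteed to converge eventually.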

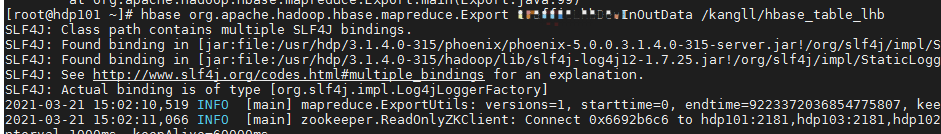
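To make the column-family granularity concrete, here is a hedged sketch of enabling master->slave replication for one column family from the source cluster. It assumes the add_peer syntax with CLUSTER_KEY used by recent HBase shells; older versions take the cluster key as a plain second argument. The function name enable_replication, the ZooKeeper quorum, and the column family name in the usage comment are all hypothetical.

```shell
# Sketch: register a peer cluster and turn on replication for one family.
enable_replication() {
  repl_peer_id="$1"      # peer id, e.g. 1
  repl_cluster_key="$2"  # <zk quorum>:<zk port>:<znode parent> of the peer
  repl_table="$3"
  repl_family="$4"
  hbase shell -n <<EOF
add_peer '$repl_peer_id', CLUSTER_KEY => "$repl_cluster_key"
alter '$repl_table', {NAME => '$repl_family', REPLICATION_SCOPE => 1}
list_peers
EOF
}

# Usage (hypothetical quorum and family name):
#   enable_replication 1 "zk1,zk2,zk3:2181:/hbase" PerTest cf
```

The table and column family must already exist on the peer cluster, and rows written before this point still have to be copied by other means.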

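5. Export

- Export is a built-in HBase utility that makes it easy to dump the contents of an HBase table to SequenceFiles on HDFS

- It runs a MapReduce job that reads every row of the specified table through the HBase API and writes the data to the specified HDFS directory

- Command syntax: hbase org.apache.hadoop.hbase.mapreduce.Export <tablename> <outputdir>

The exported files are written to <outputdir> on HDFS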
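A minimal sketch of the export step follows, using the PerTest table from earlier sections and a hypothetical output directory. The function name export_table is also hypothetical. It lists the output directory only if the MapReduce job succeeds.

```shell
# Sketch: export a table to HDFS, then list the resulting files.
export_table() {
  exp_table="$1"
  exp_outdir="$2"   # must not already exist; the job creates it
  hbase org.apache.hadoop.hbase.mapreduce.Export "$exp_table" "$exp_outdir" &&
    hdfs dfs -ls "$exp_outdir"
}

# Usage (hypothetical output path):
#   export_table PerTest /tmp/hbase-export/PerTest
```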

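6. CopyTable

- Like Export, CopyTable uses the HBase API in a MapReduce job to read from the source table. The difference is that its output is another HBase table, which can be on the local cluster or on a remote one

- It writes to the destination table row by row with individual puts. If the table is very large, CopyTable fills up the memstores on the destination region servers, which triggers flushes and eventually compactions, along with garbage-collection pressure

- You also have to account for the performance impact of running a MapReduce job against HBase. For large data sets this approach is far from ideal

- Command syntax: hbase org.apache.hadoop.hbase.mapreduce.CopyTable --new.name=PerTest2 PerTest (copies the table PerTest to another table, PerTest2, in the same cluster)

- Note: when using --new.name=xxx, the new table must already have been created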
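The local and remote cases mentioned above differ only in one option. The sketch below covers both, assuming the --peer.adr option is used to address a remote cluster. The function name copy_table and the ZooKeeper address in the usage comment are hypothetical.

```shell
# Sketch: copy a table within the cluster, or to a remote cluster when a
# peer address (<zk quorum>:<zk port>:<znode parent>) is given.
# The destination table must already exist with matching column families.
copy_table() {
  ct_src="$1"
  ct_dst="$2"
  ct_peer="${3:-}"
  if [ -n "$ct_peer" ]; then
    hbase org.apache.hadoop.hbase.mapreduce.CopyTable \
      --peer.adr="$ct_peer" --new.name="$ct_dst" "$ct_src"
  else
    hbase org.apache.hadoop.hbase.mapreduce.CopyTable \
      --new.name="$ct_dst" "$ct_src"
  fi
}

# Usage:
#   copy_table PerTest PerTest2
#   copy_table PerTest PerTest2 "zk1,zk2,zk3:2181:/hbase"   # hypothetical peer
```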
