ML之Xgboost:利用Xgboost模型(7f-CrVa+网格搜索调参)对数据集(比马印第安人糖尿病)进行二分类预测

2023-09-14 09:04:45 时间

ML之Xgboost:利用Xgboost模型(7f-CrVa+网格搜索调参)对数据集(比马印第安人糖尿病)进行二分类预测

目录

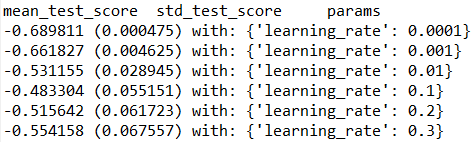

输出结果

![]()

设计思路

核心代码

grid_search = GridSearchCV(model, param_grid, scoring="neg_log_loss", n_jobs=-1, cv=kfold)

grid_result = grid_search.fit(X, Y)

param_grid = dict(learning_rate=learning_rate)

kfold = StratifiedKFold(n_splits=10, shuffle=True, random_state=7)

class GridSearchCV(BaseSearchCV):

"""Exhaustive search over specified parameter values for an estimator.

Important members are fit, predict.

GridSearchCV implements a "fit" and a "score" method.

It also implements "predict", "predict_proba", "decision_function",

"transform" and "inverse_transform" if they are implemented in the

estimator used.

The parameters of the estimator used to apply these methods are

optimized

by cross-validated grid-search over a parameter grid.

Read more in the :ref:`User Guide <grid_search>`.

Parameters

----------

estimator : estimator object.

This is assumed to implement the scikit-learn estimator interface.

Either estimator needs to provide a ``score`` function,

or ``scoring`` must be passed.

param_grid : dict or list of dictionaries

Dictionary with parameters names (string) as keys and lists of

parameter settings to try as values, or a list of such

dictionaries, in which case the grids spanned by each dictionary

in the list are explored. This enables searching over any sequence

of parameter settings.

scoring : string, callable, list/tuple, dict or None, default: None

A single string (see :ref:`scoring_parameter`) or a callable

(see :ref:`scoring`) to evaluate the predictions on the test set.

For evaluating multiple metrics, either give a list of (unique) strings

or a dict with names as keys and callables as values.

NOTE that when using custom scorers, each scorer should return a

single

value. Metric functions returning a list/array of values can be wrapped

into multiple scorers that return one value each.

See :ref:`multimetric_grid_search` for an example.

If None, the estimator's default scorer (if available) is used.

fit_params : dict, optional

Parameters to pass to the fit method.

.. deprecated:: 0.19

``fit_params`` as a constructor argument was deprecated in version

0.19 and will be removed in version 0.21. Pass fit parameters to

the ``fit`` method instead.

n_jobs : int, default=1

Number of jobs to run in parallel.

pre_dispatch : int, or string, optional

Controls the number of jobs that get dispatched during parallel

execution. Reducing this number can be useful to avoid an

explosion of memory consumption when more jobs get dispatched

than CPUs can process. This parameter can be:

- None, in which case all the jobs are immediately

created and spawned. Use this for lightweight and

fast-running jobs, to avoid delays due to on-demand

spawning of the jobs

- An int, giving the exact number of total jobs that are

spawned

- A string, giving an expression as a function of n_jobs,

as in '2*n_jobs'

iid : boolean, default=True

If True, the data is assumed to be identically distributed across

the folds, and the loss minimized is the total loss per sample,

and not the mean loss across the folds.

cv : int, cross-validation generator or an iterable, optional

Determines the cross-validation splitting strategy.

Possible inputs for cv are:

- None, to use the default 3-fold cross validation,

- integer, to specify the number of folds in a `(Stratified)KFold`,

- An object to be used as a cross-validation generator.

- An iterable yielding train, test splits.

For integer/None inputs, if the estimator is a classifier and ``y`` is

either binary or multiclass, :class:`StratifiedKFold` is used. In all

other cases, :class:`KFold` is used.

Refer :ref:`User Guide <cross_validation>` for the various

cross-validation strategies that can be used here.

refit : boolean, or string, default=True

Refit an estimator using the best found parameters on the whole

dataset.

For multiple metric evaluation, this needs to be a string denoting the

scorer is used to find the best parameters for refitting the estimator

at the end.

The refitted estimator is made available at the ``best_estimator_``

attribute and permits using ``predict`` directly on this

``GridSearchCV`` instance.

Also for multiple metric evaluation, the attributes ``best_index_``,

``best_score_`` and ``best_parameters_`` will only be available if

``refit`` is set and all of them will be determined w.r.t this specific

scorer.

See ``scoring`` parameter to know more about multiple metric

evaluation.

verbose : integer

Controls the verbosity: the higher, the more messages.

error_score : 'raise' (default) or numeric

Value to assign to the score if an error occurs in estimator fitting.

If set to 'raise', the error is raised. If a numeric value is given,

FitFailedWarning is raised. This parameter does not affect the refit

step, which will always raise the error.

return_train_score : boolean, optional

If ``False``, the ``cv_results_`` attribute will not include training

scores.

Current default is ``'warn'``, which behaves as ``True`` in addition

to raising a warning when a training score is looked up.

That default will be changed to ``False`` in 0.21.

Computing training scores is used to get insights on how different

parameter settings impact the overfitting/underfitting trade-off.

However computing the scores on the training set can be

computationally

expensive and is not strictly required to select the parameters that

yield the best generalization performance.

Examples

--------

>>> from sklearn import svm, datasets

>>> from sklearn.model_selection import GridSearchCV

>>> iris = datasets.load_iris()

>>> parameters = {'kernel':('linear', 'rbf'), 'C':[1, 10]}

>>> svc = svm.SVC()

>>> clf = GridSearchCV(svc, parameters)

>>> clf.fit(iris.data, iris.target)

... # doctest: +NORMALIZE_WHITESPACE +ELLIPSIS

GridSearchCV(cv=None, error_score=...,

estimator=SVC(C=1.0, cache_size=..., class_weight=..., coef0=...,

decision_function_shape='ovr', degree=..., gamma=...,

kernel='rbf', max_iter=-1, probability=False,

random_state=None, shrinking=True, tol=...,

verbose=False),

fit_params=None, iid=..., n_jobs=1,

param_grid=..., pre_dispatch=..., refit=..., return_train_score=...,

scoring=..., verbose=...)

>>> sorted(clf.cv_results_.keys())

... # doctest: +NORMALIZE_WHITESPACE +ELLIPSIS

['mean_fit_time', 'mean_score_time', 'mean_test_score',...

'mean_train_score', 'param_C', 'param_kernel', 'params',...

'rank_test_score', 'split0_test_score',...

'split0_train_score', 'split1_test_score', 'split1_train_score',...

'split2_test_score', 'split2_train_score',...

'std_fit_time', 'std_score_time', 'std_test_score', 'std_train_score'...]

Attributes

----------

cv_results_ : dict of numpy (masked) ndarrays

A dict with keys as column headers and values as columns, that can be

imported into a pandas ``DataFrame``.

For instance the below given table

+------------+-----------+------------+-----------------+---+---------+

|param_kernel|param_gamma|param_degree|split0_test_score|...

|rank_t...|

+============+===========+============+========

=========+===+=========+

| 'poly' | -- | 2 | 0.8 |...| 2 |

+------------+-----------+------------+-----------------+---+---------+

| 'poly' | -- | 3 | 0.7 |...| 4 |

+------------+-----------+------------+-----------------+---+---------+

| 'rbf' | 0.1 | -- | 0.8 |...| 3 |

+------------+-----------+------------+-----------------+---+---------+

| 'rbf' | 0.2 | -- | 0.9 |...| 1 |

+------------+-----------+------------+-----------------+---+---------+

will be represented by a ``cv_results_`` dict of::

{

'param_kernel': masked_array(data = ['poly', 'poly', 'rbf', 'rbf'],

mask = [False False False False]...)

'param_gamma': masked_array(data = [-- -- 0.1 0.2],

mask = [ True True False False]...),

'param_degree': masked_array(data = [2.0 3.0 -- --],

mask = [False False True True]...),

'split0_test_score' : [0.8, 0.7, 0.8, 0.9],

'split1_test_score' : [0.82, 0.5, 0.7, 0.78],

'mean_test_score' : [0.81, 0.60, 0.75, 0.82],

'std_test_score' : [0.02, 0.01, 0.03, 0.03],

'rank_test_score' : [2, 4, 3, 1],

'split0_train_score' : [0.8, 0.9, 0.7],

'split1_train_score' : [0.82, 0.5, 0.7],

'mean_train_score' : [0.81, 0.7, 0.7],

'std_train_score' : [0.03, 0.03, 0.04],

'mean_fit_time' : [0.73, 0.63, 0.43, 0.49],

'std_fit_time' : [0.01, 0.02, 0.01, 0.01],

'mean_score_time' : [0.007, 0.06, 0.04, 0.04],

'std_score_time' : [0.001, 0.002, 0.003, 0.005],

'params' : [{'kernel': 'poly', 'degree': 2}, ...],

}

NOTE

The key ``'params'`` is used to store a list of parameter

settings dicts for all the parameter candidates.

The ``mean_fit_time``, ``std_fit_time``, ``mean_score_time`` and

``std_score_time`` are all in seconds.

For multi-metric evaluation, the scores for all the scorers are

available in the ``cv_results_`` dict at the keys ending with that

scorer's name (``'_<scorer_name>'``) instead of ``'_score'`` shown

above. ('split0_test_precision', 'mean_train_precision' etc.)

best_estimator_ : estimator or dict

Estimator that was chosen by the search, i.e. estimator

which gave highest score (or smallest loss if specified)

on the left out data. Not available if ``refit=False``.

See ``refit`` parameter for more information on allowed values.

best_score_ : float

Mean cross-validated score of the best_estimator

For multi-metric evaluation, this is present only if ``refit`` is

specified.

best_params_ : dict

Parameter setting that gave the best results on the hold out data.

For multi-metric evaluation, this is present only if ``refit`` is

specified.

best_index_ : int

The index (of the ``cv_results_`` arrays) which corresponds to the best

candidate parameter setting.

The dict at ``search.cv_results_['params'][search.best_index_]`` gives

the parameter setting for the best model, that gives the highest

mean score (``search.best_score_``).

For multi-metric evaluation, this is present only if ``refit`` is

specified.

scorer_ : function or a dict

Scorer function used on the held out data to choose the best

parameters for the model.

For multi-metric evaluation, this attribute holds the validated

``scoring`` dict which maps the scorer key to the scorer callable.

n_splits_ : int

The number of cross-validation splits (folds/iterations).

Notes

------

The parameters selected are those that maximize the score of the left

out

data, unless an explicit score is passed in which case it is used instead.

If `n_jobs` was set to a value higher than one, the data is copied for

each

point in the grid (and not `n_jobs` times). This is done for efficiency

reasons if individual jobs take very little time, but may raise errors if

the dataset is large and not enough memory is available. A

workaround in

this case is to set `pre_dispatch`. Then, the memory is copied only

`pre_dispatch` many times. A reasonable value for `pre_dispatch` is `2 *

n_jobs`.

See Also

---------

:class:`ParameterGrid`:

generates all the combinations of a hyperparameter grid.

:func:`sklearn.model_selection.train_test_split`:

utility function to split the data into a development set usable

for fitting a GridSearchCV instance and an evaluation set for

its final evaluation.

:func:`sklearn.metrics.make_scorer`:

Make a scorer from a performance metric or loss function.

"""

def __init__(self, estimator, param_grid, scoring=None,

fit_params=None,

n_jobs=1, iid=True, refit=True, cv=None, verbose=0,

pre_dispatch='2*n_jobs', error_score='raise',

return_train_score="warn"):

super(GridSearchCV, self).__init__(estimator=estimator,

scoring=scoring, fit_params=fit_params, n_jobs=n_jobs, iid=iid,

refit=refit, cv=cv, verbose=verbose, pre_dispatch=pre_dispatch,

error_score=error_score, return_train_score=return_train_score)

self.param_grid = param_grid

_check_param_grid(param_grid)

def _get_param_iterator(self):

"""Return ParameterGrid instance for the given param_grid"""

return ParameterGrid(self.param_grid)class StratifiedKFold(_BaseKFold):

"""Stratified K-Folds cross-validator

Provides train/test indices to split data in train/test sets.

This cross-validation object is a variation of KFold that returns

stratified folds. The folds are made by preserving the percentage of

samples for each class.

Read more in the :ref:`User Guide <cross_validation>`.

Parameters

----------

n_splits : int, default=3

Number of folds. Must be at least 2.

shuffle : boolean, optional

Whether to shuffle each stratification of the data before splitting

into batches.

random_state : int, RandomState instance or None, optional,

default=None

If int, random_state is the seed used by the random number

generator;

If RandomState instance, random_state is the random number

generator;

If None, the random number generator is the RandomState

instance used

by `np.random`. Used when ``shuffle`` == True.

Examples

--------

>>> from sklearn.model_selection import StratifiedKFold

>>> X = np.array([[1, 2], [3, 4], [1, 2], [3, 4]])

>>> y = np.array([0, 0, 1, 1])

>>> skf = StratifiedKFold(n_splits=2)

>>> skf.get_n_splits(X, y)

2

>>> print(skf) # doctest: +NORMALIZE_WHITESPACE

StratifiedKFold(n_splits=2, random_state=None, shuffle=False)

>>> for train_index, test_index in skf.split(X, y):

... print("TRAIN:", train_index, "TEST:", test_index)

... X_train, X_test = X[train_index], X[test_index]

... y_train, y_test = y[train_index], y[test_index]

TRAIN: [1 3] TEST: [0 2]

TRAIN: [0 2] TEST: [1 3]

Notes

-----

All the folds have size ``trunc(n_samples / n_splits)``, the last one has

the complementary.

See also

--------

RepeatedStratifiedKFold: Repeats Stratified K-Fold n times.

"""

def __init__(self, n_splits=3, shuffle=False, random_state=None):

super(StratifiedKFold, self).__init__(n_splits, shuffle,

random_state)

def _make_test_folds(self, X, y=None):

rng = self.random_state

y = np.asarray(y)

type_of_target_y = type_of_target(y)

allowed_target_types = 'binary', 'multiclass'

if type_of_target_y not in allowed_target_types:

raise ValueError(

'Supported target types are: {}. Got {!r} instead.'.format(

allowed_target_types, type_of_target_y))

y = column_or_1d(y)

n_samples = y.shape[0]

unique_y, y_inversed = np.unique(y, return_inverse=True)

y_counts = np.bincount(y_inversed)

min_groups = np.min(y_counts)

if np.all(self.n_splits > y_counts):

raise ValueError(

"n_splits=%d cannot be greater than the"

" number of members in each class." %

(self.n_splits))

if self.n_splits > min_groups:

warnings.warn(("The least populated class in y has only %d"

" members, which is too few. The minimum"

" number of members in any class cannot"

" be less than n_splits=%d." %

(min_groups, self.n_splits)), Warning) # pre-assign each

sample to a test fold index using individual KFold

# splitting strategies for each class so as to respect the

balance of

# classes

# NOTE: Passing the data corresponding to ith class say X

[y==class_i]

# will break when the data is not 100% stratifiable for all

classes.

# So we pass np.zeroes(max(c, n_splits)) as data to the

KFold

per_cls_cvs = [KFold(self.n_splits, shuffle=self.shuffle,

random_state=rng).split(np.zeros(max(count, self.n_splits))) for count

in y_counts]

test_folds = np.zeros(n_samples, dtype=np.int)

for test_fold_indices, per_cls_splits in enumerate(zip

(*per_cls_cvs)):

for cls, (_, test_split) in zip(unique_y, per_cls_splits):

cls_test_folds = test_folds[y == cls]

# the test split can be too big because we used

# KFold(...).split(X[:max(c, n_splits)]) when data is not 100%

# stratifiable for all the classes

# (we use a warning instead of raising an exception)

# If this is the case, let's trim it:

test_split = test_split[test_split < len(cls_test_folds)]

cls_test_folds[test_split] = test_fold_indices

test_folds[y == cls] = cls_test_folds

return test_folds

def _iter_test_masks(self, X, y=None, groups=None):

test_folds = self._make_test_folds(X, y)

for i in range(self.n_splits):

yield test_folds == i

def split(self, X, y, groups=None):

"""Generate indices to split data into training and test set.

Parameters

----------

X : array-like, shape (n_samples, n_features)

Training data, where n_samples is the number of samples

and n_features is the number of features.

Note that providing ``y`` is sufficient to generate the splits and

hence ``np.zeros(n_samples)`` may be used as a placeholder for

``X`` instead of actual training data.

y : array-like, shape (n_samples,)

The target variable for supervised learning problems.

Stratification is done based on the y labels.

groups : object

Always ignored, exists for compatibility.

Returns

-------

train : ndarray

The training set indices for that split.

test : ndarray

The testing set indices for that split.

Notes

-----

Randomized CV splitters may return different results for each call

of

split. You can make the results identical by setting

``random_state``

to an integer.

"""

y = check_array(y, ensure_2d=False, dtype=None)

return super(StratifiedKFold, self).split(X, y, groups)

相关文章

- destoon修改搜索页面标题方法

- Discuz常见小问题2-如何在数据库搜索指定关键字

- Java实现 LeetCode 235 二叉搜索树的最近公共祖先

- Java实现 LeetCode 99 恢复二叉搜索树

- 使用Vitamio打造自己的Android万能播放器(4)——本地播放(快捷搜索、数据存储)

- 深入理解空间搜索算法 ——数百万数据中的瞬时搜索

- My Account应用里Account主数据搜索的FromDate是如何在后台生成的

- SAP CRM产品主数据无法根据产品描述字段进行搜索的原因

- Atitit 知识搜索 信息检索的方法总结 目录 1. 目录搜索1 1.1. 向下同级搜索1 1.2. 向上目录抽象搜索1 2. hash搜索模式1 2.1. 关键词搜索 主题搜索1 2

- Paip.论语义分析与语义搜索技术.attilax(艾龙)总结

- Elasticsearch 数据搜索篇

- My Account应用里Account主数据搜索的FromDate是如何在后台生成的

- ML之PySpark:基于PySpark框架针对adult人口普查收入数据集结合Pipeline利用LoR/DT/RF算法(网格搜索+交叉验证评估+特征重要性)实现二分类预测(年收入是否超50k)案例

- ML之SVM:利用SVM算法(超参数组合进行单线程网格搜索+3fCrVa)对20类新闻文本数据集进行分类预测、评估

- erlang 小程序:整数序列,搜索和为正的最长子序列

- 2364. 统计坏数对的数目-数学推导转化+快速排序-搜索相同元素集合题解

- 【Android 逆向】修改运行中的 Android 进程的内存数据 ( 使用 IDA 分析要修改的内存特征 | 根据内存特征搜索修改点 | 修改进程内存 )

- 百度举办移动搜索全国巡回沙龙,为移动互联网注入新活力

- lucene4之后的近实时搜索实现

- 【SQL开发实战技巧】系列(三十三):数仓报表场景☞从不固定位置提取字符串的元素以及搜索满足字母在前数字在后等条件的数据

- 力扣-DFS深度优先搜索

- 【LeetCode】79. 单词搜索

- airodump-ng搜索5G频段