[Machine Learning] Learning Curves

2023-09-14 08:59:13

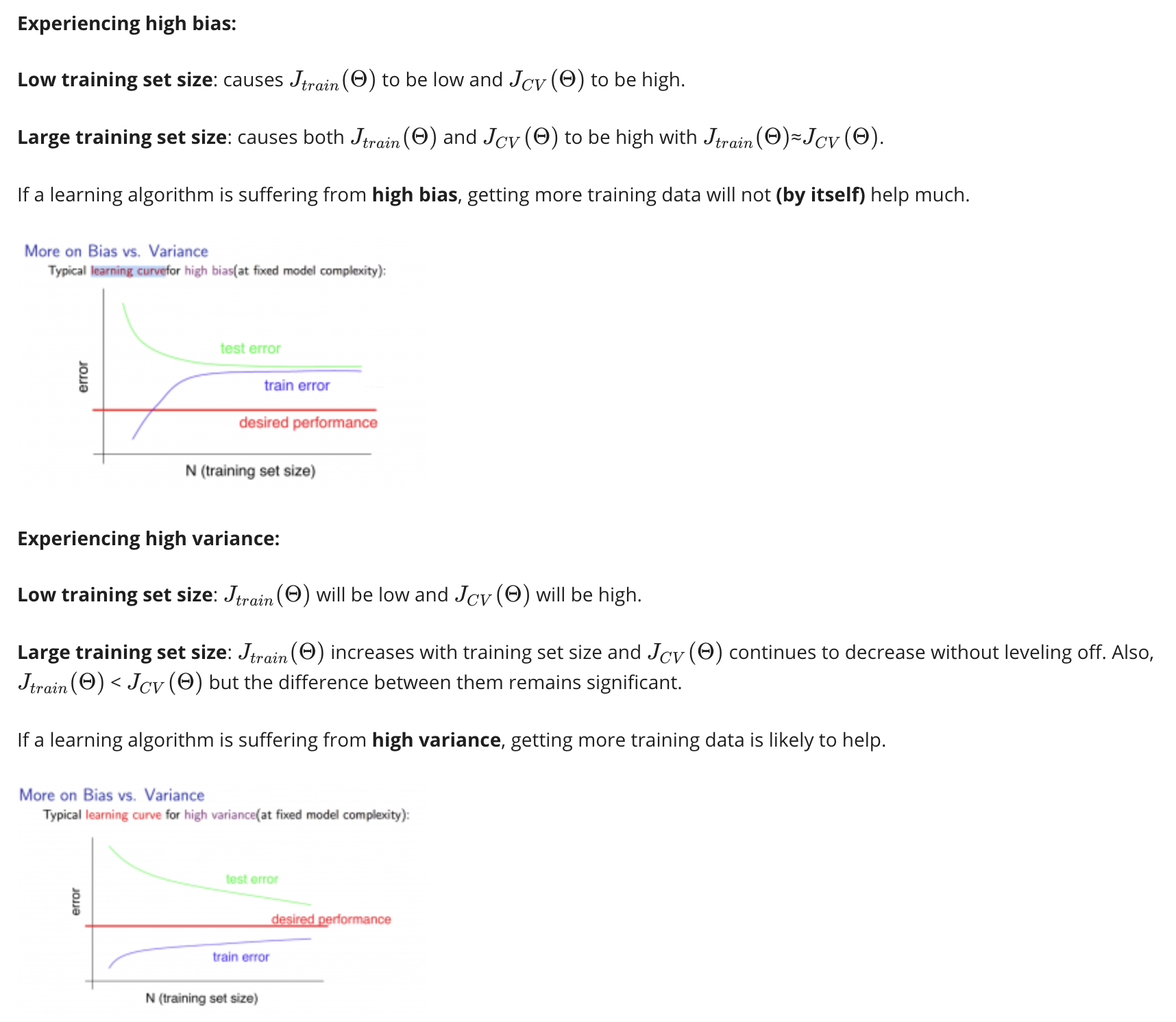

Training an algorithm on a very small number of data points (such as 1, 2 or 3) will easily give 0 training error, because a quadratic curve has three free parameters and we can always find one that passes exactly through that many points. Hence:

- As the training set gets larger, the training error for a quadratic function increases, since a single curve can no longer pass through every point.

- The error value will plateau after a certain m, where m is the training set size.
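
The behaviour described above can be checked numerically. The following is a minimal sketch, not the original author's code: the data (a noisy sine), the helper name `quadratic_training_error`, and the squared-error cost with a 1/(2m) factor are all assumptions chosen for illustration. It fits a quadratic by least squares on the first m points and reports the training error for increasing m.

```python
import numpy as np

def quadratic_training_error(x, y):
    """Fit y ~ t2*x^2 + t1*x + t0 by least squares; return the training error."""
    X = np.vander(x, 3)  # design matrix with columns x^2, x, 1
    # lstsq also handles m < 3 (underdetermined): it returns an exact-fit solution
    theta, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = X @ theta - y
    # Assumed cost convention: J_train = 1/(2m) * sum of squared residuals
    return float(np.sum(residuals ** 2) / (2 * len(x)))

# Hypothetical data set: a noisy sine, which a quadratic cannot fit perfectly
rng = np.random.default_rng(0)
x_all = rng.uniform(-3, 3, size=200)
y_all = np.sin(x_all) + 0.3 * rng.normal(size=200)

sizes = [1, 2, 3, 5, 10, 20, 50, 100, 200]
errors = {m: quadratic_training_error(x_all[:m], y_all[:m]) for m in sizes}

for m, err in errors.items():
    print(f"m={m:3d}  J_train={err:.4f}")
```

With m of 1, 2 or 3 the printed error should be zero up to floating-point rounding. For larger m it becomes clearly positive and then changes little, although on a single random sample the increase need not be perfectly monotonic.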
