Image Stitching with OpenCV Based on GMS

Posted: 2023-09-27 14:25:49

gms_matcher.h

#pragma once

#include <opencv2/opencv.hpp>

#include <vector>

#include <iostream>

#include <ctime>

#include <cmath> // sqrt, floor

#include <cstring> // memcpy

using namespace std;

using namespace cv;

#define THRESH_FACTOR 6

// 8 possible rotations, each stored as a 3 x 3 neighborhood pattern

const int mRotationPatterns[8][9] = {

1,2,3,

4,5,6,

7,8,9,

4,1,2,

7,5,3,

8,9,6,

7,4,1,

8,5,2,

9,6,3,

8,7,4,

9,5,1,

6,3,2,

9,8,7,

6,5,4,

3,2,1,

6,9,8,

3,5,7,

2,1,4,

3,6,9,

2,5,8,

1,4,7,

2,3,6,

1,5,9,

4,7,8

};

// 5 scale levels

const double mScaleRatios[5] = { 1.0, 1.0 / 2, 1.0 / sqrt(2.0), sqrt(2.0), 2.0 };

class gms_matcher

{

public:

// OpenCV keypoints, the corresponding image sizes, and the nearest-neighbor matches

gms_matcher(const vector<KeyPoint>& vkp1, const Size size1, const vector<KeyPoint>& vkp2, const Size size2, const vector<DMatch>& vDMatches)

{

// Input initialize

NormalizePoints(vkp1, size1, mvP1);

NormalizePoints(vkp2, size2, mvP2);

mNumberMatches = vDMatches.size();

ConvertMatches(vDMatches, mvMatches);

// Grid initialize

mGridSizeLeft = Size(20, 20);

mGridNumberLeft = mGridSizeLeft.width * mGridSizeLeft.height;

// Initialize the neighbors of the left grid

mGridNeighborLeft = Mat::zeros(mGridNumberLeft, 9, CV_32SC1);

InitalizeNiehbors(mGridNeighborLeft, mGridSizeLeft);

};

~gms_matcher() {};

private:

// Normalized Points

vector<Point2f> mvP1, mvP2;

// Matches

vector<pair<int, int> > mvMatches;

// Number of Matches

size_t mNumberMatches;

// Grid Size

Size mGridSizeLeft, mGridSizeRight;

int mGridNumberLeft;

int mGridNumberRight;

// x : left grid idx

// y : right grid idx

// value : how many matches from idx_left to idx_right

Mat mMotionStatistics;

// Number of matched points that fall in each left-grid cell

vector<int> mNumberPointsInPerCellLeft;

// Index : grid_idx_left

// Value : grid_idx_right

vector<int> mCellPairs;

// Every match has a cell pair

// first : grid_idx_left

// second : grid_idx_right

vector<pair<int, int> > mvMatchPairs;

// Inlier Mask for output

vector<bool> mvbInlierMask;

// 3 x 3 neighbor indices for every cell of the left and right grids (-1 = outside the grid)

Mat mGridNeighborLeft;

Mat mGridNeighborRight;

public:

// Get Inlier Mask

// Return number of inliers

int GetInlierMask(vector<bool>& vbInliers, bool WithScale = false, bool WithRotation = false);

private:

// Normalize keypoint coordinates to the range [0, 1)

void NormalizePoints(const vector<KeyPoint>& kp, const Size& size, vector<Point2f>& npts) {

const size_t numP = kp.size();

const int width = size.width;

const int height = size.height;

npts.resize(numP);

for (size_t i = 0; i < numP; i++)

{

npts[i].x = kp[i].pt.x / width;

npts[i].y = kp[i].pt.y / height;

}

}

// Convert OpenCV DMatch to Match (pair<int, int>)

void ConvertMatches(const vector<DMatch>& vDMatches, vector<pair<int, int> >& vMatches) {

vMatches.resize(mNumberMatches);

for (size_t i = 0; i < mNumberMatches; i++)

{

vMatches[i] = pair<int, int>(vDMatches[i].queryIdx, vDMatches[i].trainIdx);

}

}

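// Map a normalized point to a left-grid cell index. Type 1 uses the plain grid;

// types 2, 3 and 4 shift the grid by half a cell in x, in y, or in both. Returns -1 if the point falls outside.

int GetGridIndexLeft(const Point2f& pt, int type) {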

int x = 0, y = 0;

if (type == 1) {

x = floor(pt.x * mGridSizeLeft.width);

y = floor(pt.y * mGridSizeLeft.height);

if (y >= mGridSizeLeft.height || x >= mGridSizeLeft.width) {

return -1;

}

}

if (type == 2) {

x = floor(pt.x * mGridSizeLeft.width + 0.5);

y = floor(pt.y * mGridSizeLeft.height);

if (x >= mGridSizeLeft.width || x < 1) {

return -1;

}

}

if (type == 3) {

x = floor(pt.x * mGridSizeLeft.width);

y = floor(pt.y * mGridSizeLeft.height + 0.5);

if (y >= mGridSizeLeft.height || y < 1) {

return -1;

}

}

if (type == 4) {

x = floor(pt.x * mGridSizeLeft.width + 0.5);

y = floor(pt.y * mGridSizeLeft.height + 0.5);

if (y >= mGridSizeLeft.height || y < 1 || x >= mGridSizeLeft.width || x < 1) {

return -1;

}

}

return x + y * mGridSizeLeft.width;

}

int GetGridIndexRight(const Point2f& pt) {

int x = floor(pt.x * mGridSizeRight.width);

int y = floor(pt.y * mGridSizeRight.height);

return x + y * mGridSizeRight.width;

}

// Assign Matches to Cell Pairs

void AssignMatchPairs(int GridType);

// Verify Cell Pairs

void VerifyCellPairs(int RotationType);

// Get the indices of the 3 x 3 neighborhood (9 cells) around idx; cells outside the grid stay -1

vector<int> GetNB9(const int idx, const Size& GridSize) {

vector<int> NB9(9, -1);

int idx_x = idx % GridSize.width;

int idx_y = idx / GridSize.width;

for (int yi = -1; yi <= 1; yi++)

{

for (int xi = -1; xi <= 1; xi++)

{

int idx_xx = idx_x + xi;

int idx_yy = idx_y + yi;

if (idx_xx < 0 || idx_xx >= GridSize.width || idx_yy < 0 || idx_yy >= GridSize.height)

continue;

NB9[xi + 4 + yi * 3] = idx_xx + idx_yy * GridSize.width;

}

}

return NB9;

}

void InitalizeNiehbors(Mat& neighbor, const Size& GridSize) {

for (int i = 0; i < neighbor.rows; i++)

{

vector<int> NB9 = GetNB9(i, GridSize);

int* data = neighbor.ptr<int>(i);

memcpy(data, &NB9[0], sizeof(int) * 9);

}

}

void SetScale(int Scale) {

// Set Scale

mGridSizeRight.width = mGridSizeLeft.width * mScaleRatios[Scale];

mGridSizeRight.height = mGridSizeLeft.height * mScaleRatios[Scale];

mGridNumberRight = mGridSizeRight.width * mGridSizeRight.height;

// Initialize the neighbors of the right grid

mGridNeighborRight = Mat::zeros(mGridNumberRight, 9, CV_32SC1);

InitalizeNiehbors(mGridNeighborRight, mGridSizeRight);

}

// Run

int run(int RotationType);

};

inline int gms_matcher::GetInlierMask(vector<bool>& vbInliers, bool WithScale, bool WithRotation) {

int max_inlier = 0;

if (!WithScale && !WithRotation)

{

SetScale(0);

max_inlier = run(1);

vbInliers = mvbInlierMask;

return max_inlier;

}

if (WithRotation && WithScale)

{

for (int Scale = 0; Scale < 5; Scale++)

{

SetScale(Scale);

for (int RotationType = 1; RotationType <= 8; RotationType++)

{

int num_inlier = run(RotationType);

if (num_inlier > max_inlier)

{

vbInliers = mvbInlierMask;

max_inlier = num_inlier;

}

}

}

return max_inlier;

}

if (WithRotation && !WithScale)

{

SetScale(0);

for (int RotationType = 1; RotationType <= 8; RotationType++)

{

int num_inlier = run(RotationType);

if (num_inlier > max_inlier)

{

vbInliers = mvbInlierMask;

max_inlier = num_inlier;

}

}

return max_inlier;

}

if (!WithRotation && WithScale)

{

for (int Scale = 0; Scale < 5; Scale++)

{

SetScale(Scale);

int num_inlier = run(1);

if (num_inlier > max_inlier)

{

vbInliers = mvbInlierMask;

max_inlier = num_inlier;

}

}

return max_inlier;

}

return max_inlier;

}

inline void gms_matcher::AssignMatchPairs(int GridType) {

for (size_t i = 0; i < mNumberMatches; i++)

{

Point2f& lp = mvP1[mvMatches[i].first];

Point2f& rp = mvP2[mvMatches[i].second];

int lgidx = mvMatchPairs[i].first = GetGridIndexLeft(lp, GridType);

int rgidx = -1;

if (GridType == 1)

{

rgidx = mvMatchPairs[i].second = GetGridIndexRight(rp);

}

else

{

rgidx = mvMatchPairs[i].second;

}

if (lgidx < 0 || rgidx < 0) continue;

mMotionStatistics.at<int>(lgidx, rgidx)++;

mNumberPointsInPerCellLeft[lgidx]++;

}

}

inline void gms_matcher::VerifyCellPairs(int RotationType) {

const int* CurrentRP = mRotationPatterns[RotationType - 1];

for (int i = 0; i < mGridNumberLeft; i++)

{

if (sum(mMotionStatistics.row(i))[0] == 0)

{

mCellPairs[i] = -1;

continue;

}

int max_number = 0;

for (int j = 0; j < mGridNumberRight; j++)

{

int* value = mMotionStatistics.ptr<int>(i);

if (value[j] > max_number)

{

mCellPairs[i] = j;

max_number = value[j];

}

}

int idx_grid_rt = mCellPairs[i];

const int* NB9_lt = mGridNeighborLeft.ptr<int>(i);

const int* NB9_rt = mGridNeighborRight.ptr<int>(idx_grid_rt);

int score = 0;

double thresh = 0;

int numpair = 0;

for (size_t j = 0; j < 9; j++)

{

int ll = NB9_lt[j];

int rr = NB9_rt[CurrentRP[j] - 1];

if (ll == -1 || rr == -1) continue;

score += mMotionStatistics.at<int>(ll, rr);

thresh += mNumberPointsInPerCellLeft[ll];

numpair++;

}

thresh = THRESH_FACTOR * sqrt(thresh / numpair);

if (score < thresh)

mCellPairs[i] = -2;

}

}

inline int gms_matcher::run(int RotationType) {

mvbInlierMask.assign(mNumberMatches, false);

// Initialize motion statistics

mMotionStatistics = Mat::zeros(mGridNumberLeft, mGridNumberRight, CV_32SC1);

mvMatchPairs.assign(mNumberMatches, pair<int, int>(0, 0));

for (int GridType = 1; GridType <= 4; GridType++)

{

// initialize

mMotionStatistics.setTo(0);

mCellPairs.assign(mGridNumberLeft, -1);

mNumberPointsInPerCellLeft.assign(mGridNumberLeft, 0);

AssignMatchPairs(GridType);

VerifyCellPairs(RotationType);

// Mark inliers

for (size_t i = 0; i < mNumberMatches; i++)

{

if (mvMatchPairs[i].first >= 0) {

if (mCellPairs[mvMatchPairs[i].first] == mvMatchPairs[i].second)

{

mvbInlierMask[i] = true;

}

}

}

}

int num_inlier = sum(mvbInlierMask)[0];

return num_inlier;

}
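The acceptance test at the end of VerifyCellPairs can be looked at in isolation: a cell pair is kept only if the match support summed over its 3 x 3 neighborhood reaches THRESH_FACTOR times the square root of the average number of points per neighboring cell. The following is a minimal, OpenCV-free sketch of that rule, not the author's code. The name `passesGmsThreshold`, its parameters, and the convention that a support value of -1 marks a neighbor outside the grid are all assumptions made for illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper: decides whether one cell pair survives the GMS threshold.
// support[j]       : matches between the j-th left neighbor and its paired right
//                    neighbor, or -1 if that neighbor lies outside the grid.
// pointsPerCell[j] : number of matched points in the j-th left neighbor cell.
// factor           : plays the role of THRESH_FACTOR (6 in the header above).
bool passesGmsThreshold(const std::vector<int>& support,
                        const std::vector<int>& pointsPerCell,
                        double factor = 6.0) {
    assert(support.size() == pointsPerCell.size());
    int score = 0;       // summed support over valid neighbors
    double total = 0.0;  // summed point counts over valid neighbors
    int numPair = 0;     // number of valid neighbors
    for (std::size_t j = 0; j < support.size(); ++j) {
        if (support[j] < 0) continue;  // skip neighbors outside the grid
        score += support[j];
        total += pointsPerCell[j];
        ++numPair;
    }
    if (numPair == 0) return false;  // nothing to vote with
    const double thresh = factor * std::sqrt(total / numPair);
    return score >= thresh;  // the header rejects the pair when score < thresh
}
```

With nine neighbors holding 9 points each, the threshold is 6 * sqrt(9) = 18, so a pair needs at least 18 supporting matches across the neighborhood to be kept.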

stitch.cpp

#include "gms_matcher.h"

//#define USE_GPU

#ifdef USE_GPU

#include <opencv2/cudafeatures2d.hpp>

using cuda::GpuMat;

#endif

void GmsMatch(Mat& img1, Mat& img2);

//Mat DrawInlier(Mat& src1, Mat& src2, vector<KeyPoint>& kpt1, vector<KeyPoint>& kpt2, vector<DMatch>& inlier, int type);

Mat DrawInlier(Mat& src1, Mat& src2, vector<KeyPoint>& kpt1, vector<KeyPoint>& kpt2, vector<DMatch>& inlier, int type, vector<Point2f>& kpLeft, vector<Point2f>& kpRight);

void CalcCorners(const Mat& H, const Mat& src);

Mat stitchImages(vector<Point2f>& kpLeft, vector<Point2f>& kpRight, Mat& img_left, Mat& img_right);

typedef struct

{

Point2f left_top;

Point2f left_bottom;

Point2f right_top;

Point2f right_bottom;

}four_corners_t;

four_corners_t corners;

void runImagePair() {

Mat img_left = imread("../data/flowerL.jpg");

Mat img_right = imread("../data/flowerR.jpg");

//Mat img_left = imread("../data/SL.jpg");

//Mat img_right = imread("../data/SR.jpg");

//Mat img_left = imread("../data/Image_02.jpg");

//Mat img_right = imread("../data/Image_01.jpg");

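if (img_left.empty() || img_right.empty()) {

cerr << "Failed to load input images." << endl;

return;

}

GmsMatch(img_left, img_right);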

}

int main()

{

#ifdef USE_GPU

int flag = cuda::getCudaEnabledDeviceCount();

if (flag != 0) { cuda::setDevice(0); }

#endif // USE_GPU

runImagePair();

return 0;

}

void GmsMatch(Mat& img_left, Mat& img_right) {

vector<KeyPoint> kp1, kp2;

Mat d1, d2;

vector<DMatch> matches_all, matches_gms;

Ptr<ORB> orb = ORB::create(10000);

orb->setFastThreshold(0);

orb->detectAndCompute(img_left, Mat(), kp1, d1);

orb->detectAndCompute(img_right, Mat(), kp2, d2);

#ifdef USE_GPU

GpuMat gd1(d1), gd2(d2);

Ptr<cuda::DescriptorMatcher> matcher = cv::cuda::DescriptorMatcher::createBFMatcher(NORM_HAMMING);

matcher->match(gd1, gd2, matches_all);

#else

BFMatcher matcher(NORM_HAMMING);

matcher.match(d1, d2, matches_all);

#endif

// GMS filter

std::vector<bool> vbInliers;

gms_matcher gms(kp1, img_left.size(), kp2, img_right.size(), matches_all);

int num_inliers = gms.GetInlierMask(vbInliers, false, false);

cout << "Get total " << num_inliers << " matches." << endl;

// collect matches

for (size_t i = 0; i < vbInliers.size(); ++i)

{

if (vbInliers[i] == true)

{

matches_gms.push_back(matches_all[i]);

}

}

// draw matching

vector<Point2f> kpLeft, kpRight;

//Mat show = DrawInlier(img1, img2, kp1, kp2, matches_gms, 1);

Mat show = DrawInlier(img_left, img_right, kp1, kp2, matches_gms, 1, kpLeft, kpRight);

//namedWindow("show", 2);

imshow("matchingshow", show);

// stitch images

Mat stitch_image = stitchImages(kpLeft, kpRight, img_left, img_right);

namedWindow("stitch_image", 2);

imshow("stitch_image", stitch_image);

waitKey();

}

Mat stitchImages(vector<Point2f>& kpLeft, vector<Point2f>& kpRight, Mat& img_left, Mat& img_right)

{

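// Estimate the 3x3 homography that maps the right image into the left image's coordinate frame

Mat homo = findHomography(kpRight, kpLeft, RANSAC);

cout << "Homography matrix:\n" << homo << endl << endl; // print the homography

// Compute the four corner coordinates of the warped (registered) image

CalcCorners(homo, img_right);

// Warp the right image into the left image's frame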

Mat imageTransform1, imageTransform2;

warpPerspective(img_right, imageTransform1, homo, Size(MAX(corners.right_top.x, corners.right_bottom.x), img_left.rows));

//namedWindow("transformedImage", 2);

//imshow("transformedImage", imageTransform1); // result of applying the perspective transform directly

waitKey();

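// Create the stitched canvas; its size has to be computed in advance

int dst_width = MAX(imageTransform1.cols, img_left.cols); // rightmost warped x-coordinate, but never narrower than the left image so the copy below stays in bounds

//int dst_width = imageLeft.cols + imageRight.cols; // earlier alternative: sum of both image widths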

int dst_height = img_left.rows;

Mat dst_stitch(dst_height, dst_width, CV_8UC3);

dst_stitch.setTo(0);

imageTransform1.copyTo(dst_stitch(Rect(0, 0, imageTransform1.cols, imageTransform1.rows)));

//namedWindow("transform1", 2);

//imshow("transform1", dst_stitch);

//waitKey();

img_left.copyTo(dst_stitch(Rect(0, 0, img_left.cols, img_left.rows)));

return dst_stitch;

}

Mat DrawInlier(Mat& src1, Mat& src2, vector<KeyPoint>& kpt1, vector<KeyPoint>& kpt2, vector<DMatch>& inlier, int type, vector<Point2f>& kpLeft, vector<Point2f>& kpRight) {

const int height = max(src1.rows, src2.rows);

const int width = src1.cols + src2.cols;

Mat output(height, width, CV_8UC3, Scalar(0, 0, 0));

src1.copyTo(output(Rect(0, 0, src1.cols, src1.rows)));

src2.copyTo(output(Rect(src1.cols, 0, src2.cols, src2.rows)));

if (type == 1)

{

for (size_t i = 0; i < inlier.size(); i++)

{

Point2f left = kpt1[inlier[i].queryIdx].pt;

Point2f right = (kpt2[inlier[i].trainIdx].pt + Point2f((float)src1.cols, 0.f));

kpLeft.push_back(left);

kpRight.push_back(kpt2[inlier[i].trainIdx].pt);

line(output, left, right, Scalar(0, 255, 255));

}

}

else if (type == 2)

{

for (size_t i = 0; i < inlier.size(); i++)

{

Point2f left = kpt1[inlier[i].queryIdx].pt;

Point2f right = (kpt2[inlier[i].trainIdx].pt + Point2f((float)src1.cols, 0.f));

kpLeft.push_back(left);

kpRight.push_back(kpt2[inlier[i].trainIdx].pt);

line(output, left, right, Scalar(255, 0, 0));

}

for (size_t i = 0; i < inlier.size(); i++)

{

Point2f left = kpt1[inlier[i].queryIdx].pt;

Point2f right = (kpt2[inlier[i].trainIdx].pt + Point2f((float)src1.cols, 0.f));

// The point pairs were already collected in the loop above; this loop only draws.

circle(output, left, 1, Scalar(0, 255, 255), 2);

circle(output, right, 1, Scalar(0, 255, 0), 2);

}

}

return output;

}

void CalcCorners(const Mat& H, const Mat& src)

{

// Project one corner (x, y, 1) of the source image through H and divide by the

// homogeneous coordinate. H is expected to be CV_64F, as returned by findHomography.

auto project = [&H](double x, double y) -> Point2f {

Mat p = (Mat_<double>(3, 1) << x, y, 1.0); // column vector

Mat v = H * p; // transformed homogeneous coordinates

return Point2f((float)(v.at<double>(0) / v.at<double>(2)), (float)(v.at<double>(1) / v.at<double>(2)));

};

corners.left_top = project(0, 0); // top-left (0, 0, 1)

corners.left_bottom = project(0, src.rows); // bottom-left (0, src.rows, 1)

corners.right_top = project(src.cols, 0); // top-right (src.cols, 0, 1)

corners.right_bottom = project(src.cols, src.rows); // bottom-right (src.cols, src.rows, 1)

}
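The canvas width in stitchImages comes from projecting the right image's two right-hand corners through the homography, which is what CalcCorners prepares. The following is a minimal sketch of that arithmetic, written with a plain struct instead of cv::Mat so it runs without OpenCV. The names `Homography`, `Pt`, `projectPoint` and `canvasWidth` are hypothetical, and clamping the width to at least the left image's width is an added safety assumption rather than something the listing above does.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical stand-ins for cv::Mat (3x3, double) and cv::Point2d.
struct Homography { double m[3][3]; };
struct Pt { double x, y; };

// Apply H to the homogeneous point (x, y, 1) and divide by the third coordinate.
Pt projectPoint(const Homography& H, double x, double y) {
    const double u = H.m[0][0] * x + H.m[0][1] * y + H.m[0][2];
    const double v = H.m[1][0] * x + H.m[1][1] * y + H.m[1][2];
    const double w = H.m[2][0] * x + H.m[2][1] * y + H.m[2][2];
    return { u / w, v / w };
}

// Width of the stitched canvas: the rightmost projected corner of the right
// image, but never narrower than the left image that is pasted on top of it.
int canvasWidth(const Homography& H, int srcCols, int srcRows, int leftCols) {
    const Pt rightTop = projectPoint(H, srcCols, 0);
    const Pt rightBottom = projectPoint(H, srcCols, srcRows);
    const int warped = static_cast<int>(std::ceil(std::max(rightTop.x, rightBottom.x)));
    return std::max(warped, leftCols);
}
```

For a pure translation of 100 pixels in x, a 200-pixel-wide right image ends at x = 300, so the canvas is 300 pixels wide as long as the left image is no wider than that.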

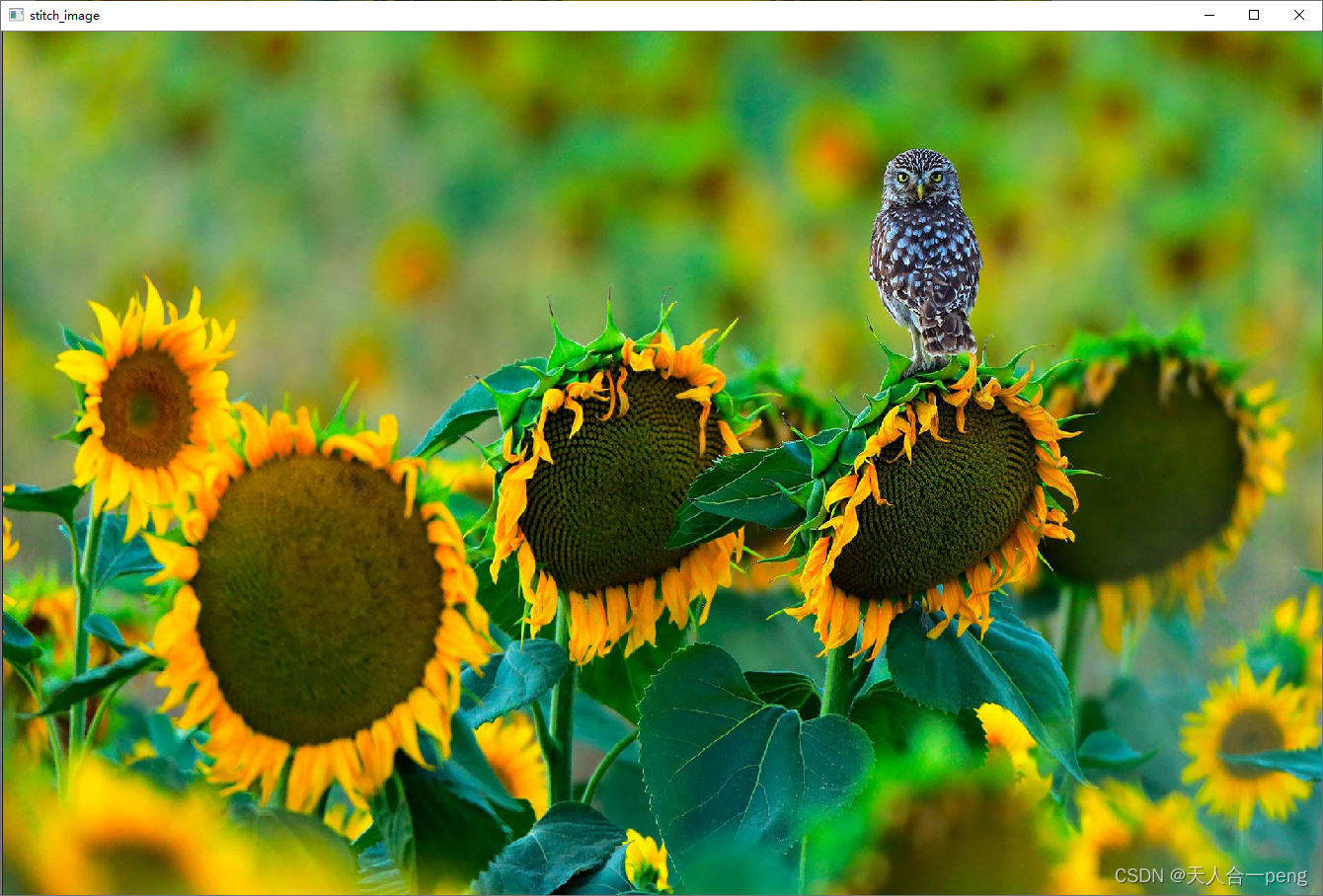

GMS feature-point matching

Matching result

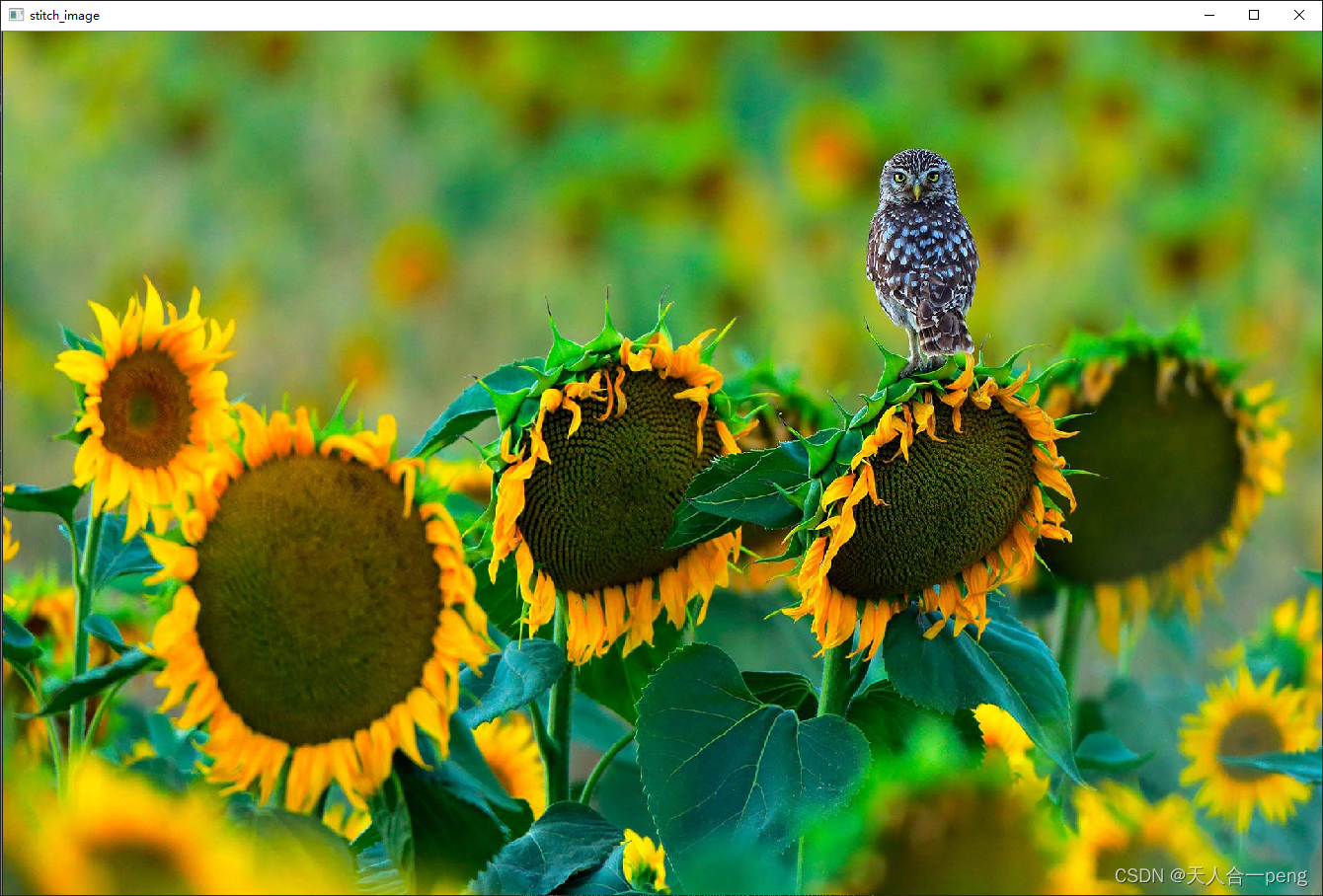

Processing only the region that may overlap

stitch.cpp

#include "gms_matcher.h"

//#define USE_GPU

#ifdef USE_GPU

#include <opencv2/cudafeatures2d.hpp>

using cuda::GpuMat;

#endif

void GmsMatch(Mat& img1, Mat& img2, Mat& rect_l, Mat& rect_r);

void GmsMatch(Mat& img1, Mat& img2);

//Mat DrawInlier(Mat& src1, Mat& src2, vector<KeyPoint>& kpt1, vector<KeyPoint>& kpt2, vector<DMatch>& inlier, int type);

Mat DrawInlier(Mat& src1, Mat& src2, vector<KeyPoint>& kpt1, vector<KeyPoint>& kpt2, vector<DMatch>& inlier, int type, vector<Point2f>& kpLeft, vector<Point2f>& kpRight);

void CalcCorners(const Mat& H, const Mat& src);

Mat stitchImages(vector<Point2f>& kpLeft, vector<Point2f>& kpRight, Mat& img_left, Mat& img_right);

typedef struct

{

Point2f left_top;

Point2f left_bottom;

Point2f right_top;

Point2f right_bottom;

}four_corners_t;

four_corners_t corners;

void runImagePair() {

Mat img_left = imread("../data/flowerL.jpg");

Mat img_right = imread("../data/flowerR.jpg");

//Mat img_left = imread("../data/SL.jpg");

//Mat img_right = imread("../data/SR.jpg");

//Mat img_left = imread("../data/Image_02.jpg");

//Mat img_right = imread("../data/Image_01.jpg");

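if (img_left.empty() || img_right.empty()) {

cerr << "Failed to load input images." << endl;

return;

}

// Extract and match features only in the region that may overlap; here roughly 1/3 of each image overlaps at the seam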

Rect rect_right = Rect(0, 0, img_right.cols / 3, img_right.rows);

Rect rect_left = Rect(2 * img_left.cols / 3, 0, (img_left.cols / 3) - 1, img_left.rows);

Mat image_r_rect = img_right(Rect(rect_right));

Mat image_l_rect = img_left(Rect(rect_left));

GmsMatch(img_left, img_right, image_l_rect, image_r_rect);

}

int main()

{

#ifdef USE_GPU

int flag = cuda::getCudaEnabledDeviceCount();

if (flag != 0) { cuda::setDevice(0); }

#endif // USE_GPU

runImagePair();

return 0;

}

void GmsMatch(Mat& img_left, Mat& img_right, Mat& rect_l, Mat& rect_r) {

vector<KeyPoint> kp1, kp2;

Mat d1, d2;

vector<DMatch> matches_all, matches_gms;

Ptr<ORB> orb = ORB::create(10000);

orb->setFastThreshold(0);

orb->detectAndCompute(rect_l, Mat(), kp1, d1);

orb->detectAndCompute(rect_r, Mat(), kp2, d2);

#ifdef USE_GPU

GpuMat gd1(d1), gd2(d2);

Ptr<cuda::DescriptorMatcher> matcher = cv::cuda::DescriptorMatcher::createBFMatcher(NORM_HAMMING);

matcher->match(gd1, gd2, matches_all);

#else

BFMatcher matcher(NORM_HAMMING);

matcher.match(d1, d2, matches_all);

#endif

// GMS filter

std::vector<bool> vbInliers;

gms_matcher gms(kp1, rect_l.size(), kp2, rect_r.size(), matches_all);

int num_inliers = gms.GetInlierMask(vbInliers, false, false);

cout << "Get total " << num_inliers << " matches." << endl;

// collect matches

for (size_t i = 0; i < vbInliers.size(); ++i)

{

if (vbInliers[i] == true)

{

matches_gms.push_back(matches_all[i]);

}

}

// draw matching

vector<Point2f> kpLeft, kpRight;

//Mat show = DrawInlier(img1, img2, kp1, kp2, matches_gms, 1);

Mat show = DrawInlier(rect_l, rect_r, kp1, kp2, matches_gms, 1, kpLeft, kpRight);

//namedWindow("show", 2);

imshow("matchingshow", show);

waitKey();

// stitch images

Mat stitch_image = stitchImages(kpLeft, kpRight, img_left, img_right);

namedWindow("stitch_image", 2);

imshow("stitch_image", stitch_image);

waitKey();

}

void GmsMatch(Mat& img_left, Mat& img_right) {

vector<KeyPoint> kp1, kp2;

Mat d1, d2;

vector<DMatch> matches_all, matches_gms;

Ptr<ORB> orb = ORB::create(10000);

orb->setFastThreshold(0);

orb->detectAndCompute(img_left, Mat(), kp1, d1);

orb->detectAndCompute(img_right, Mat(), kp2, d2);

#ifdef USE_GPU

GpuMat gd1(d1), gd2(d2);

Ptr<cuda::DescriptorMatcher> matcher = cv::cuda::DescriptorMatcher::createBFMatcher(NORM_HAMMING);

matcher->match(gd1, gd2, matches_all);

#else

BFMatcher matcher(NORM_HAMMING);

matcher.match(d1, d2, matches_all);

#endif

// GMS filter

std::vector<bool> vbInliers;

gms_matcher gms(kp1, img_left.size(), kp2, img_right.size(), matches_all);

int num_inliers = gms.GetInlierMask(vbInliers, false, false);

cout << "Get total " << num_inliers << " matches." << endl;

// collect matches

for (size_t i = 0; i < vbInliers.size(); ++i)

{

if (vbInliers[i] == true)

{

matches_gms.push_back(matches_all[i]);

}

}

// draw matching

vector<Point2f> kpLeft, kpRight;

//Mat show = DrawInlier(img1, img2, kp1, kp2, matches_gms, 1);

Mat show = DrawInlier(img_left, img_right, kp1, kp2, matches_gms, 1, kpLeft, kpRight);

//namedWindow("show", 2);

imshow("matchingshow", show);

// stitch images

Mat stitch_image = stitchImages(kpLeft, kpRight, img_left, img_right);

namedWindow("stitch_image", 2);

imshow("stitch_image", stitch_image);

waitKey();

}

Mat stitchImages(vector<Point2f>& kpLeft, vector<Point2f>& kpRight, Mat& img_left, Mat& img_right)

{

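// Map the left keypoints from ROI coordinates back to full-image coordinates.

// The left ROI starts at x = 2 * img_left.cols / 3; the right ROI starts at (0, 0), so kpRight needs no offset.

for (size_t i = 0; i < kpLeft.size(); ++i)

{

kpLeft[i].x += 2 * img_left.cols / 3;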

}

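// Estimate the 3x3 homography that maps the right image into the left image's coordinate frame

Mat homo = findHomography(kpRight, kpLeft, RANSAC);

cout << "Homography matrix:\n" << homo << endl << endl; // print the homography

// Compute the four corner coordinates of the warped (registered) image

CalcCorners(homo, img_right);

// Warp the right image into the left image's frame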

Mat imageTransform1, imageTransform2;

warpPerspective(img_right, imageTransform1, homo, Size(MAX(corners.right_top.x, corners.right_bottom.x), img_left.rows));

//namedWindow("transformedImage", 2);

//imshow("transformedImage", imageTransform1); // result of applying the perspective transform directly

waitKey();

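// Create the stitched canvas; its size has to be computed in advance

int dst_width = MAX(imageTransform1.cols, img_left.cols); // rightmost warped x-coordinate, but never narrower than the left image so the copy below stays in bounds

//int dst_width = imageLeft.cols + imageRight.cols; // earlier alternative: sum of both image widths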

int dst_height = img_left.rows;

Mat dst_stitch(dst_height, dst_width, CV_8UC3);

dst_stitch.setTo(0);

imageTransform1.copyTo(dst_stitch(Rect(0, 0, imageTransform1.cols, imageTransform1.rows)));

//namedWindow("transform1", 2);

//imshow("transform1", dst_stitch);

//waitKey();

img_left.copyTo(dst_stitch(Rect(0, 0, img_left.cols, img_left.rows)));

return dst_stitch;

}

Mat DrawInlier(Mat& src1, Mat& src2, vector<KeyPoint>& kpt1, vector<KeyPoint>& kpt2, vector<DMatch>& inlier, int type, vector<Point2f>& kpLeft, vector<Point2f>& kpRight) {

const int height = max(src1.rows, src2.rows);

const int width = src1.cols + src2.cols;

Mat output(height, width, CV_8UC3, Scalar(0, 0, 0));

src1.copyTo(output(Rect(0, 0, src1.cols, src1.rows)));

src2.copyTo(output(Rect(src1.cols, 0, src2.cols, src2.rows)));

if (type == 1)

{

for (size_t i = 0; i < inlier.size(); i++)

{

Point2f left = kpt1[inlier[i].queryIdx].pt;

Point2f right = (kpt2[inlier[i].trainIdx].pt + Point2f((float)src1.cols, 0.f));

kpLeft.push_back(left);

kpRight.push_back(kpt2[inlier[i].trainIdx].pt);

line(output, left, right, Scalar(0, 255, 255));

}

}

else if (type == 2)

{

for (size_t i = 0; i < inlier.size(); i++)

{

Point2f left = kpt1[inlier[i].queryIdx].pt;

Point2f right = (kpt2[inlier[i].trainIdx].pt + Point2f((float)src1.cols, 0.f));

kpLeft.push_back(left);

kpRight.push_back(kpt2[inlier[i].trainIdx].pt);

line(output, left, right, Scalar(255, 0, 0));

}

for (size_t i = 0; i < inlier.size(); i++)

{

Point2f left = kpt1[inlier[i].queryIdx].pt;

Point2f right = (kpt2[inlier[i].trainIdx].pt + Point2f((float)src1.cols, 0.f));

// The point pairs were already collected in the loop above; this loop only draws.

circle(output, left, 1, Scalar(0, 255, 255), 2);

circle(output, right, 1, Scalar(0, 255, 0), 2);

}

}

return output;

}

void CalcCorners(const Mat& H, const Mat& src)

{

// Project one corner (x, y, 1) of the source image through H and divide by the

// homogeneous coordinate. H is expected to be CV_64F, as returned by findHomography.

auto project = [&H](double x, double y) -> Point2f {

Mat p = (Mat_<double>(3, 1) << x, y, 1.0); // column vector

Mat v = H * p; // transformed homogeneous coordinates

return Point2f((float)(v.at<double>(0) / v.at<double>(2)), (float)(v.at<double>(1) / v.at<double>(2)));

};

corners.left_top = project(0, 0); // top-left (0, 0, 1)

corners.left_bottom = project(0, src.rows); // bottom-left (0, src.rows, 1)

corners.right_top = project(src.cols, 0); // top-right (src.cols, 0, 1)

corners.right_bottom = project(src.cols, src.rows); // bottom-right (src.cols, src.rows, 1)

}
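The second version detects keypoints inside cropped regions, so their coordinates are relative to each crop and have to be shifted back before the homography is estimated. The following is a minimal, OpenCV-free sketch of that bookkeeping under the same one-third overlap assumption used in runImagePair. The types `Roi` and `Pt2f` and the functions `leftImageRoi`, `rightImageRoi` and `roiToImage` are hypothetical names introduced only for illustration.

```cpp
#include <vector>

// Hypothetical stand-ins for cv::Rect and cv::Point2f.
struct Roi { int x, y, width, height; };
struct Pt2f { float x, y; };

// Right third of the left image (same arithmetic as rect_left above).
Roi leftImageRoi(int cols, int rows) {
    return { 2 * cols / 3, 0, cols / 3 - 1, rows };
}

// Left third of the right image (same arithmetic as rect_right above).
Roi rightImageRoi(int cols, int rows) {
    return { 0, 0, cols / 3, rows };
}

// Shift points detected inside a crop back into full-image coordinates.
void roiToImage(std::vector<Pt2f>& pts, const Roi& roi) {
    for (Pt2f& p : pts) {
        p.x += static_cast<float>(roi.x);
        p.y += static_cast<float>(roi.y);
    }
}
```

Because the right image's crop starts at (0, 0), only the left keypoints actually move, which matches the loop at the top of stitchImages in this version. Passing the Roi itself rather than recomputing `2 * cols / 3` keeps the offset tied to whatever crop was really used.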
