Python 下载 图片、音乐、视频 和 断点续传

Python3 使用 requests 模块显示下载进度: http://blog.csdn.net/supercooly/article/details/51046561

python编写断点续传下载软件:

https://www.leavesongs.com/PYTHON/resume-download-from-break-point-tool-by-python.html

Python实现下载界面(带进度条,断点续传,多线程多任务下载等):https://blog.51cto.com/eddy72/2106091

视频下载以及断点续传( 使用 aiohttp 并发 ):https://www.cnblogs.com/baili-luoyun/p/10507608.html

关键字:python编写断点续传下载软件:

Python 下载文件的三种方法

当然你也可以利用 ftplib 从 ftp 站点下载文件。此外 Python 还提供了另外一种方法 requests。

下面来看看三种方法是如何来下载文件的:

方法 1 : ( 使用 urlopen() 方法 )

urlopen(url[, data[, proxies]]) :创建一个表示远程 url 的类文件对象,然后像本地文件一样操作这个类文件对象来获取远程数据。

- url 表示远程数据的路径,一般是网址;

- data 表示以 post 方式提交到 url 的数据(提交数据的两种方式:post 与 get )

- proxies 用于设置代理。

urlopen 返回 一个类文件对象,它提供了如下方法:

- read() , readline() , readlines() , fileno() , close() :这些方法的使用方式与文件对象完全一样;

- info():返回一个 httplib.HTTPMessage 对象,表示远程服务器返回的头信息

- getcode():返回 Http 状态码。如果是 http 请求,200 表示请求成功完成,404 表示网址未找到;

- geturl():返回请求的 url;

示例代码 1:

# -*- coding: utf-8 -*-

from urllib.request import urlopen

response = urlopen('https://www.baidu.com/')

print(response.info()) # get header information from remote server

print(response.getcode()) # get status code

print(response.geturl()) # get request url

# get all content on url page

html_byte = urlopen('https://www.baidu.com/').read()

html_str = html_byte.decode('utf-8')

print(html_str)

img_url = 'https://img9.doubanio.com/view/celebrity/s_ratio_celebrity/public/p28424.webp'

response = urlopen(img_url)

with open('./img.jpg', 'wb') as f:

f.write(response.read())

pass示例代码 2:

# -*- coding: utf-8 -*-

import urllib.request

import urllib.parse

import json

"""

利用有道翻译进行在线翻译

"""

def translate(word=None):

# 目标URL

target_url = "http://fanyi.youdao.com/translate?smartresult=dict&smartresult=rule&smartresult=ugc&sessionFrom=null"

# 用户自定义表单,word 表示的是用户要翻译的内容。这里使用的是dict类型,也可以使用元组列表(已经试过的)。

data = dict()

data['type'] = 'AUTO'

data['i'] = words

data['doctype'] = 'json'

data['xmlVersion'] = '1.8'

data['keyfrom'] = 'fanyi.web'

data['ue'] = 'UTF-8'

data['action'] = 'FY_BY_CLICKBUTTON'

data['typoResult'] = 'true'

# 将自定义 data 转换成标准格式

data = urllib.parse.urlencode(data).encode('utf-8')

# 发送用户请求

html = urllib.request.urlopen(target_url, data)

# 读取并解码内容

rst = html.read().decode("utf-8")

rst_dict = json.loads(rst)

return rst_dict['translateResult'][0][0]['tgt']

pass

if __name__ == "__main__":

print("输入字母q表示退出")

while True:

words = input("请输入要查询的单词或句子:\n")

if words == 'q':

break

result = translate(words)

print(f"翻译结果是:{result}")

方法 2 : ( 使用 urlretrieve 方法 )

urlretrieve() 方法直接将远程数据下载到本地。

urlretrieve(url, filename=None, reporthook=None, data=None)

- url:下载链接地址

- filename:指定了保存本地路径(如果参数未指定,urllib会生成一个临时文件保存数据。)

- reporthook:一个回调函数,当连接上服务器、以及相应的数据块传输完毕时会触发该回调,可以利用这个回调函数来显示当前的下载进度。

- data:指 post 到服务器的数据,该方法返回一个包含两个元素的(filename, headers) 元组,filename 表示保存到本地的路径,header 表示服务器的响应头

- header:表示服务器的响应头。

示例代码 1:下载并保存图片

# -*- coding: utf-8 -*-

from urllib.request import urlretrieve

print("downloading with urllib")

img_url = 'https://img9.doubanio.com/view/celebrity/s_ratio_celebrity/public/p28424.webp'

urlretrieve(img_url, "./img.jpg")示例代码 2:将 baidu 的 html 抓取到本地,保存在 ''./baidu.html" 文件中,同时显示下载的进度。

# -*- coding: utf-8 -*-

import os

from urllib.request import urlretrieve

def loading(block_num, block_size, total_size):

"""

回调函数: 数据传输时自动调用

:param block_num: 已经传输的数据块数目

:param block_size: 每个数据块字节

:param total_size: 总字节

:return:

"""

percent = int(100 * block_num * block_size / total_size)

if percent > 100:

percent = 100

print("正在下载>>>{}%".format(percent))

import time

time.sleep(0.5)

if __name__ == '__main__':

url = 'http://www.baidu.com'

current_path = os.path.abspath('.')

work_path = os.path.join(current_path, 'baidu.html')

urlretrieve(url, work_path, loading)

pass

示例代码 3:爬取百度贴吧一些小图片

# -*- coding: utf-8 -*-

import re

from urllib.request import urlretrieve, urlopen

def download_img(html=None):

reg_str = re.compile(r'img.*?src="(.*?)"')

img_url_list = re.findall(reg_str, html)

index = 0

for img_url in img_url_list:

if 'tiebapic' in img_url:

index += 1

print(img_url)

urlretrieve(img_url, f'./{index}.jpg')

if __name__ == '__main__':

# input_url = input('input url:')

input_url = 'https://tieba.baidu.com/p/6679682935'

response = urlopen(input_url)

html_str = response.read().decode('utf-8')

download_img(html_str)

方法 3 : ( 使用 requests 包 )

Python requests 库 的 用法:Python 的 requests 库的用法_freeking101的博客-CSDN博客

# -*- coding: utf-8 -*-

import requests

def download_img():

print("downloading with requests")

# test_url = 'http://www.pythontab.com/test/demo.zip'

# r = requests.get(test_url)

# with open("./demo.zip", "wb") as ff:

# ff.write(r.content)

img_url = 'https://img9.doubanio.com/view/celebrity/s_ratio_celebrity/public/p28424.webp'

r = requests.get(img_url)

with open("./img.jpg", "wb") as ff:

ff.write(r.content)

if __name__ == '__main__':

download_img()

方法 4 : ( 使用 you-get 包 )

python 示例代码( you-get 多线程 下载视频 ):

import os

import subprocess

from concurrent.futures import ThreadPoolExecutor, wait

def download(url):

video_data_dir = './vide_data_dir'

try:

os.makedirs(video_data_dir)

except BaseException as be:

pass

video_id = url.split('/')[-1]

video_name = f'{video_data_dir}/{video_id}'

command = f'you-get -o ./video_data -O {video_name} ' + url

print(command)

subprocess.call(command, shell=True)

print(f"退出线程 ---> {url}")

def main():

url_list = [

'https://www.bilibili.com/video/BV1Xz4y127Yo',

'https://www.bilibili.com/video/BV1yt4y1Q7SS',

'https://www.bilibili.com/video/BV1bW411n7fY',

]

with ThreadPoolExecutor(max_workers=3) as pool:

thread_id_list = [pool.submit(download, url) for url in url_list]

wait(thread_id_list)

if __name__ == '__main__':

main()

you-get 帮助

D:\> you-get --help

you-get: version 0.4.1555, a tiny downloader that scrapes the web.

usage: you-get [OPTION]... URL...

A tiny downloader that scrapes the web

optional arguments:

-V, --version Print version and exit

-h, --help Print this help message and exit

Dry-run options:

(no actual downloading)

-i, --info Print extracted information

-u, --url Print extracted information with URLs

--json Print extracted URLs in JSON format

Download options:

-n, --no-merge Do not merge video parts

--no-caption Do not download captions (subtitles, lyrics, danmaku, ...)

-f, --force Force overwriting existing files

--skip-existing-file-size-check

Skip existing file without checking file size

-F STREAM_ID, --format STREAM_ID

Set video format to STREAM_ID

-O FILE, --output-filename FILE

Set output filename

-o DIR, --output-dir DIR

Set output directory

-p PLAYER, --player PLAYER

Stream extracted URL to a PLAYER

-c COOKIES_FILE, --cookies COOKIES_FILE

Load cookies.txt or cookies.sqlite

-t SECONDS, --timeout SECONDS

Set socket timeout

-d, --debug Show traceback and other debug info

-I FILE, --input-file FILE

Read non-playlist URLs from FILE

-P PASSWORD, --password PASSWORD

Set video visit password to PASSWORD

-l, --playlist Prefer to download a playlist

-a, --auto-rename Auto rename same name different files

-k, --insecure ignore ssl errors

Playlist optional options:

--first FIRST the first number

--last LAST the last number

--size PAGE_SIZE, --page-size PAGE_SIZE

the page size number

Proxy options:

-x HOST:PORT, --http-proxy HOST:PORT

Use an HTTP proxy for downloading

-y HOST:PORT, --extractor-proxy HOST:PORT

Use an HTTP proxy for extracting only

--no-proxy Never use a proxy

-s HOST:PORT or USERNAME:PASSWORD@HOST:PORT, --socks-proxy HOST:PORT or USERNAME:PASSWORD@HOST:PORT

Use an SOCKS5 proxy for downloading

D:\>命令行下载视频:you-get https://www.bilibili.com/video/BV1Xz4y127Yo

探测视频真实的播放地址:

-i, --info Print extracted information

-u, --url Print extracted information with URLs

--json Print extracted URLs in JSON format- you-get -u https://www.bilibili.com/video/BV1Xz4y127Yo

- you-get --json https://www.bilibili.com/video/BV1Xz4y127Yo

方法 5 : ( 使用 pycurl )

linux 安装 curl: yum install curl

Python 安装模块:pip install pycurl

方法 6 : ( 使用 wget 或者 python 的 wget 模块 )

使用 wget 命令:wget http://www.robots.ox.ac.uk/~ankush/data.tar.gz

python 调用 wget 命令实现下载

使用 python 的 wget 模块:pip install wget

import wget

import tempfile

url = 'https://p0.ifengimg.com/2019_30/1106F5849B0A2A2A03AAD4B14374596C76B2BDAB_w1000_h626.jpg'

# 获取文件名

file_name = wget.filename_from_url(url)

print(file_name) #1106F5849B0A2A2A03AAD4B14374596C76B2BDAB_w1000_h626.jpg

# 下载文件,使用默认文件名,结果返回文件名

file_name = wget.download(url)

print(file_name) #1106F5849B0A2A2A03AAD4B14374596C76B2BDAB_w1000_h626.jpg

# 下载文件,重新命名输出文件名

target_name = 't1.jpg'

file_name = wget.download(url, out=target_name)

print(file_name) #t1.jpg

# 创建临时文件夹,下载到临时文件夹里

tmpdir = tempfile.gettempdir()

target_name = 't2.jpg'

file_name = wget.download(url, out=os.path.join(tmpdir, target_name))

print(file_name) #/tmp/t2.jpg

方法 7 : ( 使用 ffmpeg )

ffmpeg -ss 00:00:00 -i "https://vd4.bdstatic.com/mda-na67uu3bf6v85cnm/sc/cae_h264/1641533845968105062/mda-na67uu3bf6v85cnm.mp4?v_from_s=hkapp-haokan-hbe&auth_key=1641555906-0-0-642c8f9b47d4c37cc64d307be88df29d&bcevod_channel=searchbox_feed&pd=1&pt=3&logid=0906397151&vid=8050108300345362998&abtest=17376_2&klogid=0906397151" -t 00:05:00 -c copy "test.mp4"

ffmpeg 如何设置 header 信息

ffmpeg -user_agent "User-Agent: Mozilla/5.0 (Macintosh; Intel Mac OS X 10_12_6) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/88.0.4324.182 Safari/537.36" -headers "sec-ch-ua: 'Chromium';v='88', 'Google Chrome';v='88', ';Not A Brand';v='99'"$'\r\n'"sec-ch-ua-mobile: ?0"$"Upgrade-Insecure-Requests: 1" -i http://127.0.0.1:3000

如果只需要 ua 只加上 -user_agent 就可以。如果需要设置 -headers 其他选项时,多个选项用 $'\r\n' 链接起来。服务端接收数据格式正常,如图

ffmpeg 设置 header 请求头 UA 文件最大大小

ffmpeg -headers $'Origin: https://xxx.com\r\nUser-Agent: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/79.0.3945.130 Safari/537.36\r\nReferer: https://xxx.com' -threads 0 -i '地址' -c copy -y -f mpegts '文件名.ts' -v trace

使用-headers $’头一\r\n头二’添加header

注意顺序 ,放在命令行最后面无法生效!!!!!

后来输出了一下信息才发现问题

-v trace 用于输出当前的header信息方便调试

设置 UA 可以使用单独的 -user-agent 指令

在输出文件名前使用 -fs 1024K 限制为 1024K

ffmpeg 帮助信息:ffmpeg --help

Getting help:

-h -- print basic options

-h long -- print more options

-h full -- print all options (including all format and codec specific options, very long)

-h type=name -- print all options for the named decoder/encoder/demuxer/muxer/filter/bsf/protocol

See man ffmpeg for detailed description of the options.

Print help / information / capabilities:

-L show license

-h topic show help

-? topic show help

-help topic show help

--help topic show help

-version show version

-buildconf show build configuration

-formats show available formats

-muxers show available muxers

-demuxers show available demuxers

-devices show available devices

-codecs show available codecs

-decoders show available decoders

-encoders show available encoders

-bsfs show available bit stream filters

-protocols show available protocols

-filters show available filters

-pix_fmts show available pixel formats

-layouts show standard channel layouts

-sample_fmts show available audio sample formats

-dispositions show available stream dispositions

-colors show available color names

-sources device list sources of the input device

-sinks device list sinks of the output device

-hwaccels show available HW acceleration methods

Global options (affect whole program instead of just one file):

-loglevel loglevel set logging level

-v loglevel set logging level

-report generate a report

-max_alloc bytes set maximum size of a single allocated block

-y overwrite output files

-n never overwrite output files

-ignore_unknown Ignore unknown stream types

-filter_threads number of non-complex filter threads

-filter_complex_threads number of threads for -filter_complex

-stats print progress report during encoding

-max_error_rate maximum error rate ratio of decoding errors (0.0: no errors, 1.0: 100% errors) above which ffmpeg returns an error instead of success.

-vol volume change audio volume (256=normal)

Per-file main options:

-f fmt force format

-c codec codec name

-codec codec codec name

-pre preset preset name

-map_metadata outfile[,metadata]:infile[,metadata] set metadata information of outfile from infile

-t duration record or transcode "duration" seconds of audio/video

-to time_stop record or transcode stop time

-fs limit_size set the limit file size in bytes

-ss time_off set the start time offset

-sseof time_off set the start time offset relative to EOF

-seek_timestamp enable/disable seeking by timestamp with -ss

-timestamp time set the recording timestamp ('now' to set the current time)

-metadata string=string add metadata

-program title=string:st=number... add program with specified streams

-target type specify target file type ("vcd", "svcd", "dvd", "dv" or "dv50" with optional prefixes "pal-", "ntsc-" or "film-")

-apad audio pad

-frames number set the number of frames to output

-filter filter_graph set stream filtergraph

-filter_script filename read stream filtergraph description from a file

-reinit_filter reinit filtergraph on input parameter changes

-discard discard

-disposition disposition

Video options:

-vframes number set the number of video frames to output

-r rate set frame rate (Hz value, fraction or abbreviation)

-fpsmax rate set max frame rate (Hz value, fraction or abbreviation)

-s size set frame size (WxH or abbreviation)

-aspect aspect set aspect ratio (4:3, 16:9 or 1.3333, 1.7777)

-vn disable video

-vcodec codec force video codec ('copy' to copy stream)

-timecode hh:mm:ss[:;.]ff set initial TimeCode value.

-pass n select the pass number (1 to 3)

-vf filter_graph set video filters

-ab bitrate audio bitrate (please use -b:a)

-b bitrate video bitrate (please use -b:v)

-dn disable data

Audio options:

-aframes number set the number of audio frames to output

-aq quality set audio quality (codec-specific)

-ar rate set audio sampling rate (in Hz)

-ac channels set number of audio channels

-an disable audio

-acodec codec force audio codec ('copy' to copy stream)

-vol volume change audio volume (256=normal)

-af filter_graph set audio filters

Subtitle options:

-s size set frame size (WxH or abbreviation)

-sn disable subtitle

-scodec codec force subtitle codec ('copy' to copy stream)

-stag fourcc/tag force subtitle tag/fourcc

-fix_sub_duration fix subtitles duration

-canvas_size size set canvas size (WxH or abbreviation)

-spre preset set the subtitle options to the indicated preset

使用 requests 模块显示下载进度

1. 相关资料

请求关键参数:stream=True。默认情况下,当你进行网络请求后,响应体会立即被下载。你可以通过 stream 参数覆盖这个行为,推迟下载响应体直到访问 Response.content 属性。

import json

import requests

tarball_url = 'https://github.com/kennethreitz/requests/tarball/master'

r = requests.get(tarball_url, stream=True) # 此时仅有响应头被下载下来了,连接保持打开状态,响应体并没有下载。

print(json.dumps(dict(r.headers), ensure_ascii=False, indent=4))

# if int(r.headers['content-length']) < TOO_LONG:

# content = r.content # 只要访问 Response.content 属性,就开始下载响应体

# # ...

# pass进一步使用 Response.iter_content 和 Response.iter_lines 方法来控制工作流,或者以 Response.raw 从底层 urllib3 的 urllib3.HTTPResponse

from contextlib import closing

with closing(requests.get('http://httpbin.org/get', stream=True)) as r:

# Do things with the response here.

pass保持活动状态(持久连接)

归功于 urllib3,同一会话内的持久连接是完全自动处理的,同一会话内发出的任何请求都会自动复用恰当的连接!

注意:只有当响应体的所有数据被读取完毕时,连接才会被释放到连接池;所以确保将 stream 设置为 False 或读取 Response 对象的 content 属性。

2. 下载文件并显示进度条

在 Python3 中,print()方法的默认结束符(end=’\n’),当调用完之后,光标自动切换到下一行,此时就不能更新原有输出。

将结束符改为 “\r” ,输出完成之后,光标会回到行首,并不换行。此时再次调用 print() 方法,就会更新这一行输出了。

结束符也可以使用 “\d”,为退格符,光标回退一格,可以使用多个,按需求回退。

在结束这一行输出时,将结束符改回 “\n” 或者不指定使用默认

下面是一个格式化的进度条显示模块。代码如下:

#!/usr/bin/env python3

import requests

from contextlib import closing

"""

作者:微微寒

链接:https://www.zhihu.com/question/41132103/answer/93438156

来源:知乎

著作权归作者所有。商业转载请联系作者获得授权,非商业转载请注明出处。

"""

class ProgressBar(object):

def __init__(

self, title, count=0.0, run_status=None, fin_status=None,

total=100.0, unit='', sep='/', chunk_size=1.0

):

super(ProgressBar, self).__init__()

self.info = "[%s] %s %.2f %s %s %.2f %s"

self.title = title

self.total = total

self.count = count

self.chunk_size = chunk_size

self.status = run_status or ""

self.fin_status = fin_status or " " * len(self.status)

self.unit = unit

self.seq = sep

def __get_info(self):

# 【名称】状态 进度 单位 分割线 总数 单位

_info = self.info % (

self.title, self.status, self.count/self.chunk_size,

self.unit, self.seq, self.total/self.chunk_size, self.unit

)

return _info

def refresh(self, count=1, status=None):

self.count += count

# if status is not None:

self.status = status or self.status

end_str = "\r"

if self.count >= self.total:

end_str = '\n'

self.status = status or self.fin_status

print(self.__get_info(), end=end_str)

def main():

with closing(requests.get("http://www.futurecrew.com/skaven/song_files/mp3/razorback.mp3", stream=True)) as response:

chunk_size = 1024

content_size = int(response.headers['content-length'])

progress = ProgressBar(

"razorback", total=content_size, unit="KB",

chunk_size=chunk_size, run_status="正在下载", fin_status="下载完成"

)

# chunk_size = chunk_size < content_size and chunk_size or content_size

with open('./file.mp3', "wb") as file:

for data in response.iter_content(chunk_size=chunk_size):

file.write(data)

progress.refresh(count=len(data))

if __name__ == '__main__':

main()

Python 编写断点续传下载软件

另一种方法是调用 curl 之类支持断点续传的下载工具。后续补充

一、HTTP 断点续传原理

其实 HTTP 断点续传原理比较简单,在 HTTP 数据包中,可以增加 Range 头,这个头以字节为单位指定请求的范围,来下载范围内的字节流。如:

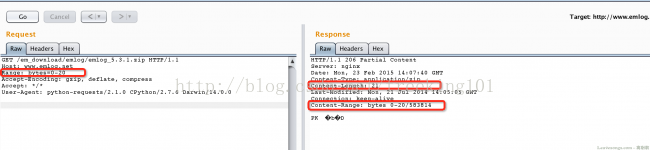

如上图勾下来的地方,我们发送数据包时选定请求的内容的范围,返回包即获得相应长度的内容。所以,我们在下载的时候,可以将目标文件分成很多“小块”,每次下载一小块(用Range标明小块的范围),直到把所有小块下载完。

当网络中断,或出错导致下载终止时,我们只需要记录下已经下载了哪些“小块”,还没有下载哪些。下次下载的时候在Range处填写未下载的小块的范围即可,这样就能构成一个断点续传。

其实像迅雷这种多线程下载器也是同样的原理。将目标文件分成一些小块,再分配给不同线程去下载,最后整合再检查完整性即可。

二、Python 下载文件实现方式

我们仍然使用之前介绍过的 requests 库作为 HTTP 请求库。

先看看这段文档:Advanced Usage — Requests 2.27.1 documentation,当请求时设置steam=True的时候就不会立即关闭连接,而我们以流的形式读取body,直到所有信息读取完全或者调用Response.close关闭连接。

所以,如果要下载大文件的话,就将 steam 设置为True,慢慢下载,而不是等整个文件下载完才返回。

stackoverflow上有同学给出了一个简单的下载 demo:

#!/usr/bin/env python3

import requests

def download_file(url):

local_filename = url.split('/')[-1]

# NOTE the stream=True parameter

r = requests.get(url, stream=True)

with open(local_filename, 'wb') as f:

for chunk in r.iter_content(chunk_size=1024):

if chunk: # filter out keep-alive new chunks

f.write(chunk)

f.flush()

return local_filename这基本上就是我们核心的下载代码了。

- 当使用 requests 的 get 下载大文件/数据时,建议使用使用 stream 模式。

- 当把 get 函数的 stream 参数设置成 False 时,它会立即开始下载文件并放到内存中,如果文件过大,有可能导致内存不足。

- 当把 get 函数的 stream 参数设置成 True 时,它不会立即开始下载,当你使用 iter_content 或 iter_lines 遍历内容或访问内容属性时才开始下载。需要注意一点:文件没有下载之前,它也需要保持连接。

iter_content:一块一块的遍历要下载的内容

iter_lines:一行一行的遍历要下载的内容

使用上面两个函数下载大文件可以防止占用过多的内存,因为每次只下载小部分数据。

示例代码:

r = requests.get(url_file, stream=True)

f = open("file_path", "wb")

for chunk in r.iter_content(chunk_size=512):

if chunk:

f.write(chunk)

三、断点续传结合大文件下载

现在结合这两个知识点写个小程序:支持断点续传的下载器。

我们可以先考虑一下需要注意的有哪些点,或者可以添加的功能有哪些:

1. 用户自定义性:可以定义cookie、referer、user-agent。如某些下载站检查用户登录才允许下载等情况。

2. 很多服务端不支持断点续传,如何判断?

3. 怎么去表达进度条?

4. 如何得知文件的总大小?使用HEAD请求?那么服务器不支持HEAD请求怎么办?

5. 下载后的文件名怎么处理?还要考虑windows不允许哪些字符做文件名。

(header中可能有filename,url中也有filename,用户还可以自己指定filename)

6. 如何去分块,是否加入多线程。其实想一下还是有很多疑虑,而且有些地方可能一时还解决不了。先大概想一下各个问题的答案:

1. headers可以由用户自定义

2. 正式下载之前先HEAD请求,得到服务器status code是否是206,

header中是否有Range-content等标志,判断是否支持断点续传。

3. 可以先不使用进度条,只显示当前下载大小和总大小

4. 在HEAD请求中匹配出Range-content中的文件总大小,或获得content-length大小(当不支持断点续传的

时候会返回总content-length)。如果不支持HEAD请求或没有content-type就设置总大小为0.

(总之不会妨碍下载即可)

5. 文件名优先级:用户自定义 > header中content-disposition > url中的定义,为了避免麻烦,

我这里和linux下的wget一样,忽略content-disposition的定义。

如果用户不指定保存的用户名的话,就以url中最后一个“/”后的内容作为用户名。

6. 为了稳定和简单,不做多线程了。如果不做多线程的话,我们分块就可以按照很小来分,如1KB,然后从头

开始下载,一K一K这样往后填充。这样避免了很多麻烦。当下载中断的时候,我们只需要简单查看

当前已经下载了多少字节,就可以从这个字节后一个开始继续下载。

解决了这些疑问,我们就开始动笔了。实际上,疑问并不是在未动笔的时候干想出来的,

基本都是我写了一半突然发现的问题。解决了这些疑问,我们就开始动笔了。实际上,疑问并不是在未动笔的时候干想出来的,基本都是我写了一半突然发现的问题。

def download(self, url, filename, headers = {}):

finished = False

block = self.config['block']

local_filename = self.remove_nonchars(filename)

tmp_filename = local_filename + '.downtmp'

if self.support_continue(url): # 支持断点续传

try:

with open(tmp_filename, 'rb') as fin:

self.size = int(fin.read()) + 1

except:

self.touch(tmp_filename)

finally:

headers['Range'] = "bytes=%d-" % (self.size, )

else:

self.touch(tmp_filename)

self.touch(local_filename)

size = self.size

total = self.total

r = requests.get(url, stream = True, verify = False, headers = headers)

if total > 0:

print "[+] Size: %dKB" % (total / 1024)

else:

print "[+] Size: None"

start_t = time.time()

with open(local_filename, 'ab') as f:

try:

for chunk in r.iter_content(chunk_size = block):

if chunk:

f.write(chunk)

size += len(chunk)

f.flush()

sys.stdout.write('\b' * 64 + 'Now: %d, Total: %s' % (size, total))

sys.stdout.flush()

finished = True

os.remove(tmp_filename)

spend = int(time.time() - start_t)

speed = int(size / 1024 / spend)

sys.stdout.write('\nDownload Finished!\nTotal Time: %ss, Download Speed: %sk/s\n' % (spend, speed))

sys.stdout.flush()

except:

import traceback

print traceback.print_exc()

print "\nDownload pause.\n"

finally:

if not finished:

with open(tmp_filename, 'wb') as ftmp:

ftmp.write(str(size))这是下载的方法。首先if语句调用 self.support_continue(url) 判断是否支持断点续传。如果支持则从一个临时文件中读取当前已经下载了多少字节,如果不存在这个文件则会抛出错误,那么size默认=0,说明一个字节都没有下载。

然后就请求url,获得下载连接,for循环下载。这个时候我们得抓住异常,一旦出现异常,不能让程序退出,而是正常将当前已下载字节size写入临时文件中。下次再次下载的时候读取这个文件,将Range设置成bytes=(size+1)-,也就是从当前字节的后一个字节开始到结束的范围。从这个范围开始下载,来实现一个断点续传。

判断是否支持断点续传的方法还兼顾了一个获得目标文件大小的功能:

def support_continue(self, url):

headers = {

'Range': 'bytes=0-4'

}

try:

r = requests.head(url, headers = headers)

crange = r.headers['content-range']

self.total = int(re.match(ur'^bytes 0-4/(\d+)$', crange).group(1))

return True

except:

pass

try:

self.total = int(r.headers['content-length'])

except:

self.total = 0

return False用正则匹配出大小,获得直接获取 headers['content-length'],获得将其设置为0.

核心代码基本上就是这些,再就是一些设置。github:py-wget/py-wget.py at master · phith0n/py-wget · GitHub

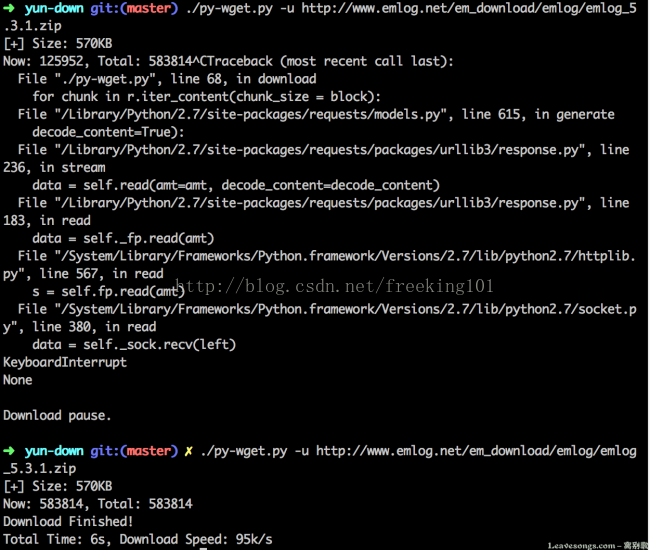

运行程序,获取 emlog 最新的安装包:

中间我按 Ctrl + C人工打断了下载进程,但之后还是继续下载,实现了“断点续传”。

但在我实际测试过程中,并不是那么多请求可以断点续传的,所以我对于不支持断点续传的文件这样处理:重新下载。

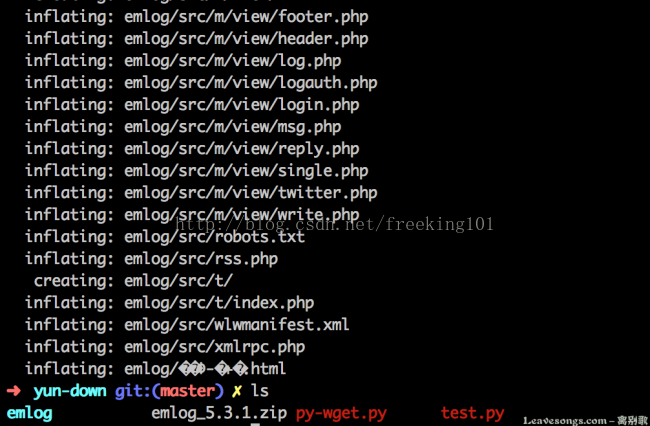

下载后的压缩包正常解压,也充分证明了下载的完整性:

动态图演示

github 地址:一个支持断点续传的小下载器:py-wget:GitHub - phith0n/py-wget: small wget by python

多线程下载文件

示例代码:

# 在python3下测试

import sys

import requests

import threading

import datetime

# 传入的命令行参数,要下载文件的url

url = sys.argv[1]

def Handler(start, end, url, filename):

headers = {'Range': 'bytes=%d-%d' % (start, end)}

r = requests.get(url, headers=headers, stream=True)

# 写入文件对应位置

with open(filename, "r+b") as fp:

fp.seek(start)

var = fp.tell()

fp.write(r.content)

def download_file(url, num_thread = 5):

r = requests.head(url)

try:

file_name = url.split('/')[-1]

file_size = int(r.headers['content-length']) # Content-Length获得文件主体的大小,当http服务器使用Connection:keep-alive时,不支持Content-Length

except:

print("检查URL,或不支持对线程下载")

return

# 创建一个和要下载文件一样大小的文件

fp = open(file_name, "wb")

fp.truncate(file_size)

fp.close()

# 启动多线程写文件

part = file_size // num_thread # 如果不能整除,最后一块应该多几个字节

for i in range(num_thread):

start = part * i

if i == num_thread - 1: # 最后一块

end = file_size

else:

end = start + part

t = threading.Thread(target=Handler, kwargs={'start': start, 'end': end, 'url': url, 'filename': file_name})

t.setDaemon(True)

t.start()

# 等待所有线程下载完成

main_thread = threading.current_thread()

for t in threading.enumerate():

if t is main_thread:

continue

t.join()

print('%s 下载完成' % file_name)

if __name__ == '__main__':

start = datetime.datetime.now().replace(microsecond=0)

download_file(url)

end = datetime.datetime.now().replace(microsecond=0)

print("用时: ", end='')

print(end-start)

Python下载图片、音乐、视频

示例代码:

# -*- coding:utf-8 -*-

import re

import requests

from contextlib import closing

from lxml import etree

class Spider(object):

""" crawl image """

def __init__(self):

self.index = 0

self.url = "http://www.xiaohuar.com"

self.proxies = {"http": "http://172.17.18.80:8080", "https": "https://172.17.18.80:8080"}

pass

def download_image(self, image_url):

real_url = self.url + image_url

print "downloading the {0} image".format(self.index)

with open("{0}.jpg".format(self.index), 'wb') as f:

self.index += 1

f.write(requests.get(real_url, proxies=self.proxies).content)

pass

pass

def start_crawl(self):

start_url = "http://www.xiaohuar.com/hua/"

r = requests.get(start_url, proxies=self.proxies)

if r.status_code == 200:

temp = r.content.decode("gbk")

html = etree.HTML(temp)

links = html.xpath('//div[@class="item_t"]//img/@src')

map(self.download_image, links)

# next_page_url = html.xpath('//div[@class="page_num"]//a/text()')

# print next_page_url[-1]

# print next_page_url[-2]

# print next_page_url[-3]

next_page_url = html.xpath(u'//div[@class="page_num"]//a[contains(text(),"下一页")]/@href')

page_num = 2

while next_page_url:

print "download {0} page images".format(page_num)

r_next = requests.get(next_page_url[0], proxies=self.proxies)

if r_next.status_code == 200:

html = etree.HTML(r_next.content.decode("gbk"))

links = html.xpath('//div[@class="item_t"]//img/@src')

map(self.download_image, links)

try:

next_page_url = html.xpath(u'//div[@class="page_num"]//a[contains(text(),"下一页")]/@href')

except BaseException as e:

next_page_url = None

print e

page_num += 1

pass

else:

print "response status code : {0}".format(r_next.status_code)

pass

else:

print "response status code : {0}".format(r.status_code)

pass

class ProgressBar(object):

def __init__(self, title, count=0.0, run_status=None, fin_status=None, total=100.0, unit='', sep='/', chunk_size=1.0):

super(ProgressBar, self).__init__()

self.info = "[%s] %s %.2f %s %s %.2f %s"

self.title = title

self.total = total

self.count = count

self.chunk_size = chunk_size

self.status = run_status or ""

self.fin_status = fin_status or " " * len(self.status)

self.unit = unit

self.seq = sep

def __get_info(self):

# 【名称】状态 进度 单位 分割线 总数 单位

_info = self.info % (self.title, self.status,

self.count / self.chunk_size, self.unit, self.seq, self.total / self.chunk_size, self.unit)

return _info

def refresh(self, count=1, status=None):

self.count += count

# if status is not None:

self.status = status or self.status

end_str = "\r"

if self.count >= self.total:

end_str = '\n'

self.status = status or self.fin_status

print self.__get_info(), end_str

def download_mp4(video_url):

print video_url

try:

with closing(requests.get(video_url.strip().decode(), stream=True)) as response:

chunk_size = 1024

with open('./{0}'.format(video_url.split('/')[-1]), "wb") as f:

for data in response.iter_content(chunk_size=chunk_size):

f.write(data)

f.flush()

except BaseException as e:

print e

return

def mp4():

proxies = {"http": "http://172.17.18.80:8080", "https": "https://172.17.18.80:8080"}

url = "http://www.budejie.com/video/"

r = requests.get(url)

print r.url

if r.status_code == 200:

print "status_code:{0}".format(r.status_code)

content = r.content

video_urls_compile = re.compile("http://.*?\.mp4")

video_urls = re.findall(video_urls_compile, content)

print len(video_urls)

# print video_urls

map(download_mp4, video_urls)

else:

print "status_code:{0}".format(r.status_code)

def mp3():

proxies = {"http": "http://172.17.18.80:8080", "https": "https://172.17.18.80:8080"}

with closing(requests.get("http://www.futurecrew.com/skaven/song_files/mp3/razorback.mp3", proxies=proxies, stream=True)) as response:

chunk_size = 1024

content_size = int(response.headers['content-length'])

progress = ProgressBar("razorback", total=content_size, unit="KB", chunk_size=chunk_size, run_status="正在下载",

fin_status="下载完成")

# chunk_size = chunk_size < content_size and chunk_size or content_size

with open('./file.mp3', "wb") as f:

for data in response.iter_content(chunk_size=chunk_size):

f.write(data)

progress.refresh(count=len(data))

if __name__ == "__main__":

t = Spider()

t.start_crawl()

mp3()

mp4()

pass下载视频的效果

另一个下载图片示例代码:

( github 地址:https://github.com/injetlee/Python/blob/master/爬虫集合/meizitu.py )

包括了创建文件夹,利用多线程爬取,设置的是5个线程,可以根据自己机器自己来设置一下。

import requests

import os

import time

import threading

from bs4 import BeautifulSoup

def download_page(url):

'''

用于下载页面

'''

headers = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:61.0) Gecko/20100101 Firefox/61.0"}

r = requests.get(url, headers=headers)

r.encoding = 'gb2312'

return r.text

def get_pic_list(html):

'''

获取每个页面的套图列表,之后循环调用get_pic函数获取图片

'''

soup = BeautifulSoup(html, 'html.parser')

pic_list = soup.find_all('li', class_='wp-item')

for i in pic_list:

a_tag = i.find('h3', class_='tit').find('a')

link = a_tag.get('href')

text = a_tag.get_text()

get_pic(link, text)

def get_pic(link, text):

'''

获取当前页面的图片,并保存

'''

html = download_page(link) # 下载界面

soup = BeautifulSoup(html, 'html.parser')

pic_list = soup.find('div', id="picture").find_all('img') # 找到界面所有图片

headers = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:61.0) Gecko/20100101 Firefox/61.0"}

create_dir('pic/{}'.format(text))

for i in pic_list:

pic_link = i.get('src') # 拿到图片的具体 url

r = requests.get(pic_link, headers=headers) # 下载图片,之后保存到文件

with open('pic/{}/{}'.format(text, link.split('/')[-1]), 'wb') as f:

f.write(r.content)

time.sleep(1) # 休息一下,不要给网站太大压力,避免被封

def create_dir(name):

if not os.path.exists(name):

os.makedirs(name)

def execute(url):

page_html = download_page(url)

get_pic_list(page_html)

def main():

create_dir('pic')

queue = [i for i in range(1, 72)] # 构造 url 链接 页码。

threads = []

while len(queue) > 0:

for thread in threads:

if not thread.is_alive():

threads.remove(thread)

while len(threads) < 5 and len(queue) > 0: # 最大线程数设置为 5

cur_page = queue.pop(0)

url = 'http://meizitu.com/a/more_{}.html'.format(cur_page)

thread = threading.Thread(target=execute, args=(url,))

thread.setDaemon(True)

thread.start()

print('{}正在下载{}页'.format(threading.current_thread().name, cur_page))

threads.append(thread)

if __name__ == '__main__':

main()

Python 使用 requests 爬取图片

爬取 校花网:http://www.xueshengmai.com/hua/ 大学校花 的图片

Python使用Scrapy爬虫框架全站爬取图片并保存本地(@妹子图@):Python使用Scrapy爬虫框架全站爬取图片并保存本地(@妹子图@) - William126 - 博客园

单线程版本

代码:

# -*- coding: utf-8 -*-

import os

import requests

# from PIL import Image

from lxml import etree

class Spider(object):

""" crawl image """

def __init__(self):

self.index = 0

self.url = "http://www.xueshengmai.com"

# self.proxies = {

# "http": "http://172.17.18.80:8080",

# "https": "https://172.17.18.80:8080"

# }

pass

def download_image(self, image_url):

real_url = self.url + image_url

print("downloading the {0} image".format(self.index))

with open("./{0}.jpg".format(self.index), 'wb') as f:

self.index += 1

try:

r = requests.get(

real_url,

# proxies=self.proxies

)

if 200 == r.status_code:

f.write(r.content)

except BaseException as e:

print(e)

pass

def add_url_prefix(self, image_url):

return self.url + image_url

def start_crawl(self):

start_url = "http://www点xueshengmai点com/hua/"

r = requests.get(

start_url,

# proxies=self.proxies

)

if 200 == r.status_code:

temp = r.content.decode("gbk")

html = etree.HTML(temp)

links = html.xpath('//div[@class="item_t"]//img/@src')

# url_list = list(map(lambda image_url=None: self.url + image_url, links))

###################################################################

# python2

# map(self.download_image, links)

# python3 返回的是一个 map object ,所以需要 使用 list 包括下

list(map(self.download_image, links))

###################################################################

next_page_url = html.xpath(u'//div[@class="page_num"]//a[contains(text(),"下一页")]/@href')

page_num = 2

while next_page_url:

print("download {0} page images".format(page_num))

r_next = requests.get(

next_page_url[0],

# proxies=self.proxies

)

if r_next.status_code == 200:

html = etree.HTML(r_next.content.decode("gbk"))

links = html.xpath('//div[@class="item_t"]//img/@src')

# python3 返回的是一个 map object ,所以需要 使用 list 包括下

list(map(self.download_image, links))

try:

t_x_string = u'//div[@class="page_num"]//a[contains(text(),"下一页")]/@href'

next_page_url = html.xpath(t_x_string)

except BaseException as e:

next_page_url = None

# print e

page_num += 1

pass

else:

print("response status code : {0}".format(r_next.status_code))

pass

else:

print("response status code : {0}".format(r.status_code))

pass

if __name__ == "__main__":

t = Spider()

t.start_crawl()

pause = input("press any key to continue")

pass

抓取 "妹子图" 代码:

# coding=utf-8

import requests

import os

from lxml import etree

import sys

'''

reload(sys)

sys.setdefaultencoding('utf-8')

'''

platform = 'Windows' if os.name == 'nt' else 'Linux'

print(f'当前系统是 【{platform}】 系统')

# http请求头

header = {

# ':authority': 'www点mzitu点com',

# ':method': 'GET',

'accept': '*/*',

'accept-encoding': 'gzip, deflate, br',

'referer': 'https://www点mzitu点com',

'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) '

'Chrome/75.0.3770.90 Safari/537.36'

}

site_url = 'http://www点mzitu点com'

url_prefix = 'http://www点mzitu点com/page/'

img_save_path = 'C:/mzitu/'

def get_page_max_num(page_html=None, flag=1):

"""

:param page_html: 页面的 HTML 文本

:param flag: 表示是 那个页面,1:所有妹子的列表页面。2:每个妹子单独的图片页面。

:return:

"""

# 找寻最大页数

s_html = etree.HTML(page_html)

xpath_string = '//div[@class="nav-links"]//a' if 1 == flag \

else '//div[@class="pagenavi"]//a//span'

display_page_link = s_html.xpath(xpath_string)

# print(display_page_link[-1].text)

max_num = display_page_link[-2].text if '下一页»' == display_page_link[-1].text \

else display_page_link[-1].text

return int(max_num)

def main():

site_html = requests.get(site_url, headers=header).text

page_max_num_1 = get_page_max_num(site_html)

for page_num in range(1, page_max_num_1 + 1):

page_url = f'{url_prefix}{page_num}'

page_html = requests.get(page_url, headers=header).text

s_page_html = etree.HTML(text=page_html)

every_page_mm_url_list = s_page_html.xpath(

'//ul[@id="pins"]//li[not(@class="box")]/span/a'

)

for tag_a in every_page_mm_url_list:

mm_url = tag_a.get('href')

title = tag_a.text.replace('\\', '').replace('/', '').replace(':', '')

title = title.replace('*', '').replace('?', '').replace('"', '')

title = title.replace('<', '').replace('>', '').replace('|', '')

mm_dir = f'{img_save_path}{title}'

if not os.path.exists(mm_dir):

os.makedirs(mm_dir)

print(f'【{title}】开始下载')

mm_page_html = requests.get(mm_url, headers=header).text

mm_page_max_num = get_page_max_num(mm_page_html, flag=2)

for index in range(1, mm_page_max_num + 1):

photo_url = f'{mm_url}/{index}'

photo_html = requests.get(photo_url, headers=header).text

s_photo_html = etree.HTML(text=photo_html)

img_url = s_photo_html.xpath('//div[@class="main-image"]//img')[0].get('src')

# print(img_url)

r = requests.get(img_url, headers=header)

if r.status_code == 200:

with open(f'{mm_dir}/{index}.jpg', 'wb') as f:

f.write(r.content)

else:

print(f'status code : {r.status_code}')

else:

print(f'【{title}】下载完成')

print(f'第【{page_num}】页完成')

if __name__ == '__main__':

main()

pass运行成功后,会在脚本所在的目录 生成对应目录,每个目录里面都有对应的图片。。。。。

多线程版本

从 Python3.2开始,Python 标准库提供了 concurrent.futures 模块, concurrent.futures 模块可以利用 multiprocessing 实现真正的平行计算。python3 自带,python2 需要安装。

代码:

# coding=utf-8

import requests

import os

from lxml import etree

import sys

from concurrent import futures

'''

reload(sys)

sys.setdefaultencoding('utf-8')

'''

platform = 'Windows' if os.name == 'nt' else 'Linux'

print(f'当前系统是 【{platform}】 系统')

# http请求头

header = {

# ':authority': 'www点mzitu点com',

# ':method': 'GET',

'accept': '*/*',

'accept-encoding': 'gzip, deflate, br',

'referer': 'https://www点mzitu点com',

'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '

'(KHTML, like Gecko) Chrome/75.0.3770.90 Safari/537.36'

}

site_url = 'http://www点mzitu点com'

url_prefix = 'http://www点mzitu点com/page/'

img_save_path = 'C:/mzitu/'

def get_page_max_num(page_html=None, flag=1):

"""

:param page_html: 页面的 HTML 文本

:param flag: 表示是 那个页面,1:所有妹子的列表页面。2:每个妹子单独的图片页面。

:return:

"""

# 找寻最大页数

s_html = etree.HTML(page_html)

xpath_string = '//div[@class="nav-links"]//a' if 1 == flag \

else '//div[@class="pagenavi"]//a//span'

display_page_link = s_html.xpath(xpath_string)

# print(display_page_link[-1].text)

max_num = display_page_link[-2].text if '下一页»' == display_page_link[-1].text \

else display_page_link[-1].text

return int(max_num)

def download_img(args_info):

img_url, mm_dir, index = args_info

r = requests.get(img_url, headers=header)

if r.status_code == 200:

with open(f'{mm_dir}/{index}.jpg', 'wb') as f:

f.write(r.content)

else:

print(f'status code : {r.status_code}')

def main():

# 线程池中线程数

with futures.ProcessPoolExecutor() as process_pool_executor:

site_html = requests.get(site_url, headers=header).text

page_max_num_1 = get_page_max_num(site_html)

for page_num in range(1, page_max_num_1 + 1):

page_url = f'{url_prefix}{page_num}'

page_html = requests.get(page_url, headers=header).text

s_page_html = etree.HTML(text=page_html)

every_page_mm_url_list = s_page_html.xpath(

'//ul[@id="pins"]//li[not(@class="box")]/span/a'

)

for tag_a in every_page_mm_url_list:

mm_url = tag_a.get('href')

title = tag_a.text.replace('\\', '').replace('/', '').replace(':', '')

title = title.replace('*', '').replace('?', '').replace('"', '')

title = title.replace('<', '').replace('>', '').replace('|', '')

mm_dir = f'{img_save_path}{title}'

if not os.path.exists(mm_dir):

os.makedirs(mm_dir)

print(f'【{title}】开始下载')

mm_page_html = requests.get(mm_url, headers=header).text

mm_page_max_num = get_page_max_num(mm_page_html, flag=2)

for index in range(1, mm_page_max_num + 1):

photo_url = f'{mm_url}/{index}'

photo_html = requests.get(photo_url, headers=header).text

s_photo_html = etree.HTML(text=photo_html)

img_url = s_photo_html.xpath('//div[@class="main-image"]//img')[0].get('src')

# 提交一个可执行的回调 task,它返回一个 Future 对象

process_pool_executor.submit(download_img, (img_url, mm_dir, index))

else:

print(f'【{title}】下载完成')

print(f'第【{page_num}】页完成')

if __name__ == '__main__':

main()

pass

相关文章

- python安装python-lzf包,报错lzf_module.c:3:20: fatal error: Python.h: No such file or directory

- 快速上手python的简单web框架flask

- 如何下载python软件

- 错过会后悔的行业,Python可以做哪些副业

- 人生苦短,我用Python!为什么现在越来越多的人转行python?

- 《Python和Pygame游戏开发指南》——2.17 动画

- python学习之基于Python的人脸识别技术学习

- Python趣味实用小工具

- python print的多种使用

- Python 数据分析教程之如何验证线性回归的假设,线性回归的假设是什么?以及如何用python验证它们?

- python 利用 PIL 生成随机验证码图片【附:使用DOS命令更换pip下载源】

- Python爬虫:如何自动化下载网站图片

- Python实例---爬取下载喜马拉雅音频文件

- Python 基础 之 多任务 gevent 协程应用的简单案例,简单实现下载网上文件的功能(urllib,gevent 等)

- 第七周:Python

- 【数字信号处理】——Python频谱绘制

- python爬虫之多线程threading、多进程multiprocessing、协程aiohttp 批量下载图片

- 电商 Python 淘宝主图下载

- Python excel转换为json

- 在Win7下正确使用Python自带的pip管理工具下载

- python将h264文件视频转为mp4格式