A Tour of the HBase API

Time: 2023-09-14 09:14:50

HBase API

Environment setup

After creating the project, add the following dependencies to pom.xml:

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-server</artifactId>

<version>2.0.5</version>

</dependency>

<dependency>

<groupId>org.apache.hbase</groupId>

<artifactId>hbase-client</artifactId>

<version>2.0.5</version>

</dependency>

DDL

Create the HBase_DDL class

Check whether a table exists

package com.cpucode.hbase.table.exist;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.*;

import org.apache.hadoop.hbase.client.*;

import java.io.IOException;

/**

* Checks whether a table exists

* @author : cpucode

* @date : 2022/1/26 17:26

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class IsTableExist {

private static Connection connection ;

static{

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

String tableName = "test";

boolean tableExist = isTableExist(tableName);

System.out.println(tableExist);

// Close the shared connection once all work is done

connection.close();

}

public static boolean isTableExist(String tableName) throws IOException {

// 3. Get the Admin object used for DDL operations

Admin admin = connection.getAdmin();

// 4. Check whether the table exists

boolean b = admin.tableExists(TableName.valueOf(tableName));

// 5. Close the Admin; the shared connection stays open for the caller

admin.close();

// 6. Return the result

return b;

}

}

https://github.com/CPU-Code/Hadoop

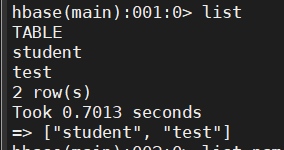
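The listings in this post close their Admin, Table, and Connection objects by hand. In the HBase 2.x client these interfaces are Closeable, so try-with-resources can do the closing instead, even when an exception is thrown. The sketch below only illustrates the closing order: `FakeConnection` and `FakeAdmin` are hypothetical stand-ins written for this example, not HBase classes, so it runs without a cluster.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-ins for Connection and Admin; they only record what happens.
final class TryWithResourcesSketch {
    static final List<String> events = new ArrayList<>();

    static final class FakeAdmin implements AutoCloseable {
        boolean tableExists(String name) {
            // Pretend that only the table "test" exists
            return "test".equals(name);
        }

        @Override
        public void close() {
            events.add("close admin");
        }
    }

    static final class FakeConnection implements AutoCloseable {
        FakeAdmin getAdmin() {
            events.add("open admin");
            return new FakeAdmin();
        }

        @Override
        public void close() {
            events.add("close connection");
        }
    }

    // The helper closes only what it opened (the admin), never the shared connection.
    static boolean isTableExist(FakeConnection connection, String tableName) {
        try (FakeAdmin admin = connection.getAdmin()) {
            return admin.tableExists(tableName);
        }
    }

    static List<String> run() {
        events.clear();
        // The connection is opened once and closed once, after both checks.
        try (FakeConnection connection = new FakeConnection()) {
            events.add("exists=" + isTableExist(connection, "test"));
            events.add("exists=" + isTableExist(connection, "missing"));
        }
        return new ArrayList<>(events);
    }

    public static void main(String[] args) {
        run().forEach(System.out::println);
    }
}
```

With the real client the same shape would presumably read `try (Admin admin = connection.getAdmin()) { ... }`, with the connection closed by whoever created it.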

Create a table

package com.cpucode.hbase.create.table;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import org.apache.hadoop.hbase.util.Bytes;

import java.io.IOException;

/**

* Creates a table

*

* @author : cpucode

* @date : 2022/1/26 20:51

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class CreateTable {

private static Connection connection ;

static{

// Create the configuration and set the ZooKeeper quorum

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

// Open the connection to HBase

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

String tableName = "test";

createTable(tableName, "info1", "info2");

}

public static void createTable(String tableName, String... cfs) throws IOException {

// 1. Make sure at least one column family was given

if (cfs.length <= 0){

System.err.println("Please specify at least one column family!");

return;

}

// 2. Check whether the table already exists

if (isTableExist(tableName)){

System.err.println("The table to create already exists!");

return;

}

// 5. Get the Admin object used for DDL operations

Admin admin = connection.getAdmin();

// 6. Create the table descriptor builder

TableDescriptorBuilder tableDescriptorBuilder = TableDescriptorBuilder.newBuilder(TableName.valueOf(tableName));

// 7. Add each column family to the table descriptor

for (String cf : cfs) {

ColumnFamilyDescriptorBuilder columnFamilyDescriptorBuilder = ColumnFamilyDescriptorBuilder.newBuilder(Bytes.toBytes(cf));

tableDescriptorBuilder.setColumnFamily(columnFamilyDescriptorBuilder.build());

}

// 8. Create the table

admin.createTable(tableDescriptorBuilder.build());

// 9. Release resources

admin.close();

connection.close();

}

public static boolean isTableExist(String tableName) throws IOException {

// 3. Get the Admin object used for DDL operations

Admin admin = connection.getAdmin();

// 4. Check whether the table exists

boolean b = admin.tableExists(TableName.valueOf(tableName));

// 5. Close the Admin

admin.close();

// 6. Return the result

return b;

}

}


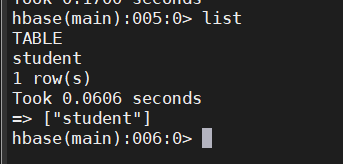

Drop a table

package com.cpucode.hbase.drop.table;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.Admin;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

import java.io.IOException;

/**

* Drops a table

*

* @author : cpucode

* @date : 2022/1/26 21:33

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class DropTable {

private static Connection connection ;

static{

// Create the configuration and set the ZooKeeper quorum

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

// Open the connection to HBase

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

String tableName = "test";

dropTable(tableName);

}

public static void dropTable(String tableName) throws IOException {

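// 1. Check whether the table exists

if (!isTableExist(tableName)){

System.err.println("The table to drop does not exist!");

return;

}

// 4. Get the Admin object used for DDL operations

Admin admin = connection.getAdmin();

// 5. Disable the table; HBase only deletes disabled tables

TableName tableName1 = TableName.valueOf(tableName);

admin.disableTable(tableName1);

// 6. Delete the table

admin.deleteTable(tableName1);

// 7. Release resources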

admin.close();

connection.close();

}

public static boolean isTableExist(String tableName) throws IOException {

// 3. Get the Admin object used for DDL operations

Admin admin = connection.getAdmin();

// 4. Check whether the table exists

boolean b = admin.tableExists(TableName.valueOf(tableName));

// 5. Close the Admin

admin.close();

// 6. Return the result

return b;

}

}


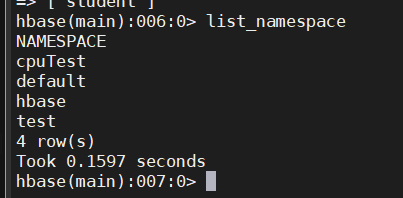

Create a namespace

package com.cpucode.hbase.create.nameSpace;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.NamespaceDescriptor;

import org.apache.hadoop.hbase.NamespaceExistException;

import org.apache.hadoop.hbase.client.Admin;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

import java.io.IOException;

/**

* Creates a namespace

*

* @author : cpucode

* @date : 2022/1/26 21:55

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class CreateNameSpace {

private static Connection connection ;

static{

// Create the configuration and set the ZooKeeper quorum

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

// Open the connection to HBase

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

String ns = "cpuTest";

createNameSpace(ns);

}

public static void createNameSpace(String ns) throws IOException {

// Reject a null or empty namespace name

if(ns == null || ns.equals("")){

System.err.println("The namespace name must not be empty");

return ;

}

// Get the Admin object used for DDL operations

Admin admin = connection.getAdmin();

// Create the namespace descriptor

NamespaceDescriptor build = NamespaceDescriptor.create(ns).build();

// Create the namespace

try {

admin.createNamespace(build);

} catch (NamespaceExistException e) {

System.err.println("The namespace already exists!");

} catch (Exception e) {

e.printStackTrace();

}finally {

// Release resources

admin.close();

connection.close();

}

}

}
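Once a namespace such as cpuTest exists, a table inside it is addressed as `namespace:table`, for example `TableName.valueOf("cpuTest:test")`; a bare name such as `test` lands in the built-in `default` namespace. The sketch below models that naming rule in plain Java so it can be tried without a cluster. `QualifiedNameSketch` and its `split` method are hypothetical helpers for illustration, not part of the HBase client, and they skip the character validation the real client performs.

```java
// A simplified model of HBase's "namespace:table" naming rule.
final class QualifiedNameSketch {
    // Name of the namespace HBase uses when none is given
    static final String DEFAULT_NAMESPACE = "default";

    // Splits a full table name into {namespace, table}.
    static String[] split(String fullName) {
        int idx = fullName.indexOf(':');
        if (idx < 0) {
            // No namespace prefix: the table belongs to "default"
            return new String[] {DEFAULT_NAMESPACE, fullName};
        }
        return new String[] {fullName.substring(0, idx), fullName.substring(idx + 1)};
    }

    public static void main(String[] args) {
        for (String name : new String[] {"cpuTest:test", "test"}) {
            String[] parts = split(name);
            System.out.println(name + " -> namespace=" + parts[0] + ", table=" + parts[1]);
        }
    }
}
```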


DML

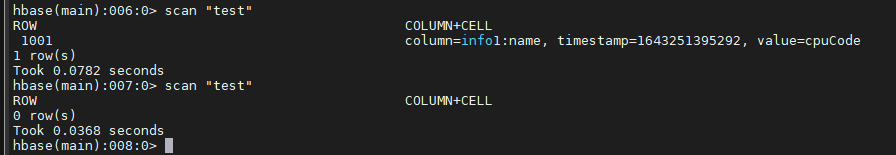

Insert data

package com.cpucode.hbase.dml.put.data;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

import org.apache.hadoop.hbase.client.Put;

import org.apache.hadoop.hbase.client.Table;

import org.apache.hadoop.hbase.util.Bytes;

import java.io.IOException;

/**

* Inserts data

*

* @author : cpucode

* @date : 2022/1/26 22:24

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class PutData {

private static Connection connection ;

static{

// Create the configuration and set the ZooKeeper quorum

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

// Open the connection to HBase

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

String tableName = "test";

putData(tableName, "1001", "info1", "name", "cpuCode");

}

public static void putData(String tableName, String rowKey, String cf, String cn, String value) throws IOException {

// 3. Get the Table object

Table table = connection.getTable(TableName.valueOf(tableName));

// 4. Create the Put object for the row key

Put put = new Put(Bytes.toBytes(rowKey));

// 5. Add the column family, column qualifier, and value

put.addColumn(Bytes.toBytes(cf), Bytes.toBytes(cn), Bytes.toBytes(value));

// 6. Write the row

table.put(put);

// 7. Release resources

table.close();

connection.close();

}

}
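Every argument to Put passes through `Bytes.toBytes`, because HBase stores row keys, column names, and values as raw byte arrays. The sketch below uses only the JDK to show what that implies: a string is stored as its UTF-8 bytes, while a long is stored as 8 big-endian bytes, so "1001" and 1001L are different keys. It is a stand-in for illustration under the assumption that it mirrors `Bytes.toBytes(String)` and `Bytes.toBytes(long)`; `ByteEncodingSketch` and its methods are hypothetical names, not HBase APIs.

```java
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

// JDK-only model of how strings and longs become byte arrays.
final class ByteEncodingSketch {
    static byte[] encodeString(String s) {
        return s.getBytes(StandardCharsets.UTF_8);
    }

    static String decodeString(byte[] bytes) {
        return new String(bytes, StandardCharsets.UTF_8);
    }

    static byte[] encodeLong(long value) {
        // ByteBuffer writes in big-endian order by default
        return ByteBuffer.allocate(Long.BYTES).putLong(value).array();
    }

    static long decodeLong(byte[] bytes) {
        return ByteBuffer.wrap(bytes).getLong();
    }

    public static void main(String[] args) {
        System.out.println("\"1001\" as a string: " + encodeString("1001").length + " bytes");
        System.out.println("1001L as a long: " + encodeLong(1001L).length + " bytes");
        System.out.println("round trip: " + decodeString(encodeString("cpuCode")));
    }
}
```

A value has to be decoded with the same type it was written with, which is why the read examples in this post call `Bytes.toString` on cells that were written from strings.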


Query a single row

package com.cpucode.hbase.dml.get.data;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.Cell;

import org.apache.hadoop.hbase.CellUtil;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import org.apache.hadoop.hbase.util.Bytes;

import java.io.IOException;

/**

* Queries a single row (Get)

*

* @author : cpucode

* @date : 2022/1/27 10:46

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class GetData {

private static Connection connection ;

static{

// Create the configuration and set the ZooKeeper quorum

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

// Open the connection to HBase

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

String tableName = "test";

getData(tableName, "1001", "info1", "name");

}

public static void getData(String tableName, String rowKey, String cf, String cn) throws IOException {

// Get the Table object

Table table = connection.getTable(TableName.valueOf(tableName));

// Create the Get object for the row key

Get get = new Get(Bytes.toBytes(rowKey));

// Optionally restrict the query to one column family

// get.addFamily(Bytes.toBytes(cf));

// Optionally restrict the query to one column (family:qualifier)

// get.addColumn(Bytes.toBytes(cf), Bytes.toBytes(cn));

// Run the query

Result result = table.get(get);

// Print every cell in the result

for (Cell cell : result.rawCells()) {

System.out.println("ROW:" + Bytes.toString(CellUtil.cloneRow(cell)) +

" CF:" + Bytes.toString(CellUtil.cloneFamily(cell))+

" CL:" + Bytes.toString(CellUtil.cloneQualifier(cell))+

" VALUE:" + Bytes.toString(CellUtil.cloneValue(cell)));

}

// Release resources

table.close();

connection.close();

}

}


Scan a table

package com.cpucode.hbase.dml.scan.table;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.Cell;

import org.apache.hadoop.hbase.CellUtil;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.*;

import org.apache.hadoop.hbase.util.Bytes;

import java.io.IOException;

/**

* Scans a table (Scan)

*

* @author : cpucode

* @date : 2022/1/27 10:56

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class ScanTable {

private static Connection connection ;

static{

// Create the configuration and set the ZooKeeper quorum

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

// Open the connection to HBase

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

String tableName = "test";

scanTable(tableName);

}

public static void scanTable(String tableName) throws IOException {

// Get the Table object

Table table = connection.getTable(TableName.valueOf(tableName));

// Create the Scan object; with no range or filter it reads the whole table

Scan scan = new Scan();

// Open the scanner

ResultScanner scanner = table.getScanner(scan);

// Print every cell of every row

for (Result result : scanner) {

for (Cell cell : result.rawCells()) {

System.out.println(Bytes.toString(CellUtil.cloneRow(cell)) + ":" +

Bytes.toString(CellUtil.cloneFamily(cell)) + ":" +

Bytes.toString(CellUtil.cloneQualifier(cell)) + ":" +

Bytes.toString(CellUtil.cloneValue(cell)));

}

}

// Release resources, scanner first

scanner.close();

table.close();

connection.close();

}

}
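The scan above reads the whole table. In the 2.x client a Scan can also be limited to a row range with `withStartRow` (inclusive by default) and `withStopRow` (exclusive by default), and rows come back in lexicographic byte order of their keys. The sketch below models that behaviour with a JDK `TreeMap` so the ordering can be checked without a cluster. `RowRangeSketch` is a hypothetical helper, and natural `String` ordering is assumed to match byte order, which holds for the ASCII keys used here.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.TreeMap;

// In-memory model of a range scan over lexicographically sorted row keys.
final class RowRangeSketch {
    // A few sample rows; TreeMap keeps them sorted by key.
    static TreeMap<String, String> sampleRows() {
        TreeMap<String, String> rows = new TreeMap<>();
        rows.put("2", "e");
        rows.put("11", "d");
        rows.put("1003", "c");
        rows.put("1002", "b");
        rows.put("1001", "a");
        return rows;
    }

    // Returns the row keys in [startRow, stopRow): start inclusive, stop exclusive.
    static List<String> scan(TreeMap<String, String> rows, String startRow, String stopRow) {
        return new ArrayList<>(rows.subMap(startRow, true, stopRow, false).keySet());
    }

    public static void main(String[] args) {
        TreeMap<String, String> rows = sampleRows();
        System.out.println("all rows:        " + rows.keySet());
        System.out.println("[1001, 1003):    " + scan(rows, "1001", "1003"));
        System.out.println("[1, 2):          " + scan(rows, "1", "2"));
    }
}
```

Note that "11" sorts after "1003" and before "2": the comparison is character by character, not numeric, which is why row keys are often zero-padded to a fixed width.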


Delete data

package com.cpucode.hbase.dml.deleta.data;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.hbase.HBaseConfiguration;

import org.apache.hadoop.hbase.TableName;

import org.apache.hadoop.hbase.client.Connection;

import org.apache.hadoop.hbase.client.ConnectionFactory;

import org.apache.hadoop.hbase.client.Delete;

import org.apache.hadoop.hbase.client.Table;

import org.apache.hadoop.hbase.util.Bytes;

import java.io.IOException;

/**

* Deletes data

*

* @author : cpucode

* @date : 2022/1/27 11:16

* @github : https://github.com/CPU-Code

* @csdn : https://blog.csdn.net/qq_44226094

*/

public class DeleteData {

private static Connection connection ;

static{

// Create the configuration and set the ZooKeeper quorum

Configuration conf = HBaseConfiguration.create();

conf.set("hbase.zookeeper.quorum","cpucode101,cpucode102,cpucode103");

try {

// Open the connection to HBase

connection = ConnectionFactory.createConnection(conf);

} catch (IOException e) {

e.printStackTrace();

}

}

public static void main(String[] args) throws IOException {

deleteData("test", "1001", "info1", "name");

}

public static void deleteData(String tableName, String rowKey, String cf, String cn) throws IOException {

// Get the Table object

Table table = connection.getTable(TableName.valueOf(tableName));

// Create the Delete object; with nothing else specified it removes the whole row

Delete delete = new Delete(Bytes.toBytes(rowKey));

// Delete one column family

// delete.addFamily(Bytes.toBytes(cf));

// Delete only the latest version of one column

// delete.addColumn(Bytes.toBytes(cf), Bytes.toBytes(cn));

// Delete all versions of one column

// delete.addColumns(Bytes.toBytes(cf), Bytes.toBytes(cn));

// Run the delete

table.delete(delete);

// Release resources

table.close();

connection.close();

}

}
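The difference between `addColumn` (latest version only) and `addColumns` (all versions) is easy to mix up. The sketch below models one column as a map from timestamp to value and applies both kinds of delete. It is a deliberately simplified, in-memory illustration: `VersionDeleteSketch` and its methods are hypothetical names, and real HBase writes delete markers and keeps only as many versions as the column family is configured for, so an older value reappears after `addColumn` only if it is still retained.

```java
import java.util.TreeMap;

// In-memory model of one column's versions: timestamp -> value.
final class VersionDeleteSketch {
    // The value a plain read would return: the newest version, or null if none is left.
    static String latest(TreeMap<Long, String> versions) {
        return versions.isEmpty() ? null : versions.lastEntry().getValue();
    }

    // Models Delete.addColumn: only the newest version goes away.
    static void deleteLatestVersion(TreeMap<Long, String> versions) {
        versions.pollLastEntry();
    }

    // Models Delete.addColumns: every version goes away.
    static void deleteAllVersions(TreeMap<Long, String> versions) {
        versions.clear();
    }

    public static void main(String[] args) {
        TreeMap<Long, String> name = new TreeMap<>();
        name.put(1L, "old");
        name.put(2L, "new");
        System.out.println("before delete:         " + latest(name));

        deleteLatestVersion(name);
        System.out.println("after addColumn-like:  " + latest(name));

        deleteAllVersions(name);
        System.out.println("after addColumns-like: " + latest(name));
    }
}
```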
