Deploying a Highly Available Cluster with Kubernetes v1.25 (kubeadm) and Containerd

1. Kubernetes high-availability cluster deployment architecture

The Kubernetes cluster in this example is deployed in the following environment.

Table 1-1 High-availability Kubernetes cluster plan

| Role | Hostname | Machine spec (cores/memory) | IP address | Installed software |
|---|---|---|---|---|
| K8s master node 1 (master and etcd) | k8s-master01.example.local | 2C4G | 172.31.3.101 | chrony-client, containerd, kubeadm, kubelet, kubectl |
| K8s master node 2 (master and etcd) | k8s-master02.example.local | 2C4G | 172.31.3.102 | chrony-client, containerd, kubeadm, kubelet, kubectl |
| K8s master node 3 (master and etcd) | k8s-master03.example.local | 2C4G | 172.31.3.103 | chrony-client, containerd, kubeadm, kubelet, kubectl |
| K8s master entry point 1 (high availability and load balancing) | k8s-ha01.example.local | 2C2G | 172.31.3.104 | chrony-server, haproxy, keepalived |
| K8s master entry point 2 (high availability and load balancing) | k8s-ha02.example.local | 2C2G | 172.31.3.105 | chrony-server, haproxy, keepalived |
| Container image registry 1 | k8s-harbor01.example.local | 2C2G | 172.31.3.106 | chrony-client, docker, docker-compose, harbor |
| Container image registry 2 | k8s-harbor02.example.local | 2C2G | 172.31.3.107 | chrony-client, docker, docker-compose, harbor |
| K8s worker node 1 | k8s-node01.example.local | 2C4G | 172.31.3.108 | chrony-client, containerd, kubeadm, kubelet |
| K8s worker node 2 | k8s-node02.example.local | 2C4G | 172.31.3.109 | chrony-client, containerd, kubeadm, kubelet |
| K8s worker node 3 | k8s-node03.example.local | 2C4G | 172.31.3.110 | chrony-client, containerd, kubeadm, kubelet |
| VIP, implemented on hosts ha01 and ha02 | k8s.example.local | - | 172.31.3.188 | - |

Software versions and the Pod and Service network plan:

| Setting | Value |
|---|---|
| Supported operating systems | CentOS 7.9/Stream 8, Rocky 8, Ubuntu 18.04/20.04 |
| Container runtime | containerd v1.6.8 |
| kubeadm version | 1.25.0 |
| Host network | 172.31.0.0/21 |
| Pod network | 192.168.0.0/12 |
| Service network | 10.96.0.0/12 |
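The host, Pod, and Service networks must not overlap. The following is a minimal sketch of how that could be checked in plain shell; the function names (`ip_to_int`, `cidr_range`, `cidr_overlap`) are hypothetical helpers and not part of any Kubernetes tool. `cidr_range` clears the host bits first, so 192.168.0.0/12 is treated as the range 192.160.0.0 to 192.175.255.255.

```shell
#!/bin/sh
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
    old_ifs=$IFS
    IFS=.
    set -- $1            # split the address into its four octets
    IFS=$old_ifs
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Print the first and last address (as integers) covered by a CIDR block.
cidr_range() {
    addr=${1%/*}
    prefix=${1#*/}
    ip=$(ip_to_int "$addr")
    size=$(( 1 << (32 - prefix) ))
    start=$(( ip / size * size ))   # clear the host bits
    echo "$start $(( start + size - 1 ))"
}

# Print "overlap" or "disjoint" for two CIDR blocks.
cidr_overlap() {
    set -- $(cidr_range "$1") $(cidr_range "$2")
    if [ "$1" -le "$4" ] && [ "$3" -le "$2" ]; then
        echo overlap
    else
        echo disjoint
    fi
}

# Values taken from the plan above.
HOST_NET=172.31.0.0/21
POD_NET=192.168.0.0/12
SVC_NET=10.96.0.0/12

echo "host vs pod:     $(cidr_overlap "$HOST_NET" "$POD_NET")"
echo "host vs service: $(cidr_overlap "$HOST_NET" "$SVC_NET")"
echo "pod vs service:  $(cidr_overlap "$POD_NET" "$SVC_NET")"
```

With the three networks from the plan, all three comparisons report `disjoint`.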

2. Deployment workflow for a Kubernetes v1.25.0 cluster with kubeadm

Official documentation:

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/

https://kubernetes.io/zh-cn/docs/setup/production-environment/tools/kubeadm/create-cluster-kubeadm/

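With kubeadm you can create a minimal Kubernetes cluster that follows best practices. In fact, you can use kubeadm to set up a cluster that passes the Kubernetes conformance tests. kubeadm also supports other cluster lifecycle functions, such as bootstrap tokens and cluster upgrades.

The deployment consists of the following steps:

- Prepare the initial environment on every host
- Make the Kubernetes cluster API entry point highly available
- Install a container runtime on all master and worker nodes; Kubernetes itself uses only containerd
- Install kubeadm, kubelet, and kubectl on all master and worker nodes
- Run kubeadm init on the first master node and verify the node's status
- Install and configure the network plugin on the first master node
- Run kubeadm join on the other master nodes to add them to the control plane
- Run kubeadm join on all worker nodes to add them to the cluster
- Create a Pod, start a container, and test access and network connectivity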

3. Example: deploying a highly available Kubernetes v1.25.0 cluster with kubeadm

3.1 Initialize all hosts

3.1.1 Set the IP address

#CentOS

[root@k8s-master01 ~]# cat /etc/sysconfig/network-scripts/ifcfg-eth0

DEVICE=eth0

NAME=eth0

BOOTPROTO=none

ONBOOT=yes

IPADDR=172.31.3.101

PREFIX=21

GATEWAY=172.31.0.2

DNS1=223.5.5.5

DNS2=180.76.76.76

#Ubuntu

root@k8s-master01:~# cat /etc/netplan/01-netcfg.yaml

network:
  version: 2
  renderer: networkd
  ethernets:
    eth0:
      addresses: [172.31.3.101/21]
      gateway4: 172.31.0.2
      nameservers:
        addresses: [223.5.5.5, 180.76.76.76]

3.1.2 Set the hostname

Run the matching command on each host:

hostnamectl set-hostname k8s-master01.example.local

hostnamectl set-hostname k8s-master02.example.local

hostnamectl set-hostname k8s-master03.example.local

hostnamectl set-hostname k8s-ha01.example.local

hostnamectl set-hostname k8s-ha02.example.local

hostnamectl set-hostname k8s-harbor01.example.local

hostnamectl set-hostname k8s-harbor02.example.local

hostnamectl set-hostname k8s-node01.example.local

hostnamectl set-hostname k8s-node02.example.local

hostnamectl set-hostname k8s-node03.example.local

3.1.3 Configure package mirrors

Configure the yum repositories on all CentOS 7 nodes as follows:

rm -f /etc/yum.repos.d/*.repo

cat > /etc/yum.repos.d/base.repo <<EOF

[base]

name=base

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever/os/\$basearch/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-\$releasever

[extras]

name=extras

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever/extras/\$basearch/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-\$releasever

[updates]

name=updates

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever/updates/\$basearch/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-\$releasever

[centosplus]

name=centosplus

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever/centosplus/\$basearch/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-\$releasever

[epel]

name=epel

baseurl=https://mirrors.cloud.tencent.com/epel/\$releasever/\$basearch/

gpgcheck=1

gpgkey=https://mirrors.cloud.tencent.com/epel/RPM-GPG-KEY-EPEL-\$releasever

EOF

Configure the yum repositories on all Rocky 8 nodes as follows:

rm -f /etc/yum.repos.d/*.repo

cat > /etc/yum.repos.d/base.repo <<EOF

[BaseOS]

name=BaseOS

baseurl=https://mirrors.sjtug.sjtu.edu.cn/rocky/\$releasever/BaseOS/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-rockyofficial

[AppStream]

name=AppStream

baseurl=https://mirrors.sjtug.sjtu.edu.cn/rocky/\$releasever/AppStream/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-rockyofficial

[extras]

name=extras

baseurl=https://mirrors.sjtug.sjtu.edu.cn/rocky/\$releasever/extras/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-rockyofficial

enabled=1

[plus]

name=plus

baseurl=https://mirrors.sjtug.sjtu.edu.cn/rocky/\$releasever/plus/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-rockyofficial

EOF

Configure the yum repositories on all CentOS Stream 8 nodes as follows:

rm -f /etc/yum.repos.d/*.repo

cat > /etc/yum.repos.d/base.repo <<EOF

[BaseOS]

name=BaseOS

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever-stream/BaseOS/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-centosofficial

[AppStream]

name=AppStream

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever-stream/AppStream/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-centosofficial

[extras]

name=extras

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever-stream/extras/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-centosofficial

[centosplus]

name=centosplus

baseurl=https://mirrors.cloud.tencent.com/centos/\$releasever-stream/centosplus/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-centosofficial

EOF

Configure the apt sources on all Ubuntu nodes as follows:

cat > /etc/apt/sources.list <<EOF

deb http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs) main restricted universe multiverse

deb-src http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs) main restricted universe multiverse

deb http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-security main restricted universe multiverse

deb-src http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-security main restricted universe multiverse

deb http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-updates main restricted universe multiverse

deb-src http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-updates main restricted universe multiverse

deb http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-proposed main restricted universe multiverse

deb-src http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-proposed main restricted universe multiverse

deb http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-backports main restricted universe multiverse

deb-src http://mirrors.cloud.tencent.com/ubuntu/ $(lsb_release -cs)-backports main restricted universe multiverse

EOF

apt update

3.1.4 Install required tools

#Install on CentOS

yum -y install vim tree lrzsz wget jq psmisc net-tools telnet yum-utils device-mapper-persistent-data lvm2 git

#On Rocky, install rsync in addition to the tools above

yum -y install rsync

#Install on Ubuntu

apt -y install tree lrzsz jq

3.1.5 Configure SSH key authentication

Set up SSH key authentication to make syncing files in later steps easier:

[root@k8s-master01 ~]# cat ssh_key_push.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2021-11-19

#FileName: ssh_key_push.sh

#URL: raymond.blog.csdn.net

#Description: ssh_key_push for CentOS 7/8 & Ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2021 All rights reserved

#*********************************************************************************************

COLOR="echo -e \\033[01;31m"

END='\033[0m'

export SSHPASS=123456

HOSTS="

172.31.3.101

172.31.3.102

172.31.3.103

172.31.3.104

172.31.3.105

172.31.3.106

172.31.3.107

172.31.3.108

172.31.3.109

172.31.3.110"

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

}

ssh_key_push(){

rm -f ~/.ssh/id_rsa*

ssh-keygen -f /root/.ssh/id_rsa -P '' &> /dev/null

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

rpm -q sshpass &> /dev/null || { ${COLOR}"Installing the sshpass package"${END};yum -y install sshpass &> /dev/null; }

else

dpkg -S sshpass &> /dev/null || { ${COLOR}"Installing the sshpass package"${END};apt -y install sshpass &> /dev/null; }

fi

for i in $HOSTS;do

{

sshpass -e ssh-copy-id -o StrictHostKeyChecking=no -i /root/.ssh/id_rsa.pub $i &> /dev/null

[ $? -eq 0 ] && echo "$i is finished" || echo "$i failed"

}&

done

wait

}

main(){

os

ssh_key_push

}

main

[root@k8s-master01 ~]# bash ssh_key_push.sh

Installing the sshpass package

172.31.3.105 is finished

172.31.3.108 is finished

172.31.3.109 is finished

172.31.3.106 is finished

172.31.3.101 is finished

172.31.3.110 is finished

172.31.3.104 is finished

172.31.3.107 is finished

172.31.3.102 is finished

172.31.3.103 is finished

3.1.6 Configure name resolution

cat >> /etc/hosts <<EOF

172.31.3.101 k8s-master01.example.local k8s-master01

172.31.3.102 k8s-master02.example.local k8s-master02

172.31.3.103 k8s-master03.example.local k8s-master03

172.31.3.104 k8s-ha01.example.local k8s-ha01

172.31.3.105 k8s-ha02.example.local k8s-ha02

172.31.3.106 k8s-harbor01.example.local k8s-harbor01

172.31.3.107 k8s-harbor02.example.local k8s-harbor02

172.31.3.108 k8s-node01.example.local k8s-node01

172.31.3.109 k8s-node02.example.local k8s-node02

172.31.3.110 k8s-node03.example.local k8s-node03

172.31.3.188 kubeapi.raymonds.cc kubeapi

172.31.3.188 harbor.raymonds.cc

EOF

for i in {102..110};do scp /etc/hosts 172.31.3.$i:/etc/ ;done
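After the file has been copied, it is worth confirming that a given name maps to the expected address. The sketch below assumes a hypothetical helper, `hosts_ip_for`, that reads a hosts-format file directly and does not go through the system resolver. The demo uses a temporary sample file so that it does not depend on the local /etc/hosts.

```shell
#!/bin/sh
# Print the IP address that a hosts-format file assigns to a name.
# $1 = hostname or alias, $2 = file to read (defaults to /etc/hosts)
hosts_ip_for() {
    awk -v name="$1" '
        /^[ \t]*#/ { next }                       # skip comment lines
        { for (i = 2; i <= NF; i++)               # fields 2..NF are names
              if ($i == name) { print $1; exit } }
    ' "${2:-/etc/hosts}"
}

# Demo against a small sample taken from the entries above.
sample=$(mktemp)
cat > "$sample" <<'EOF'
172.31.3.101 k8s-master01.example.local k8s-master01
172.31.3.188 kubeapi.raymonds.cc kubeapi
172.31.3.188 harbor.raymonds.cc
EOF

for name in k8s-master01 kubeapi harbor.raymonds.cc; do
    echo "$name -> $(hosts_ip_for "$name" "$sample")"
done
rm -f "$sample"
```

On a real node the second argument can be dropped, for example `hosts_ip_for kubeapi`, to read /etc/hosts.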

3.1.7 Disable the firewall

#CentOS

systemctl disable --now firewalld

#CentOS 7

systemctl disable --now NetworkManager

#Ubuntu

systemctl disable --now ufw

3.1.8 Disable SELinux

#CentOS

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

#Ubuntu

SELinux is not installed on Ubuntu by default, so nothing needs to be changed.

3.1.9 Disable swap

sed -ri 's/.*swap.*/#&/' /etc/fstab

swapoff -a

#On Ubuntu 20.04, run the following commands instead

sed -ri 's/.*swap.*/#&/' /etc/fstab

SD_NAME=`lsblk|awk -F"[ └─]" '/SWAP/{printf $3}'`

systemctl mask dev-${SD_NAME}.swap

swapoff -a
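The sed expression used above prefixes every line containing "swap" with `#`, so running it twice adds a second `#` to lines that are already commented. The following sketch shows one way to make the step repeatable and to check the result. `comment_out_swap` and `swap_active` are hypothetical helper names. The demo works on a temporary copy instead of the real /etc/fstab and prints the result to stdout instead of editing in place.

```shell
#!/bin/sh
# Print an fstab-style file with active swap entries commented out.
# Lines that already start with '#' are left untouched, so the output
# is the same no matter how often the function is applied.
comment_out_swap() {
    sed -E '/^[[:space:]]*#/!s/^.*[[:space:]]swap[[:space:]].*$/#&/' "$1"
}

# Succeed if a /proc/swaps-style file lists at least one swap device
# (the first line of /proc/swaps is a header).
swap_active() {
    awk 'NR > 1 { n++ } END { exit (n > 0 ? 0 : 1) }' "${1:-/proc/swaps}"
}

# Demo on a sample fstab.
demo_fstab=$(mktemp)
cat > "$demo_fstab" <<'EOF'
/dev/mapper/cl-root / xfs defaults 0 0
/dev/mapper/cl-swap swap swap defaults 0 0
#/dev/sdb1 none swap sw 0 0
EOF
comment_out_swap "$demo_fstab"
rm -f "$demo_fstab"
```

After `swapoff -a` on a real node, `swap_active || echo "swap is off"` gives a quick confirmation.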

3.1.10 Time synchronization

Install chrony-server on ha01 and ha02:

root@k8s-ha01:~# cat install_chrony_server.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2021-11-22

#FileName: install_chrony_server.sh

#URL: raymond.blog.csdn.net

#Description: install_chrony_server for CentOS 7/8 & Ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2021 All rights reserved

#*********************************************************************************************

COLOR="echo -e \\033[01;31m"

END='\033[0m'

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

}

install_chrony(){

${COLOR}"Installing the chrony package..."${END}

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

yum -y install chrony &> /dev/null

sed -i -e '/^pool.*/d' -e '/^server.*/d' -e '/^# Please consider .*/a\server ntp.aliyun.com iburst\nserver time1.cloud.tencent.com iburst\nserver ntp.tuna.tsinghua.edu.cn iburst' -e 's@^#allow.*@allow 0.0.0.0/0@' -e 's@^#local.*@local stratum 10@' /etc/chrony.conf

systemctl enable --now chronyd &> /dev/null

systemctl is-active chronyd &> /dev/null || { ${COLOR}"chrony failed to start, exiting!"${END} ; exit; }

${COLOR}"chrony installation complete"${END}

else

apt -y install chrony &> /dev/null

sed -i -e '/^pool.*/d' -e '/^# See http:.*/a\server ntp.aliyun.com iburst\nserver time1.cloud.tencent.com iburst\nserver ntp.tuna.tsinghua.edu.cn iburst' /etc/chrony/chrony.conf

echo "allow 0.0.0.0/0" >> /etc/chrony/chrony.conf

echo "local stratum 10" >> /etc/chrony/chrony.conf

systemctl enable --now chronyd &> /dev/null

systemctl is-active chronyd &> /dev/null || { ${COLOR}"chrony failed to start, exiting!"${END} ; exit; }

${COLOR}"chrony installation complete"${END}

fi

}

main(){

os

install_chrony

}

main

[root@k8s-ha01 ~]# bash install_chrony_server.sh

chrony installation complete

[root@k8s-ha02 ~]# bash install_chrony_server.sh

chrony installation complete

[root@k8s-ha01 ~]# chronyc sources -nv

210 Number of sources = 3

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* 203.107.6.88 2 6 17 39 -1507us[-8009us] +/- 37ms

^- 139.199.215.251 2 6 17 39 +10ms[ +10ms] +/- 48ms

^? 101.6.6.172 0 7 0 - +0ns[ +0ns] +/- 0ns

[root@k8s-ha02 ~]# chronyc sources -nv

210 Number of sources = 3

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* 203.107.6.88 2 6 17 40 +90us[-1017ms] +/- 32ms

^+ 139.199.215.251 2 6 33 37 +13ms[ +13ms] +/- 25ms

^? 101.6.6.172 0 7 0 - +0ns[ +0ns] +/- 0ns

Install chrony-client on the master, worker, and harbor nodes:

root@k8s-master01:~# cat install_chrony_client.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2021-11-22

#FileName: install_chrony_client.sh

#URL: raymond.blog.csdn.net

#Description: install_chrony_client for CentOS 7/8 & Ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2021 All rights reserved

#*********************************************************************************************

COLOR="echo -e \\033[01;31m"

END='\033[0m'

SERVER1=172.31.3.104

SERVER2=172.31.3.105

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

}

install_chrony(){

${COLOR}"Installing the chrony package..."${END}

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

yum -y install chrony &> /dev/null

sed -i -e '/^pool.*/d' -e '/^server.*/d' -e '/^# Please consider .*/a\server '${SERVER1}' iburst\nserver '${SERVER2}' iburst' /etc/chrony.conf

systemctl enable --now chronyd &> /dev/null

systemctl is-active chronyd &> /dev/null || { ${COLOR}"chrony failed to start, exiting!"${END} ; exit; }

${COLOR}"chrony installation complete"${END}

else

apt -y install chrony &> /dev/null

sed -i -e '/^pool.*/d' -e '/^# See http:.*/a\server '${SERVER1}' iburst\nserver '${SERVER2}' iburst' /etc/chrony/chrony.conf

systemctl enable --now chronyd &> /dev/null

systemctl is-active chronyd &> /dev/null || { ${COLOR}"chrony failed to start, exiting!"${END} ; exit; }

systemctl restart chronyd

${COLOR}"chrony installation complete"${END}

fi

}

main(){

os

install_chrony

}

main

[root@k8s-master01 ~]# for i in k8s-master02 k8s-master03 k8s-harbor01 k8s-harbor02 k8s-node01 k8s-node02 k8s-node03;do scp -o StrictHostKeyChecking=no install_chrony_client.sh $i:/root/ ; done

[root@k8s-master01 ~]# bash install_chrony_client.sh

[root@k8s-master02 ~]# bash install_chrony_client.sh

[root@k8s-master03 ~]# bash install_chrony_client.sh

[root@k8s-harbor01:~]# bash install_chrony_client.sh

[root@k8s-harbor02:~]# bash install_chrony_client.sh

[root@k8s-node01:~]# bash install_chrony_client.sh

[root@k8s-node02:~]# bash install_chrony_client.sh

[root@k8s-node03:~]# bash install_chrony_client.sh

[root@k8s-master01 ~]# chronyc sources -nv

210 Number of sources = 2

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^+ k8s-ha01 3 6 17 8 +84us[ +74us] +/- 55ms

^* k8s-ha02 3 6 17 8 -82us[ -91us] +/- 45ms
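In the `chronyc sources` output, the line marked `^*` is the source the client is currently synchronized to. A minimal sketch of checking this from a script follows; `selected_source` is a hypothetical helper that reads the command output on stdin, and the demo feeds it the sample output shown above rather than calling chronyc.

```shell
#!/bin/sh
# Print the name of the currently selected time source and succeed,
# or print nothing and fail if no line is marked "^*".
selected_source() {
    awk '$1 == "^*" { print $2; found = 1 }
         END { exit (found ? 0 : 1) }'
}

# Demo with the client output from above.
selected_source <<'EOF'
210 Number of sources = 2
MS Name/IP address Stratum Poll Reach LastRx Last sample
===============================================================================
^+ k8s-ha01 3 6 17 8 +84us[ +74us] +/- 55ms
^* k8s-ha02 3 6 17 8 -82us[ -91us] +/- 45ms
EOF
```

On a node this could be used as `chronyc sources -n | selected_source || echo "not synchronized yet"`.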

3.1.11 Set the time zone

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

echo 'Asia/Shanghai' >/etc/timezone

#On Ubuntu, also set the following

cat >> /etc/default/locale <<-EOF

LC_TIME=en_DK.UTF-8

EOF

3.1.12 Tune resource limits

ulimit -SHn 65535

cat >>/etc/security/limits.conf <<EOF

* soft nofile 65536

* hard nofile 131072

* soft nproc 65535

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited

EOF

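3.1.13 Upgrade the kernel

CentOS 7 ships with kernel 3.10, while this guide requires kernel 4.18 or later, so the kernel on CentOS 7 must be upgraded before Kubernetes is installed. On other systems, upgrade as needed. This example upgrades CentOS 7 to kernel 4.19.

Download the kernel packages on the master01 node: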

[root@k8s-master01 ~]# wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm

[root@k8s-master01 ~]# wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm

Copy them from master01 to the other nodes:

[root@k8s-master01 ~]# for i in k8s-master02 k8s-master03 k8s-node01 k8s-node02 k8s-node03;do scp -o StrictHostKeyChecking=no kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm $i:/root/ ; done

Install the kernel on the master and worker nodes:

cd /root && yum localinstall -y kernel-ml*

Change the kernel boot order on the master and worker nodes:

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)"

Check that the default kernel is 4.19:

grubby --default-kernel

[root@k8s-master01 ~]# grubby --default-kernel

/boot/vmlinuz-4.19.12-1.el7.elrepo.x86_64

Reboot all nodes, then check that the running kernel is 4.19:

reboot

uname -a

[root@k8s-master01 ~]# uname -a

Linux k8s-master01 4.19.12-1.el7.elrepo.x86_64 #1 SMP Fri Dec 21 11:06:36 EST 2018 x86_64 x86_64 x86_64 GNU/Linux
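The kernel check can also be scripted. The sketch below compares version strings with `sort -V`, which assumes GNU coreutils; `version_ge` and `kernel_base_version` are hypothetical helper names. Plain string comparison would be wrong here, because it would rank 4.9 above 4.18.

```shell
#!/bin/sh
# Succeed when version $1 is greater than or equal to version $2.
version_ge() {
    [ "$(printf '%s\n%s\n' "$2" "$1" | sort -V | head -n 1)" = "$2" ]
}

# Strip the distribution suffix from a kernel release string,
# e.g. 4.19.12-1.el7.elrepo.x86_64 becomes 4.19.12.
kernel_base_version() {
    echo "${1%%-*}"
}

required=4.18
current=$(kernel_base_version "$(uname -r)")

if version_ge "$current" "$required"; then
    echo "kernel $current meets the $required requirement"
else
    echo "kernel $current is older than $required, upgrade needed"
fi
```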

3.1.14 Install the IPVS tools and tune the kernel

Install ipvsadm on the master and worker nodes:

#CentOS

yum -y install ipvsadm ipset sysstat conntrack libseccomp

#Ubuntu

apt -y install ipvsadm ipset sysstat conntrack libseccomp-dev

Configure the IPVS modules on the master and worker nodes. From kernel 4.19 onward nf_conntrack_ipv4 has been replaced by nf_conntrack; on older kernels use nf_conntrack_ipv4:

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack #on kernels older than 4.19, change this line to nf_conntrack_ipv4

#On kernels older than 4.19, replace nf_conntrack below with nf_conntrack_ipv4.
#Keep comments out of the file itself: modules-load.d only accepts whole-line comments.
cat > /etc/modules-load.d/ipvs.conf <<EOF
ip_vs
ip_vs_lc
ip_vs_wlc
ip_vs_rr
ip_vs_wrr
ip_vs_lblc
ip_vs_lblcr
ip_vs_dh
ip_vs_sh
ip_vs_fo
ip_vs_nq
ip_vs_sed
ip_vs_ftp
nf_conntrack
ip_tables
ip_set
xt_set
ipt_set
ipt_rpfilter
ipt_REJECT
ipip
EOF

Then run systemctl restart systemd-modules-load.service to load the modules.
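To see which of the configured modules did not load, the module list can be compared with /proc/modules. This is a rough sketch with a hypothetical helper, `missing_modules`. Modules compiled into the kernel never appear in /proc/modules, so a name reported here is only a hint to investigate, not proof of a failure. The demo uses small sample files so that it runs anywhere.

```shell
#!/bin/sh
# Print every module named in a modules-load.d file that is absent
# from a /proc/modules-style listing.
# $1 = config file, $2 = listing (defaults to /proc/modules)
missing_modules() {
    conf=$1
    loaded=${2:-/proc/modules}
    # Drop blank lines and whole-line comments (# or ;), then test each name.
    grep -Ev '^[[:space:]]*([#;]|$)' "$conf" | while read -r mod; do
        grep -q "^${mod} " "$loaded" || echo "$mod"
    done
}

# Demo with sample data.
demo_conf=$(mktemp)
demo_loaded=$(mktemp)
printf '%s\n' ip_vs ip_vs_rr nf_conntrack > "$demo_conf"
printf '%s\n' \
    'ip_vs 151552 24 ip_vs_wlc, Live 0x0' \
    'nf_conntrack 143360 2 nf_nat,ip_vs, Live 0x0' > "$demo_loaded"
missing_modules "$demo_conf" "$demo_loaded"
rm -f "$demo_conf" "$demo_loaded"
```

On a node, `missing_modules /etc/modules-load.d/ipvs.conf` reads the live /proc/modules.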

Enable the kernel parameters that a k8s cluster requires. Configure them on the master and worker nodes:

cat > /etc/sysctl.d/k8s.conf <<EOF

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system
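Long sysctl files make it easy to repeat a key by accident, and because settings are applied in order, a later line silently overrides an earlier one. The following sketch lists keys that occur more than once; `duplicate_sysctl_keys` is a hypothetical helper, and the demo runs on a small sample file.

```shell
#!/bin/sh
# Print each key that is assigned more than once in a sysctl file.
duplicate_sysctl_keys() {
    awk -F= '
        /^[ \t]*(#|;|$)/ { next }            # skip comments and blank lines
        {
            key = $1
            gsub(/[ \t]/, "", key)           # strip whitespace around the key
            if (seen[key]++ == 1) print key  # report on the second occurrence only
        }
    ' "$1"
}

# Demo with a sample file.
demo=$(mktemp)
cat > "$demo" <<'EOF'
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_max_syn_backlog = 16384
net.core.somaxconn = 16384
net.ipv4.tcp_max_syn_backlog = 16384
EOF
duplicate_sysctl_keys "$demo"
rm -f "$demo"
```

On a node this would be run as `duplicate_sysctl_keys /etc/sysctl.d/k8s.conf`.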

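Common Kubernetes kernel tuning parameters explained:

net.ipv4.ip_forward = 1 #0 disables IP forwarding; 1 enables it.

net.bridge.bridge-nf-call-iptables = 1 #Packets forwarded by a layer-2 bridge are also filtered by the iptables FORWARD rules, so layer-3 iptables rules can end up filtering layer-2 frames

net.bridge.bridge-nf-call-ip6tables = 1 #Whether bridged IPv6 packets are filtered by the ip6tables chains

fs.may_detach_mounts = 1 #Must be set to 1 when containers run on the system

vm.overcommit_memory=1

#0: the kernel checks whether enough memory is available for the process; if so, the allocation is allowed, otherwise it fails and the error is returned to the process.

#1: the kernel always allows the allocation, regardless of the current memory state.

#2: the kernel refuses allocations that would exceed swap plus a configurable share of physical memory (vm.overcommit_ratio).

vm.panic_on_oom=0

#OOM is short for out of memory: memory is exhausted and cannot be allocated. This parameter decides how the kernel reacts.

#0: start the OOM killer when memory runs out.

#1: a kernel panic may be triggered when memory runs out, or the OOM killer may run instead.

#2: always force a kernel panic when memory runs out.

fs.inotify.max_user_watches=89100 #Maximum number of watches one user can hold at the same time (a watch usually targets a directory, so this bounds how many directories one user can monitor at once)

fs.file-max=52706963 #Maximum number of open files across all processes

fs.nr_open=52706963 #Maximum number of files a single process can allocate

net.netfilter.nf_conntrack_max=2310720 #Size of the connection tracking table. It is recommended to derive it from memory, CONNTRACK_MAX = RAMSIZE (in bytes) / 16384 / (x / 32), where x is the pointer size in bits, and to keep nf_conntrack_max = 4 * nf_conntrack_buckets. Default: 262144

net.ipv4.tcp_keepalive_time = 600 #Idle time before keepalive probes are sent, in other words the normal heartbeat period. Default: 7200s (2 hours)

net.ipv4.tcp_keepalive_probes = 3 #Number of further keepalive probes sent when no acknowledgment arrives after tcp_keepalive_time. Default: 9

net.ipv4.tcp_keepalive_intvl =15 #Interval between keepalive probes. Default: 75s

net.ipv4.tcp_max_tw_buckets = 36000 #Intermediate proxies such as Nginx should watch this value because it protects the system: once every port is in use, the service fails. tcp_max_tw_buckets lowers the chance of that happening and buys time to react.

net.ipv4.tcp_tw_reuse = 1 #Only affects the client side (outgoing connections); when enabled, the client reclaims TIME_WAIT sockets within 1s

net.ipv4.tcp_max_orphans = 327680 #Maximum number of sockets not attached to any process that the system will handle. Watch this value when many connections must be set up quickly.

net.ipv4.tcp_orphan_retries = 3

#Relevant when many connections sit in FIN-WAIT-1.

#A FIN can be lost after it is sent, so it is retransmitted with backoff (exponential in 2.6-series kernels: 2s, 4s, ...). tcp_orphan_retries caps the number of retries.

net.ipv4.tcp_syncookies = 1 #Switch for the SYN cookie feature, which protects against some SYN flood attacks. tcp_synack_retries and tcp_syn_retries define the number of SYN retries.

net.ipv4.tcp_max_syn_backlog = 16384 #Maximum length of the queue of incoming SYN requests, that is, the upper bound on half-open connections. Default: 1024. Raising it helps heavily loaded servers.

net.ipv4.ip_conntrack_max = 65536 #Limits the number of tracked connections to 65536. This is the legacy counterpart of net.netfilter.nf_conntrack_max; on kernels where the key no longer exists, sysctl reports an error for this line

net.ipv4.tcp_timestamps = 0 #Disables TCP timestamps. With iptables NAT, internal machines could ping a domain but curl failed; the cause turned out to be net.ipv4.tcp_timestamps set to 1 (timestamps enabled)

net.core.somaxconn = 16384 #Upper bound on the listen() backlog of a socket. The backlog is the socket's listen queue: a request that has not yet been handled or established waits there. The server can process all requests in the backlog, and processed requests leave the queue. When the server is slow and the queue fills up, new requests are rejected.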

After configuring the kernel on all nodes, reboot the servers and confirm that the modules are still loaded after the reboot:

reboot

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

[root@k8s-master01 ~]# lsmod | grep --color=auto -e ip_vs -e nf_conntrack

ip_vs_ftp 16384 0

nf_nat 32768 1 ip_vs_ftp

ip_vs_sed 16384 0

ip_vs_nq 16384 0

ip_vs_fo 16384 0

ip_vs_sh 16384 0

ip_vs_dh 16384 0

ip_vs_lblcr 16384 0

ip_vs_lblc 16384 0

ip_vs_wrr 16384 0

ip_vs_rr 16384 0

ip_vs_wlc 16384 0

ip_vs_lc 16384 0

ip_vs 151552 24 ip_vs_wlc,ip_vs_rr,ip_vs_dh,ip_vs_lblcr,ip_vs_sh,ip_vs_fo,ip_vs_nq,ip_vs_lblc,ip_vs_wrr,ip_vs_lc,ip_vs_sed,ip_vs_ftp

nf_conntrack 143360 2 nf_nat,ip_vs

nf_defrag_ipv6 20480 1 nf_conntrack

nf_defrag_ipv4 16384 1 nf_conntrack

libcrc32c 16384 4 nf_conntrack,nf_nat,xfs,ip_vs

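3.2 Deploy a highly available entry point for the Kubernetes cluster API

(Note: if the cluster is not highly available, haproxy and keepalived do not need to be installed.)

On a public cloud, use the provider's own load balancer, such as Alibaba Cloud SLB or Tencent Cloud CLB, in place of haproxy and keepalived, because most public clouds do not support keepalived. On Alibaba Cloud, the kubectl client also cannot run on a master node, because SLB has a loopback problem: a server behind the SLB cannot connect back to the SLB. Tencent Cloud has fixed this problem, so it is the recommended choice.

Perform the following steps on 172.31.3.104 and 172.31.3.105.

3.2.1 Install HAProxy

Use HAProxy to load-balance the Kubernetes API service: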

[root@k8s-ha01 ~]# cat install_haproxy.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2021-12-29

#FileName: install_haproxy.sh

#URL: raymond.blog.csdn.net

#Description: install_haproxy for CentOS 7/8 & Ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2021 All rights reserved

#*********************************************************************************************

SRC_DIR=/usr/local/src

COLOR="echo -e \\033[01;31m"

END='\033[0m'

CPUS=`lscpu |awk '/^CPU\(s\)/{print $2}'`

#Lua download URL: http://www.lua.org/ftp/lua-5.4.4.tar.gz

LUA_FILE=lua-5.4.4.tar.gz

#HAProxy download URL: https://www.haproxy.org/download/2.6/src/haproxy-2.6.4.tar.gz

HAPROXY_FILE=haproxy-2.6.4.tar.gz

HAPROXY_INSTALL_DIR=/apps/haproxy

STATS_AUTH_USER=admin

STATS_AUTH_PASSWORD=123456

VIP=172.31.3.188

MASTER01=172.31.3.101

MASTER02=172.31.3.102

MASTER03=172.31.3.103

HARBOR01=172.31.3.106

HARBOR02=172.31.3.107

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

}

check_file (){

cd ${SRC_DIR}

${COLOR}'Checking the HAProxy source packages'${END}

if [ ! -e ${LUA_FILE} ];then

${COLOR}"${LUA_FILE} is missing; place it in ${SRC_DIR}"${END}

exit

elif [ ! -e ${HAPROXY_FILE} ];then

${COLOR}"${HAPROXY_FILE} is missing; place it in ${SRC_DIR}"${END}

exit

else

${COLOR}"All required files are ready"${END}

fi

}

install_haproxy(){

[ -d ${HAPROXY_INSTALL_DIR} ] && { ${COLOR}"HAProxy is already installed; aborting"${END};exit; }

${COLOR}"Installing HAProxy"${END}

${COLOR}"Installing the HAProxy dependencies"${END}

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

yum -y install gcc make gcc-c++ glibc glibc-devel pcre pcre-devel openssl openssl-devel systemd-devel libtermcap-devel ncurses-devel libevent-devel readline-devel &> /dev/null

else

apt update &> /dev/null;apt -y install gcc make openssl libssl-dev libpcre3 libpcre3-dev zlib1g-dev libreadline-dev libsystemd-dev &> /dev/null

fi

tar xf ${LUA_FILE}

LUA_DIR=`echo ${LUA_FILE} | sed -nr 's/^(.*[0-9]).([[:lower:]]).*/\1/p'`

cd ${LUA_DIR}

make all test

cd ${SRC_DIR}

tar xf ${HAPROXY_FILE}

HAPROXY_DIR=`echo ${HAPROXY_FILE} | sed -nr 's/^(.*[0-9]).([[:lower:]]).*/\1/p'`

cd ${HAPROXY_DIR}

make -j ${CPUS} ARCH=x86_64 TARGET=linux-glibc USE_PCRE=1 USE_OPENSSL=1 USE_ZLIB=1 USE_SYSTEMD=1 USE_CPU_AFFINITY=1 USE_LUA=1 LUA_INC=${SRC_DIR}/${LUA_DIR}/src/ LUA_LIB=${SRC_DIR}/${LUA_DIR}/src/ PREFIX=${HAPROXY_INSTALL_DIR}

make install PREFIX=${HAPROXY_INSTALL_DIR}

[ $? -eq 0 ] && $COLOR"HAProxy compiled and installed successfully"$END || { $COLOR"HAProxy compilation or installation failed, exiting!"$END;exit; }

cat > /lib/systemd/system/haproxy.service <<-EOF

[Unit]

Description=HAProxy Load Balancer

After=syslog.target network.target

[Service]

ExecStartPre=/usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -c -q

ExecStart=/usr/sbin/haproxy -Ws -f /etc/haproxy/haproxy.cfg -p /var/lib/haproxy/haproxy.pid

ExecReload=/bin/kill -USR2 \$MAINPID

[Install]

WantedBy=multi-user.target

EOF

[ -L /usr/sbin/haproxy ] || ln -s ../..${HAPROXY_INSTALL_DIR}/sbin/haproxy /usr/sbin/ &> /dev/null

[ -d /etc/haproxy ] || mkdir /etc/haproxy &> /dev/null

[ -d /var/lib/haproxy/ ] || mkdir -p /var/lib/haproxy/ &> /dev/null

cat > /etc/haproxy/haproxy.cfg <<-EOF

global

maxconn 100000

chroot ${HAPROXY_INSTALL_DIR}

stats socket /var/lib/haproxy/haproxy.sock mode 600 level admin

uid 99

gid 99

daemon

pidfile /var/lib/haproxy/haproxy.pid

log 127.0.0.1 local3 info

defaults

option http-keep-alive

option forwardfor

maxconn 100000

mode http

timeout connect 300000ms

timeout client 300000ms

timeout server 300000ms

listen stats

mode http

bind 0.0.0.0:9999

stats enable

log global

stats uri /haproxy-status

stats auth ${STATS_AUTH_USER}:${STATS_AUTH_PASSWORD}

listen kubernetes-6443

bind ${VIP}:6443

mode tcp

log global

server ${MASTER01} ${MASTER01}:6443 check inter 3s fall 2 rise 5

server ${MASTER02} ${MASTER02}:6443 check inter 3s fall 2 rise 5

server ${MASTER03} ${MASTER03}:6443 check inter 3s fall 2 rise 5

listen harbor-80

bind ${VIP}:80

mode http

log global

balance source

server ${HARBOR01} ${HARBOR01}:80 check inter 3s fall 2 rise 5

server ${HARBOR02} ${HARBOR02}:80 check inter 3s fall 2 rise 5

EOF

cat >> /etc/sysctl.conf <<-EOF

net.ipv4.ip_nonlocal_bind = 1

EOF

sysctl -p &> /dev/null

echo "PATH=${HAPROXY_INSTALL_DIR}/sbin:${PATH}" > /etc/profile.d/haproxy.sh

systemctl daemon-reload

systemctl enable --now haproxy &> /dev/null

systemctl is-active haproxy &> /dev/null && ${COLOR}"HAProxy service started successfully!"${END} || { ${COLOR}"HAProxy failed to start, exiting!"${END} ; exit; }

${COLOR}"HAProxy installation complete"${END}

}

main(){

os

check_file

install_haproxy

}

main

[root@k8s-ha01 ~]# bash install_haproxy.sh

[root@k8s-ha02 ~]# bash install_haproxy.sh

3.2.2 安装 Keepalived

Install keepalived to make HAProxy highly available.

Create the Keepalived health check script on all ha nodes:

[root@k8s-ha01 ~]# cat check_haproxy.sh

#!/bin/bash

err=0

for k in $(seq 1 3);do

#-x matches the process name exactly, so this script (check_haproxy.sh) is not matched

check_code=$(pgrep -x haproxy)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 1

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi
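The health check above is a retry loop: try up to three times, one second apart, and give up only if every attempt fails. The same pattern can be written as a small reusable function. This is a sketch, and `retry_check` is a hypothetical name rather than anything Keepalived provides.

```shell
#!/bin/sh
# Run a command up to $1 times, one second apart.
# Succeed as soon as one attempt succeeds; fail if all attempts fail.
retry_check() {
    attempts=$1
    shift
    i=1
    while [ "$i" -le "$attempts" ]; do
        "$@" && return 0
        i=$((i + 1))
        # Sleep only if another attempt follows.
        if [ "$i" -le "$attempts" ]; then
            sleep 1
        fi
    done
    return 1
}

# Demo with a command that always succeeds.
retry_check 3 true && echo "check passed"
```

In the health check it could be used as `retry_check 3 pgrep -x haproxy > /dev/null || systemctl stop keepalived`.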

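Install Keepalived on the ha01 and ha02 nodes. Their configurations (/etc/keepalived/keepalived.conf) differ, so keep them apart, and check the network interface name on each node (the interface parameter).

Install keepalived-master on the ha01 node: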

[root@k8s-ha01 ~]# cat install_keepalived_master.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2021-12-29

#FileName: install_keepalived_master.sh

#URL: raymond.blog.csdn.net

#Description: install_keepalived for CentOS 7/8 & Ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2021 All rights reserved

#*********************************************************************************************

SRC_DIR=/usr/local/src

COLOR="echo -e \\033[01;31m"

END='\033[0m'

KEEPALIVED_URL=https://keepalived.org/software/

KEEPALIVED_FILE=keepalived-2.2.7.tar.gz

KEEPALIVED_INSTALL_DIR=/apps/keepalived

CPUS=`lscpu |awk '/^CPU\(s\)/{print $2}'`

NET_NAME=`ip addr |awk -F"[: ]" '/^2: e.*/{print $3}'`

STATE=MASTER

PRIORITY=100

VIP=172.31.3.188

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

OS_RELEASE_VERSION=`sed -rn '/^VERSION_ID=/s@.*="?([0-9]+)\.?.*"?@\1@p' /etc/os-release`

}

check_file (){

cd ${SRC_DIR}

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

rpm -q wget &> /dev/null || yum -y install wget &> /dev/null

fi

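if [ ! -e ${KEEPALIVED_FILE} ];then

${COLOR}"${KEEPALIVED_FILE} is missing; for an offline install, place it in ${SRC_DIR}"${END}

${COLOR}'Downloading the Keepalived source package'${END}

wget ${KEEPALIVED_URL}${KEEPALIVED_FILE} || { ${COLOR}"Failed to download the Keepalived source package"${END}; exit; }

fi

#Check for check_haproxy.sh separately so that it is also verified after a download

if [ ! -e check_haproxy.sh ];then

${COLOR}"check_haproxy.sh is missing; place it in ${SRC_DIR}"${END}

exit

else

${COLOR}"All required files are ready"${END}

fi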

}

install_keepalived(){

[ -d ${KEEPALIVED_INSTALL_DIR} ] && { ${COLOR}"Keepalived is already installed; aborting"${END};exit; }

${COLOR}"Installing Keepalived"${END}

${COLOR}"Installing the Keepalived dependencies"${END}

if [ ${OS_ID} == "Rocky" -a ${OS_RELEASE_VERSION} == 8 ];then

URL=mirrors.sjtug.sjtu.edu.cn

if ! grep -Rq "\[PowerTools\]" /etc/yum.repos.d/;then

cat > /etc/yum.repos.d/PowerTools.repo <<-EOF

[PowerTools]

name=PowerTools

baseurl=https://${URL}/rocky/\$releasever/PowerTools/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-rockyofficial

EOF

fi

fi

if [ ${OS_ID} == "CentOS" -a ${OS_RELEASE_VERSION} == 8 ];then

URL=mirrors.cloud.tencent.com

if ! grep -Rq "\[PowerTools\]" /etc/yum.repos.d/;then

cat > /etc/yum.repos.d/PowerTools.repo <<-EOF

[PowerTools]

name=PowerTools

baseurl=https://${URL}/centos/\$releasever/PowerTools/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-centosofficial

EOF

fi

fi

if [[ ${OS_RELEASE_VERSION} == 8 ]] &> /dev/null;then

yum -y install make gcc ipvsadm autoconf automake openssl-devel libnl3-devel iptables-devel ipset-devel file-devel net-snmp-devel glib2-devel pcre2-devel libnftnl-devel libmnl-devel systemd-devel &> /dev/null

elif [[ ${OS_RELEASE_VERSION} == 7 ]] &> /dev/null;then

yum -y install make gcc libnfnetlink-devel libnfnetlink ipvsadm libnl libnl-devel libnl3 libnl3-devel lm_sensors-libs net-snmp-agent-libs net-snmp-libs openssh-server openssh-clients openssl openssl-devel automake iproute &> /dev/null

elif [[ ${OS_RELEASE_VERSION} == 20 ]] &> /dev/null;then

apt update &> /dev/null;apt -y install make gcc ipvsadm build-essential pkg-config automake autoconf libipset-dev libnl-3-dev libnl-genl-3-dev libssl-dev libxtables-dev libip4tc-dev libip6tc-dev libipset-dev libmagic-dev libsnmp-dev libglib2.0-dev libpcre2-dev libnftnl-dev libmnl-dev libsystemd-dev

else

apt update &> /dev/null;apt -y install make gcc ipvsadm build-essential pkg-config automake autoconf iptables-dev libipset-dev libnl-3-dev libnl-genl-3-dev libssl-dev libxtables-dev libip4tc-dev libip6tc-dev libipset-dev libmagic-dev libsnmp-dev libglib2.0-dev libpcre2-dev libnftnl-dev libmnl-dev libsystemd-dev &> /dev/null

fi

tar xf ${KEEPALIVED_FILE}

KEEPALIVED_DIR=`echo ${KEEPALIVED_FILE} | sed -nr 's/^(.*[0-9]).([[:lower:]]).*/\1/p'`

cd ${KEEPALIVED_DIR}

./configure --prefix=${KEEPALIVED_INSTALL_DIR} --disable-fwmark

make -j $CPUS && make install

[ $? -eq 0 ] && ${COLOR}"Keepalived compiled and installed successfully"${END} || { ${COLOR}"Keepalived compilation or installation failed, exiting!"${END};exit; }

[ -d /etc/keepalived ] || mkdir -p /etc/keepalived &> /dev/null

cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script check_haproxy {

script "/etc/keepalived/check_haproxy.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state ${STATE}

interface ${NET_NAME}

virtual_router_id 51

priority ${PRIORITY}

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

${VIP} dev ${NET_NAME} label ${NET_NAME}:1

}

track_script {

check_haproxy

}

}

EOF

cp ./keepalived/keepalived.service /lib/systemd/system/

cd ${SRC_DIR}

mv check_haproxy.sh /etc/keepalived/check_haproxy.sh

chmod +x /etc/keepalived/check_haproxy.sh

echo "PATH=${KEEPALIVED_INSTALL_DIR}/sbin:\${PATH}" > /etc/profile.d/keepalived.sh

systemctl daemon-reload

systemctl enable --now keepalived &> /dev/null

systemctl is-active keepalived &> /dev/null && ${COLOR}"Keepalived 服务启动成功!"${END} || { ${COLOR}"Keepalived 启动失败,退出!"${END} ; exit; }

${COLOR}"Keepalived安装完成"${END}

}

main(){

os

check_file

install_keepalived

}

main

[root@k8s-ha01 ~]# bash install_keepalived_master.sh

[root@k8s-ha01 ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP group default qlen 1000

link/ether 00:0c:29:04:fa:9d brd ff:ff:ff:ff:ff:ff

inet 172.31.3.104/21 brd 172.31.7.255 scope global noprefixroute eth0

valid_lft forever preferred_lft forever

inet 172.31.3.188/32 scope global eth0:1

valid_lft forever preferred_lft forever

inet6 fe80::20c:29ff:fe04:fa9d/64 scope link

valid_lft forever preferred_lft forever
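
The `ip a` output above shows the VIP 172.31.3.188 bound to eth0:1 on ha01. The same check can be scripted. This is a minimal sketch, not part of the original scripts: the helper name `has_vip` is hypothetical, and it reads `ip -o -4 addr show` style text from stdin so that it can be dry-run against sample text.

```shell
# has_vip VIP: succeed when "inet VIP/<prefix>" appears in the text on stdin.
# -F treats the address as a fixed string, so the dots are not regex wildcards.
has_vip() {
    grep -qF "inet ${1}/"
}

# Dry run against a line shaped like the eth0:1 entry shown above.
sample='2: eth0    inet 172.31.3.188/32 scope global eth0:1'
if printf '%s\n' "$sample" | has_vip 172.31.3.188; then
    echo "VIP present"
fi

# On a real node (not run here):
#   ip -o -4 addr show | has_vip 172.31.3.188 && echo "this node holds the VIP"
```

Running the commented command on both ha01 and ha02 should report the VIP on exactly one of them.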

Install the Keepalived BACKUP instance on the ha02 node:

[root@k8s-ha02 ~]# cat install_keepalived_backup.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2021-12-29

#FileName: install_keepalived_backup.sh

#URL: raymond.blog.csdn.net

#Description: install_keepalived for CentOS 7/8 & Ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2021 All rights reserved

#*********************************************************************************************

SRC_DIR=/usr/local/src

COLOR="echo -e \\033[01;31m"

END='\033[0m'

KEEPALIVED_URL=https://keepalived.org/software/

KEEPALIVED_FILE=keepalived-2.2.7.tar.gz

KEEPALIVED_INSTALL_DIR=/apps/keepalived

CPUS=`lscpu |awk '/^CPU\(s\)/{print $2}'`

NET_NAME=`ip addr |awk -F"[: ]" '/^2: e.*/{print $3}'`

STATE=BACKUP

PRIORITY=90

VIP=172.31.3.188

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

OS_RELEASE_VERSION=`sed -rn '/^VERSION_ID=/s@.*="?([0-9]+)\.?.*"?@\1@p' /etc/os-release`

}

check_file (){

cd ${SRC_DIR}

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

rpm -q wget &> /dev/null || yum -y install wget &> /dev/null

fi

if [ ! -e ${KEEPALIVED_FILE} ];then

${COLOR}"缺少${KEEPALIVED_FILE}文件,如果是离线包,请放到${SRC_DIR}目录下"${END}

${COLOR}'开始下载Keepalived源码包'${END}

wget ${KEEPALIVED_URL}${KEEPALIVED_FILE} || { ${COLOR}"Keepalived源码包下载失败"${END}; exit; }

fi

if [ ! -e check_haproxy.sh ];then

${COLOR}"缺少check_haproxy.sh文件,请把文件放到${SRC_DIR}目录下"${END}

exit

else

${COLOR}"相关文件已准备好"${END}

fi

}

install_keepalived(){

[ -d ${KEEPALIVED_INSTALL_DIR} ] && { ${COLOR}"Keepalived已存在,安装失败"${END};exit; }

${COLOR}"开始安装Keepalived"${END}

${COLOR}"开始安装Keepalived依赖包"${END}

if [ ${OS_ID} == "Rocky" -a ${OS_RELEASE_VERSION} == 8 ];then

URL=mirrors.sjtug.sjtu.edu.cn

if ! grep -qR "\[PowerTools\]" /etc/yum.repos.d/;then

cat > /etc/yum.repos.d/PowerTools.repo <<-EOF

[PowerTools]

name=PowerTools

baseurl=https://${URL}/rocky/\$releasever/PowerTools/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-rockyofficial

EOF

fi

fi

if [ ${OS_ID} == "CentOS" -a ${OS_RELEASE_VERSION} == 8 ];then

URL=mirrors.cloud.tencent.com

if ! grep -qR "\[PowerTools\]" /etc/yum.repos.d/;then

cat > /etc/yum.repos.d/PowerTools.repo <<-EOF

[PowerTools]

name=PowerTools

baseurl=https://${URL}/centos/\$releasever/PowerTools/\$basearch/os/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-centosofficial

EOF

fi

fi

if [[ ${OS_RELEASE_VERSION} == 8 ]] &> /dev/null;then

yum -y install make gcc ipvsadm autoconf automake openssl-devel libnl3-devel iptables-devel ipset-devel file-devel net-snmp-devel glib2-devel pcre2-devel libnftnl-devel libmnl-devel systemd-devel &> /dev/null

elif [[ ${OS_RELEASE_VERSION} == 7 ]] &> /dev/null;then

yum -y install make gcc libnfnetlink-devel libnfnetlink ipvsadm libnl libnl-devel libnl3 libnl3-devel lm_sensors-libs net-snmp-agent-libs net-snmp-libs openssh-server openssh-clients openssl openssl-devel automake iproute &> /dev/null

elif [[ ${OS_RELEASE_VERSION} == 20 ]] &> /dev/null;then

apt update &> /dev/null;apt -y install make gcc ipvsadm build-essential pkg-config automake autoconf libipset-dev libnl-3-dev libnl-genl-3-dev libssl-dev libxtables-dev libip4tc-dev libip6tc-dev libipset-dev libmagic-dev libsnmp-dev libglib2.0-dev libpcre2-dev libnftnl-dev libmnl-dev libsystemd-dev

else

apt update &> /dev/null;apt -y install make gcc ipvsadm build-essential pkg-config automake autoconf iptables-dev libipset-dev libnl-3-dev libnl-genl-3-dev libssl-dev libxtables-dev libip4tc-dev libip6tc-dev libipset-dev libmagic-dev libsnmp-dev libglib2.0-dev libpcre2-dev libnftnl-dev libmnl-dev libsystemd-dev &> /dev/null

fi

tar xf ${KEEPALIVED_FILE}

KEEPALIVED_DIR=`echo ${KEEPALIVED_FILE} | sed -nr 's/^(.*[0-9]).([[:lower:]]).*/\1/p'`

cd ${KEEPALIVED_DIR}

./configure --prefix=${KEEPALIVED_INSTALL_DIR} --disable-fwmark

make -j $CPUS && make install

[ $? -eq 0 ] && ${COLOR}"Keepalived编译安装成功"${END} || { ${COLOR}"Keepalived编译安装失败,退出!"${END};exit; }

[ -d /etc/keepalived ] || mkdir -p /etc/keepalived &> /dev/null

cat > /etc/keepalived/keepalived.conf <<EOF

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script check_haproxy {

script "/etc/keepalived/check_haproxy.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state ${STATE}

interface ${NET_NAME}

virtual_router_id 51

priority ${PRIORITY}

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

${VIP} dev ${NET_NAME} label ${NET_NAME}:1

}

track_script {

check_haproxy

}

}

EOF

cp ./keepalived/keepalived.service /lib/systemd/system/

cd ${SRC_DIR}

mv check_haproxy.sh /etc/keepalived/check_haproxy.sh

chmod +x /etc/keepalived/check_haproxy.sh

echo "PATH=${KEEPALIVED_INSTALL_DIR}/sbin:\${PATH}" > /etc/profile.d/keepalived.sh

systemctl daemon-reload

systemctl enable --now keepalived &> /dev/null

systemctl is-active keepalived &> /dev/null && ${COLOR}"Keepalived 服务启动成功!"${END} || { ${COLOR}"Keepalived 启动失败,退出!"${END} ; exit; }

${COLOR}"Keepalived安装完成"${END}

}

main(){

os

check_file

install_keepalived

}

main

[root@k8s-ha02 ~]# bash install_keepalived_backup.sh
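
The keepalived.conf generated above tracks the HAProxy check script with `weight -5`, and the BACKUP node uses priority 90. With a negative weight, keepalived lowers a node's priority by that amount while the tracked script is failing. With a positive weight, it raises the priority while the script succeeds. The arithmetic can be sketched as follows. The helper name `effective_priority` is hypothetical, and the MASTER priority of 100 is an assumption, since the MASTER value is not shown in this section.

```shell
# effective_priority BASE WEIGHT STATUS
# STATUS is the result of the tracked script: "ok" or "fail".
effective_priority() {
    base=$1; weight=$2; status=$3
    if [ "$weight" -lt 0 ] && [ "$status" = fail ]; then
        echo $((base + weight))      # negative weight: penalty on failure
    elif [ "$weight" -gt 0 ] && [ "$status" = ok ]; then
        echo $((base + weight))      # positive weight: bonus on success
    else
        echo "$base"
    fi
}

effective_priority 100 -5 fail   # assumed MASTER priority 100, check failing
effective_priority 90 -5 ok      # BACKUP priority 90 from the script above
```

Under these assumed numbers the MASTER drops to 95, which is still above the BACKUP's 90, so the weight alone would not move the VIP. Failover then depends on what check_haproxy.sh itself does (for example stopping keepalived), or on choosing priorities that differ by less than the weight.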

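3.2.3 Test access

Add the following entry to the hosts file of the client machine so that the domain name resolves to the VIP:

172.31.3.188 kubeapi.raymonds.cc

Verify in a browser (username and password: admin:123456):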

http://kubeapi.raymonds.cc:9999/haproxy-status
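
The hosts mapping for kubeapi.raymonds.cc can also be added from a script without creating duplicates on repeated runs. This is a minimal sketch: the helper name `ensure_hosts_entry` is hypothetical, and the demo writes to a temporary file instead of /etc/hosts.

```shell
# ensure_hosts_entry IP NAME [FILE]: append "IP NAME" unless NAME is already
# mapped on a non-comment line of FILE (default /etc/hosts).
ensure_hosts_entry() {
    ip=$1; name=$2; file=${3:-/etc/hosts}
    if awk -v n="$name" '
        !/^[ \t]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demo against a scratch file; the second call changes nothing.
hosts=$(mktemp)
ensure_hosts_entry 172.31.3.188 kubeapi.raymonds.cc "$hosts"
ensure_hosts_entry 172.31.3.188 kubeapi.raymonds.cc "$hosts"
cat "$hosts"
```

Once the name resolves, the status page can also be fetched from the command line with `curl -u admin:123456 http://kubeapi.raymonds.cc:9999/haproxy-status`.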

3.3 Install Harbor

3.3.1 Install Harbor

Install Harbor on harbor01 and harbor02:

[root@k8s-harbor01 ~]# cat install_docker_compose_harbor.sh

#!/bin/bash

#

#**************************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2021-12-16

#FileName: install_docker_compose_harbor.sh

#URL: raymond.blog.csdn.net

#Description: install_docker_compose_harbor for CentOS 7/8 & Ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2021 All rights reserved

#**************************************************************************************************

SRC_DIR=/usr/local/src

COLOR="echo -e \\033[01;31m"

END='\033[0m'

DOCKER_VERSION=20.10.17

URL='mirrors.cloud.tencent.com'

#docker-compose download URL: https://github.com/docker/compose/releases/download/v2.10.2/docker-compose-linux-x86_64

DOCKER_COMPOSE_FILE=docker-compose-linux-x86_64

#Harbor download URL: https://github.com/goharbor/harbor/releases/download/v2.6.0/harbor-offline-installer-v2.6.0.tgz

HARBOR_FILE=harbor-offline-installer-v

HARBOR_VERSION=2.6.0

TAR=.tgz

HARBOR_INSTALL_DIR=/apps

HARBOR_DOMAIN=harbor.raymonds.cc

NET_NAME=`ip addr |awk -F"[: ]" '/^2: e.*/{print $3}'`

IP=`ip addr show ${NET_NAME}| awk -F" +|/" '/global/{print $3}'`

HARBOR_ADMIN_PASSWORD=123456

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

OS_RELEASE_VERSION=`sed -rn '/^VERSION_ID=/s@.*="?([0-9]+)\.?.*"?@\1@p' /etc/os-release`

}

check_file (){

cd ${SRC_DIR}

if [ ! -e ${DOCKER_COMPOSE_FILE} ];then

${COLOR}"缺少${DOCKER_COMPOSE_FILE}文件,请把文件放到${SRC_DIR}目录下"${END}

exit

elif [ ! -e ${HARBOR_FILE}${HARBOR_VERSION}${TAR} ];then

${COLOR}"缺少${HARBOR_FILE}${HARBOR_VERSION}${TAR}文件,请把文件放到${SRC_DIR}目录下"${END}

exit

else

${COLOR}"相关文件已准备好"${END}

fi

}

ubuntu_install_docker(){

${COLOR}"开始安装DOCKER依赖包"${END}

apt update &> /dev/null

apt -y install apt-transport-https ca-certificates curl software-properties-common &> /dev/null

curl -fsSL https://${URL}/docker-ce/linux/ubuntu/gpg | sudo apt-key add - &> /dev/null

add-apt-repository "deb [arch=amd64] https://${URL}/docker-ce/linux/ubuntu $(lsb_release -cs) stable" &> /dev/null

apt update &> /dev/null

${COLOR}"Docker有以下版本"${END}

apt-cache madison docker-ce

${COLOR}"10秒后即将安装:Docker-"${DOCKER_VERSION}"版本......"${END}

${COLOR}"如果想安装其它Docker版本,请按Ctrl+c键退出,修改版本再执行"${END}

sleep 10

${COLOR}"开始安装DOCKER"${END}

apt -y install docker-ce=5:${DOCKER_VERSION}~3-0~ubuntu-$(lsb_release -cs) docker-ce-cli=5:${DOCKER_VERSION}~3-0~ubuntu-$(lsb_release -cs) &> /dev/null || { ${COLOR}"apt源失败,请检查apt配置"${END};exit; }

}

centos_install_docker(){

${COLOR}"开始安装DOCKER依赖包"${END}

yum -y install yum-utils &> /dev/null

yum-config-manager --add-repo https://${URL}/docker-ce/linux/centos/docker-ce.repo &> /dev/null

yum clean all &> /dev/null

yum makecache &> /dev/null

${COLOR}"Docker有以下版本"${END}

yum list docker-ce.x86_64 --showduplicates

${COLOR}"10秒后即将安装:Docker-"${DOCKER_VERSION}"版本......"${END}

${COLOR}"如果想安装其它Docker版本,请按Ctrl+c键退出,修改版本再执行"${END}

sleep 10

${COLOR}"开始安装DOCKER"${END}

yum -y install docker-ce-${DOCKER_VERSION} docker-ce-cli-${DOCKER_VERSION} &> /dev/null || { ${COLOR}"yum源失败,请检查yum配置"${END};exit; }

}

mirror_accelerator(){

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<-EOF

{

"registry-mirrors": [

"https://registry.docker-cn.com",

"http://hub-mirror.c.163.com",

"https://docker.mirrors.ustc.edu.cn"

],

"insecure-registries": ["${HARBOR_DOMAIN}"],

"exec-opts": ["native.cgroupdriver=systemd"],

"max-concurrent-downloads": 10,

"max-concurrent-uploads": 5,

"log-opts": {

"max-size": "300m",

"max-file": "2"

},

"live-restore": true

}

EOF

systemctl daemon-reload

systemctl enable --now docker

systemctl is-active docker &> /dev/null && ${COLOR}"Docker 服务启动成功"${END} || { ${COLOR}"Docker 启动失败"${END};exit; }

docker version && ${COLOR}"Docker 安装成功"${END} || ${COLOR}"Docker 安装失败"${END}

}

set_alias(){

echo 'alias rmi="docker images -qa|xargs docker rmi -f"' >> ~/.bashrc

echo 'alias rmc="docker ps -qa|xargs docker rm -f"' >> ~/.bashrc

}

install_docker_compose(){

${COLOR}"开始安装 Docker compose....."${END}

sleep 1

mv ${SRC_DIR}/${DOCKER_COMPOSE_FILE} /usr/bin/docker-compose

chmod +x /usr/bin/docker-compose

docker-compose --version && ${COLOR}"Docker Compose 安装完成"${END} || ${COLOR}"Docker compose 安装失败"${END}

}

install_harbor(){

${COLOR}"开始安装 Harbor....."${END}

sleep 1

[ -d ${HARBOR_INSTALL_DIR} ] || mkdir ${HARBOR_INSTALL_DIR}

tar xf ${SRC_DIR}/${HARBOR_FILE}${HARBOR_VERSION}${TAR} -C ${HARBOR_INSTALL_DIR}/

mv ${HARBOR_INSTALL_DIR}/harbor/harbor.yml.tmpl ${HARBOR_INSTALL_DIR}/harbor/harbor.yml

sed -ri.bak -e 's/^(hostname:) .*/\1 '${IP}'/' -e 's/^(harbor_admin_password:) .*/\1 '${HARBOR_ADMIN_PASSWORD}'/' -e 's/^(https:)/#\1/' -e 's/ (port: 443)/# \1/' -e 's@ (certificate: .*)@# \1@' -e 's@ (private_key: .*)@# \1@' ${HARBOR_INSTALL_DIR}/harbor/harbor.yml

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

if [ ${OS_RELEASE_VERSION} == "8" ];then

yum -y install python3 &> /dev/null || { ${COLOR}"安装软件包失败,请检查网络配置"${END}; exit; }

else

yum -y install python &> /dev/null || { ${COLOR}"安装软件包失败,请检查网络配置"${END}; exit; }

fi

else

apt -y install python3 &> /dev/null || { ${COLOR}"安装软件包失败,请检查网络配置"${END}; exit; }

fi

${HARBOR_INSTALL_DIR}/harbor/install.sh && ${COLOR}"Harbor 安装完成"${END} || ${COLOR}"Harbor 安装失败"${END}

cat > /lib/systemd/system/harbor.service <<-EOF

[Unit]

Description=Harbor

After=docker.service systemd-networkd.service systemd-resolved.service

Requires=docker.service

Documentation=http://github.com/vmware/harbor

[Service]

Type=simple

Restart=on-failure

RestartSec=5

ExecStart=/usr/bin/docker-compose -f ${HARBOR_INSTALL_DIR}/harbor/docker-compose.yml up

ExecStop=/usr/bin/docker-compose -f ${HARBOR_INSTALL_DIR}/harbor/docker-compose.yml down

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable harbor &>/dev/null && ${COLOR}"Harbor已配置为开机自动启动"${END}

}

set_swap_limit(){

if [ ${OS_ID} == "Ubuntu" ];then

${COLOR}'设置Docker的"WARNING: No swap limit support"警告'${END}

sed -ri '/^GRUB_CMDLINE_LINUX=/s@"$@ swapaccount=1"@' /etc/default/grub

update-grub &> /dev/null

${COLOR}"10秒后,机器会自动重启"${END}

sleep 10

reboot

fi

}

main(){

os

check_file

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ] &> /dev/null;then

rpm -q docker-ce &> /dev/null && ${COLOR}"Docker已安装"${END} || centos_install_docker

else

dpkg -s docker-ce &>/dev/null && ${COLOR}"Docker已安装"${END} || ubuntu_install_docker

fi

[ -f /etc/docker/daemon.json ] &>/dev/null && ${COLOR}"Docker镜像加速器已设置"${END} || mirror_accelerator

grep -Eqoi "(.*rmi=|.*rmc=)" ~/.bashrc && ${COLOR}"Docker别名已设置"${END} || set_alias

docker-compose --version &> /dev/null && ${COLOR}"Docker Compose已安装"${END} || install_docker_compose

systemctl is-active harbor &> /dev/null && ${COLOR}"Harbor已安装"${END} || install_harbor

grep -q "swapaccount=1" /etc/default/grub && ${COLOR}'"WARNING: No swap limit support"警告,已设置'${END} || set_swap_limit

}

main

[root@k8s-harbor01 ~]# bash install_docker_compose_harbor.sh

[root@k8s-harbor02 ~]# bash install_docker_compose_harbor.sh

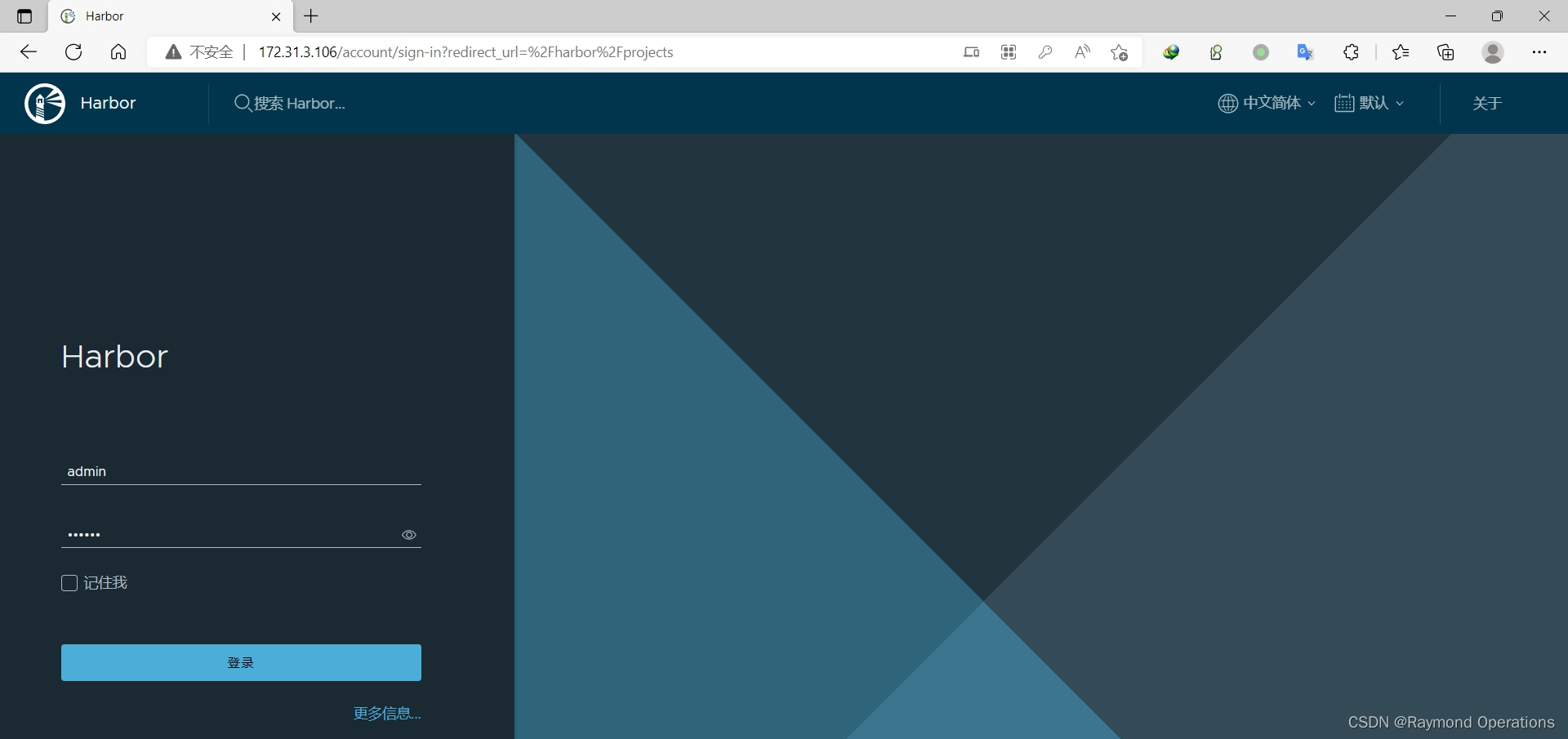

3.3.2 创建harbor仓库

在harbor01新建项目google_containers

http://172.31.3.106/

用户名:admin 密码:123456

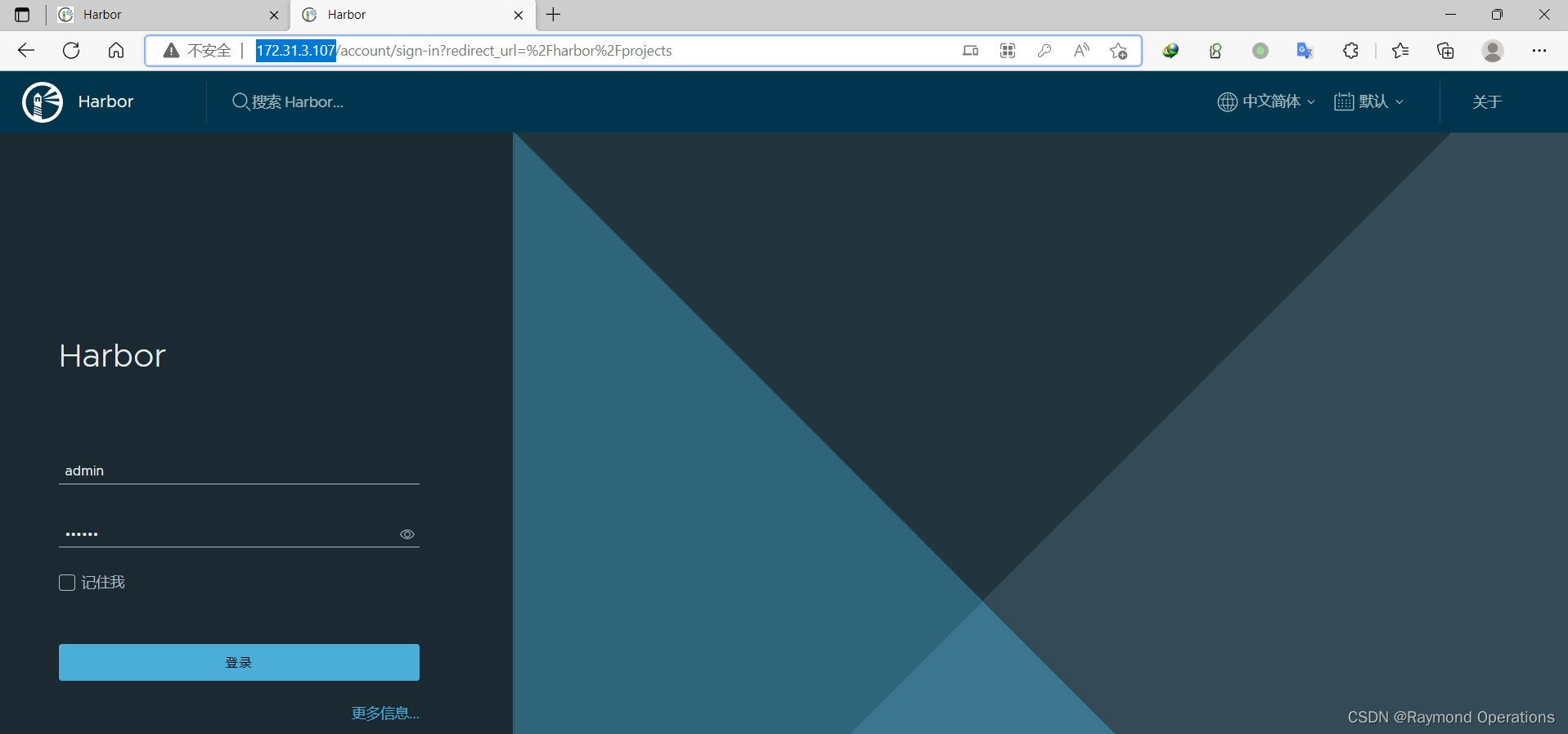

在harbor02新建项目google_containers

http://172.31.3.107/

在harbor02上新建目标

在harbor02上新建规则

在harbor01上新建目标

在harbor01上新建规则
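
Images stored in the google_containers project are addressed as `<registry>/google_containers/<name>:<tag>`. The following sketch derives that reference from an upstream image name. The helper name `to_harbor_ref` is hypothetical. The default registry is the HARBOR_DOMAIN value from the install script above.

```shell
# to_harbor_ref IMAGE [REGISTRY] [PROJECT]: keep only "name:tag" of IMAGE and
# prefix it with the private registry and project.
to_harbor_ref() {
    image=$1
    registry=${2:-harbor.raymonds.cc}
    project=${3:-google_containers}
    echo "${registry}/${project}/${image##*/}"
}

src=registry.aliyuncs.com/google_containers/pause:3.8
dst=$(to_harbor_ref "$src")
echo "$dst"

# On a host with Docker and access to both registries (not run here):
#   docker pull "$src" && docker tag "$src" "$dst" && docker push "$dst"
```

With the replication rules in place, an image pushed to one Harbor instance should be copied to the other.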

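3.4 Install Containerd

3.4.1 Kernel parameter tuning

Installing Docker configures the following kernel parameters automatically, so no manual step is needed.

With Containerd, they have to be configured by hand.

To let iptables inspect bridged traffic, the br_netfilter module must be loaded. To load it explicitly, run modprobe br_netfilter.

For iptables on a Linux node to see bridged traffic correctly, also make sure net.bridge.bridge-nf-call-iptables is set to 1.

Configure the kernel modules Containerd needs: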

[root@k8s-master01 ~]# cat <<EOF | sudo tee /etc/modules-load.d/containerd.conf

overlay

br_netfilter

EOF

Load the modules:

[root@k8s-master01 ~]# modprobe -- overlay

[root@k8s-master01 ~]# modprobe -- br_netfilter

Configure the kernel parameters Containerd needs:

[root@k8s-master01 ~]# cat <<EOF | sudo tee /etc/sysctl.d/99-kubernetes-cri.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

Apply the kernel parameters:

[root@k8s-master01 ~]# sysctl --system
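
After `sysctl --system`, the three parameters can be read back to confirm they took effect. This is a minimal sketch: the helper names `sysctl_path` and `check_sysctl` are hypothetical, and the optional ROOT argument exists only so the helpers can be exercised against a scratch directory.

```shell
# sysctl_path KEY [ROOT]: map a dotted sysctl key to its file under ROOT.
sysctl_path() {
    echo "${2:-/proc/sys}/$(echo "$1" | tr . /)"
}

# check_sysctl KEY EXPECTED [ROOT]: succeed only if the current value matches.
check_sysctl() {
    path=$(sysctl_path "$1" "${3:-}")
    [ -r "$path" ] && [ "$(cat "$path")" = "$2" ]
}

for key in net.bridge.bridge-nf-call-iptables \
           net.ipv4.ip_forward \
           net.bridge.bridge-nf-call-ip6tables; do
    if check_sysctl "$key" 1; then
        echo "$key = 1"
    else
        echo "$key is not 1 (or br_netfilter is not loaded)"
    fi
done
```

The net.bridge.* files only exist once br_netfilter is loaded, which is why a missing file is reported together with a wrong value.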

3.4.2 Install Containerd from binaries

Official download link:

https://github.com/containerd/containerd

Containerd ships three kinds of binary packages:

- containerd-xxx: does not include runC, which must be installed separately

[root@k8s-master01 ~]# tar tf containerd-1.6.8-linux-amd64.tar.gz

bin/

bin/containerd-stress

bin/containerd-shim-runc-v2

bin/containerd-shim

bin/ctr

bin/containerd

bin/containerd-shim-runc-v1

- cri-containerd-xxx: includes runC, ctr, crictl, the systemd unit file and related files, but no CNI plugins. Kubernetes does not need containerd's CNI plugins, so this is the package installed here

[root@k8s-master01 ~]# tar tf cri-containerd-1.6.8-linux-amd64.tar.gz

etc/crictl.yaml

etc/systemd/

etc/systemd/system/

etc/systemd/system/containerd.service

usr/

usr/local/

usr/local/sbin/

usr/local/sbin/runc

usr/local/bin/

usr/local/bin/containerd-stress

usr/local/bin/containerd-shim-runc-v2

usr/local/bin/containerd-shim

usr/local/bin/ctr

usr/local/bin/containerd

usr/local/bin/critest

usr/local/bin/ctd-decoder

usr/local/bin/crictl

usr/local/bin/containerd-shim-runc-v1

opt/containerd/

opt/containerd/cluster/

opt/containerd/cluster/gce/

opt/containerd/cluster/gce/env

opt/containerd/cluster/gce/cloud-init/

opt/containerd/cluster/gce/cloud-init/master.yaml

opt/containerd/cluster/gce/cloud-init/node.yaml

opt/containerd/cluster/gce/cni.template

opt/containerd/cluster/gce/configure.sh

opt/containerd/cluster/version

- cri-containerd-cni-xxx: includes runc, ctr, crictl, the CNI plugins, the systemd unit file and related files

[root@k8s-master01 ~]# tar tf cri-containerd-cni-1.6.8-linux-amd64.tar.gz

etc/

etc/crictl.yaml

etc/systemd/

etc/systemd/system/

etc/systemd/system/containerd.service

etc/cni/

etc/cni/net.d/

etc/cni/net.d/10-containerd-net.conflist

usr/

usr/local/

usr/local/sbin/

usr/local/sbin/runc

usr/local/bin/

usr/local/bin/containerd-stress

usr/local/bin/containerd-shim-runc-v2

usr/local/bin/containerd-shim

usr/local/bin/ctr

usr/local/bin/containerd

usr/local/bin/critest

usr/local/bin/ctd-decoder

usr/local/bin/crictl

usr/local/bin/containerd-shim-runc-v1

opt/

opt/containerd/

opt/containerd/cluster/

opt/containerd/cluster/gce/

opt/containerd/cluster/gce/env

opt/containerd/cluster/gce/cloud-init/

opt/containerd/cluster/gce/cloud-init/master.yaml

opt/containerd/cluster/gce/cloud-init/node.yaml

opt/containerd/cluster/gce/cni.template

opt/containerd/cluster/gce/configure.sh

opt/containerd/cluster/version

opt/cni/

opt/cni/bin/

opt/cni/bin/bandwidth

opt/cni/bin/loopback

opt/cni/bin/ipvlan

opt/cni/bin/host-local

opt/cni/bin/static

opt/cni/bin/vlan

opt/cni/bin/tuning

opt/cni/bin/host-device

opt/cni/bin/firewall

opt/cni/bin/portmap

opt/cni/bin/sbr

opt/cni/bin/macvlan

opt/cni/bin/bridge

opt/cni/bin/dhcp

opt/cni/bin/ptp

opt/cni/bin/vrf

Install Containerd:

[root@k8s-master01 ~]# wget https://github.com/containerd/containerd/releases/download/v1.6.8/cri-containerd-1.6.8-linux-amd64.tar.gz

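#The cri-containerd-1.6.8-linux-amd64.tar.gz archive is already laid out in the directory structure recommended for the official binary deployment. It contains the systemd unit file plus containerd, ctr, crictl and the other deployment files. Extract it into the root directory /: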

[root@k8s-master01 ~]# tar xf cri-containerd-1.6.8-linux-amd64.tar.gz -C /

Generate the Containerd configuration file:

[root@k8s-master01 ~]# mkdir -p /etc/containerd

[root@k8s-master01 ~]# containerd config default | tee /etc/containerd/config.toml

Switch Containerd's cgroup driver to systemd and point sandbox_image at the private Harbor registry (or at the Aliyun google_containers mirror if there is no private registry):

[root@k8s-master01 ~]# vim /etc/containerd/config.toml

...

[plugins."io.containerd.grpc.v1.cri".containerd.runtimes.runc.options]

...

SystemdCgroup = true #change SystemdCgroup to true

...

sandbox_image = "harbor.raymonds.cc/google_containers/pause:3.8" #point sandbox_image at the private registry "harbor.raymonds.cc/google_containers/pause:3.8"; without a private registry, use the Aliyun mirror "registry.aliyuncs.com/google_containers/pause:3.8"

#Or apply both changes with the following command

sed -ri -e 's/(.*SystemdCgroup = ).*/\1true/' -e 's@(.*sandbox_image = ).*@\1\"harbor.raymonds.cc/google_containers/pause:3.8\"@' /etc/containerd/config.toml

#If there is no Harbor registry, run the following command instead

sed -ri -e 's/(.*SystemdCgroup = ).*/\1true/' -e 's@(.*sandbox_image = ).*@\1\"registry.aliyuncs.com/google_containers/pause:3.8\"@' /etc/containerd/config.toml
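
Before editing /etc/containerd/config.toml in place, the two substitutions can be tried on a scratch copy. This is a minimal sketch: the helper name `patch_containerd_config` is hypothetical, and the sample fragment below is illustrative rather than the full default config.

```shell
# patch_containerd_config FILE IMAGE: apply the SystemdCgroup and
# sandbox_image substitutions to FILE. IMAGE must not contain "@" or "&",
# because "@" is the sed delimiter and "&" is special in the replacement.
patch_containerd_config() {
    tmp=$(mktemp)
    sed -E \
        -e 's/(.*SystemdCgroup = ).*/\1true/' \
        -e "s@(.*sandbox_image = ).*@\\1\"$2\"@" \
        "$1" > "$tmp" && cat "$tmp" > "$1"
    rm -f "$tmp"
}

# Scratch fragment with illustrative values.
cfg=$(mktemp)
cat > "$cfg" <<'EOF'
    sandbox_image = "k8s.gcr.io/pause:3.6"
            SystemdCgroup = false
EOF

patch_containerd_config "$cfg" harbor.raymonds.cc/google_containers/pause:3.8
cat "$cfg"
```

Both lines keep their indentation because the capture group preserves everything up to and including the `= `.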

Configure registry mirrors and the private image registry:

Reference: https://github.com/containerd/cri/blob/master/docs/registry.md

[root@k8s-master01 ~]# vim /etc/containerd/config.toml

...

[plugins."io.containerd.grpc.v1.cri".registry]

...

#The following lines configure credentials for the private registry; skip them if there is no private registry

[plugins."io.containerd.grpc.v1.cri".registry.configs]

[plugins."io.containerd.grpc.v1.cri".registry.configs."harbor.raymonds.cc".tls]

insecure_skip_verify = true

[plugins."io.containerd.grpc.v1.cri".registry.configs."harbor.raymonds.cc".auth]

username = "admin"

password = "123456"

...

[plugins."io.containerd.grpc.v1.cri".registry.mirrors]

#The next two lines configure registry mirrors for docker.io

[plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]

endpoint = ["https://registry.docker-cn.com" ,"http://hub-mirror.c.163.com" ,"https://docker.mirrors.ustc.edu.cn"]

#The next two lines configure the private registry; skip them if there is no private registry

[plugins."io.containerd.grpc.v1.cri".registry.mirrors."harbor.raymonds.cc"]

endpoint = ["http://harbor.raymonds.cc"]

...

#Or apply the changes with the following command

sed -i -e '/.*registry.mirrors.*/a\ [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]\n endpoint = ["https://registry.docker-cn.com" ,"http://hub-mirror.c.163.com" ,"https://docker.mirrors.ustc.edu.cn"]\n [plugins."io.containerd.grpc.v1.cri".registry.mirrors."harbor.raymonds.cc"]\n endpoint = ["http://harbor.raymonds.cc"]' -e '/.*registry.configs.*/a\ [plugins."io.containerd.grpc.v1.cri".registry.configs."harbor.raymonds.cc".tls]\n insecure_skip_verify = true\n [plugins."io.containerd.grpc.v1.cri".registry.configs."harbor.raymonds.cc".auth]\n username = "admin"\n password = "123456"' /etc/containerd/config.toml

#Without Harbor, the private registry settings are not needed and only the registry mirrors are configured; use the following command instead

sed -i '/.*registry.mirrors.*/a\ [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]\n endpoint = ["https://registry.docker-cn.com" ,"http://hub-mirror.c.163.com" ,"https://docker.mirrors.ustc.edu.cn"]' /etc/containerd/config.toml
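
The sed commands above append after every line that matches `registry.mirrors`, so running them a second time would insert the blocks again, this time after the lines added by the first run as well. The following sketch shows one way to guard against that. The helper name `add_docker_mirror` is hypothetical, and it uses awk instead of sed so that only the section header line is matched.

```shell
# add_docker_mirror FILE ENDPOINT: add a docker.io mirror entry below the
# "[...registry.mirrors]" header, unless a docker.io entry already exists.
add_docker_mirror() {
    file=$1; endpoint=$2
    # Already configured: leave the file untouched.
    grep -qF 'registry.mirrors."docker.io"' "$file" && return 0
    tmp=$(mktemp)
    awk -v ep="$endpoint" '
        { print }
        /\.registry\.mirrors\]$/ {
            print "        [plugins.\"io.containerd.grpc.v1.cri\".registry.mirrors.\"docker.io\"]"
            print "          endpoint = [\"" ep "\"]"
        }' "$file" > "$tmp" && cat "$tmp" > "$file"
    rm -f "$tmp"
}

# Demo on a scratch file holding only the section header.
cfg=$(mktemp)
printf '%s\n' '      [plugins."io.containerd.grpc.v1.cri".registry.mirrors]' > "$cfg"
add_docker_mirror "$cfg" https://docker.mirrors.ustc.edu.cn
add_docker_mirror "$cfg" https://docker.mirrors.ustc.edu.cn   # no-op
cat "$cfg"
```

The same guard pattern can be applied to the registry.configs block for the private registry.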

Point the crictl client at the runtime endpoint:

[root@k8s-master01 ~]# cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

Start Containerd and enable it at boot:

[root@k8s-master01 ~]# systemctl daemon-reload && systemctl enable --now containerd

Check the version information:

[root@k8s-master01 ~]# ctr version

Client:

Version: v1.6.8

Revision: 9cd3357b7fd7218e4aec3eae239db1f68a5a6ec6

Go version: go1.17.13

Server:

Version: v1.6.8

Revision: 9cd3357b7fd7218e4aec3eae239db1f68a5a6ec6

UUID: 18d9c9c1-27cc-4883-be10-baf17a186aad

[root@k8s-master01 ~]# crictl version

Version: 0.1.0

RuntimeName: containerd

RuntimeVersion: v1.6.8

RuntimeApiVersion: v1

[root@k8s-master01 ~]# crictl info

...

},

...

"lastCNILoadStatus": "cni config load failed: no network config found in /etc/cni/net.d: cni plugin not initialized: failed to load cni config",

"lastCNILoadStatus.default": "cni config load failed: no network config found in /etc/cni/net.d: cni plugin not initialized: failed to load cni config"

}

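#The CNI error here can be ignored: containerd's own CNI plugins are not installed, Kubernetes does not need them, and installing them would cause conflicts. The flannel or calico CNI plugin is installed later.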

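3.4.3 nerdctl, a client tool for containerd

nerdctl is the recommended client. Its command syntax matches the docker CLI. GitHub download link:

https://github.com/containerd/nerdctl/releases

- Minimal (nerdctl-<VERSION>-linux-amd64.tar.gz): contains only nerdctl

- Full (nerdctl-full-<VERSION>-linux-amd64.tar.gz): also contains dependencies such as containerd, runc, and CNI

nerdctl does not aim merely to copy docker's features. It also implements features docker lacks, such as lazy pulling of images (lazy-pulling) and image encryption (imgcrypt). See the nerdctl project for details.

For lazy pulling, see the article "Containerd 使用 Stargz Snapshotter 延迟拉取镜像" (lazy-pulling images in Containerd with the Stargz Snapshotter):

https://icloudnative.io/posts/startup-containers-in-lightning-speed-with-lazy-image-distribution-on-containerd/

1) Install nerdctl (minimal package):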

[root@k8s-master01 ~]# wget https://github.com/containerd/nerdctl/releases/download/v0.23.0/nerdctl-0.23.0-linux-amd64.tar.gz

[root@k8s-master01 ~]# tar xf nerdctl-0.23.0-linux-amd64.tar.gz -C /usr/local/bin/

#Configure nerdctl

[root@k8s-master01 ~]# mkdir -p /etc/nerdctl/

[root@k8s-master01 ~]# cat > /etc/nerdctl/nerdctl.toml <<EOF

namespace = "k8s.io" #default namespace used by nerdctl

insecure_registry = true #allow insecure (HTTP or unverified TLS) image registries

EOF

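2) Install buildkit to support image builds:

buildkit on GitHub:

https://github.com/moby/buildkit

The minimal nerdctl package cannot build images through containerd on its own. It has to be combined with buildkit to build images. Alternatively, install the full nerdctl package described above. buildkit is a build toolkit open-sourced by Docker, Inc. that builds OCI-compliant images. It consists mainly of the following parts:

- The server, buildkitd, which currently supports runc and containerd as workers, with runc as the default;

- The client, buildctl, which parses the Dockerfile and sends build requests to the buildkitd server.

buildkit is a typical client/server architecture, and the client and server do not have to run on the same host. nerdctl can also act as a buildkitd client when building images.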

[root@k8s-master01 ~]# wget https://github.com/moby/buildkit/releases/download/v0.10.4/buildkit-v0.10.4.linux-amd64.tar.gz

[root@k8s-master01 ~]# tar xf buildkit-v0.10.4.linux-amd64.tar.gz -C /usr/local/

Configure the buildkit systemd unit files, which can be downloaded from:

https://github.com/moby/buildkit/tree/master/examples/systemd

buildkit needs two unit files:

/usr/lib/systemd/system/buildkit.socket

[root@k8s-master01 ~]# cat > /usr/lib/systemd/system/buildkit.socket <<EOF

[Unit]

Description=BuildKit

Documentation=https://github.com/moby/buildkit

[Socket]

ListenStream=%t/buildkit/buildkitd.sock

SocketMode=0660

[Install]

WantedBy=sockets.target

EOF

/usr/lib/systemd/system/buildkit.service

[root@k8s-master01 ~]# cat > /usr/lib/systemd/system/buildkit.service << EOF

[Unit]

Description=BuildKit

Requires=buildkit.socket

After=buildkit.socket

Documentation=https://github.com/moby/buildkit

[Service]

Type=notify

ExecStart=/usr/local/bin/buildkitd --addr fd://

[Install]

WantedBy=multi-user.target

EOF

Start buildkit:

[root@k8s-master01 ~]# systemctl daemon-reload && systemctl enable --now buildkit

root@k8s-master01:~# systemctl status buildkit

● buildkit.service - BuildKit

Loaded: loaded (/lib/systemd/system/buildkit.service; enabled; vendor preset: enabled)

Active: active (running) since Tue 2022-09-13 16:47:14 CST; 21s ago

TriggeredBy: ● buildkit.socket

Docs: https://github.com/moby/buildkit

Main PID: 3303 (buildkitd)

Tasks: 7 (limit: 4575)

Memory: 14.5M

CGroup: /system.slice/buildkit.service

└─3303 /usr/local/bin/buildkitd --addr fd://

Sep 13 16:47:14 k8s-master01.example.local systemd[1]: Started BuildKit.

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=info msg="auto snapshotter: using overlayfs"

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=warning msg="using host network as the defa>

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=info msg="found worker \"sgqr1t2c81tj7ec7w3>

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=warning msg="using host network as the defa>

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=info msg="found worker \"w4fzprdjtuqtj3f3wd>

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=info msg="found 2 workers, default=\"sgqr1t>

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=warning msg="currently, only the default wo>

Sep 13 16:47:14 k8s-master01.example.local buildkitd[3303]: time="2022-09-13T16:47:14+08:00" level=info msg="running server on /run/buildkit/b>

[root@k8s-master01 ~]# nerdctl version

Client:

Version: v0.23.0

OS/Arch: linux/amd64

Git commit: 660680b7ddfde1d38a66ec1c7f08f8d89ab92c68

buildctl:

Version: v0.10.4

GitCommit: a2ba6869363812a210fcc3ded6926757ab780b5f

Server:

containerd:

Version: v1.6.8

GitCommit: 9cd3357b7fd7218e4aec3eae239db1f68a5a6ec6

runc:

Version: 1.1.3

GitCommit: v1.1.3-0-g6724737f

[root@k8s-master01 ~]# buildctl --version

buildctl github.com/moby/buildkit v0.10.4 a2ba6869363812a210fcc3ded6926757ab780b5f
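
With buildkitd running, nerdctl can act as its client to build an image. This is a minimal sketch, not part of the original walkthrough. The helper name `make_demo_context`, the busybox base image and the image tag are all illustrative assumptions. The build step is skipped when nerdctl or the buildkitd socket is missing.

```shell
# make_demo_context DIR BASE_IMAGE: write a two-line Dockerfile into DIR.
make_demo_context() {
    mkdir -p "$1"
    printf 'FROM %s\nCMD ["echo", "built with buildkit"]\n' "$2" > "$1/Dockerfile"
}

ctx=$(mktemp -d)
make_demo_context "$ctx" busybox
tag=harbor.raymonds.cc/google_containers/demo:v1   # hypothetical image name

# Build only when nerdctl and the buildkitd socket are both available.
if command -v nerdctl > /dev/null 2>&1 && [ -S /run/buildkit/buildkitd.sock ]; then
    nerdctl build -t "$tag" "$ctx"
else
    echo "nerdctl or buildkitd not available, skipping build of $tag"
fi
```

After a successful build, `nerdctl images` should list the new tag, and `nerdctl push` can upload it to the private registry.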

[root@k8s-master01 ~]# nerdctl info

Client:

Namespace: default

Debug Mode: false

Server:

Server Version: v1.6.8

Storage Driver: overlayfs

Logging Driver: json-file

Cgroup Driver: cgroupfs

Cgroup Version: 1

Plugins:

Log: fluentd journald json-file

Storage: aufs native overlayfs

Security Options:

apparmor

seccomp

Profile: default

Kernel Version: 5.4.0-107-generic

Operating System: Ubuntu 20.04.4 LTS

OSType: linux

Architecture: x86_64

CPUs: 2

Total Memory: 3.81GiB

Name: k8s-master01.example.local

ID: ab901e55-fa37-496e-9920-ee6eff687687

WARNING: No swap limit support #system warning (swap resource limiting is not enabled)

Fix the swap warning above:

#The swap warning only appears on Ubuntu; CentOS does not show it and needs no change

root@k8s-master01:~# sed -ri '/^GRUB_CMDLINE_LINUX=/s@"$@ swapaccount=1"@' /etc/default/grub

root@k8s-master01:~# update-grub

root@k8s-master01:~# reboot

root@k8s-master01:~# nerdctl info

Client:

Namespace: default

Debug Mode: false

Server:

Server Version: v1.6.8

Storage Driver: overlayfs

Logging Driver: json-file

Cgroup Driver: cgroupfs

Cgroup Version: 1

Plugins:

Log: fluentd journald json-file

Storage: aufs native overlayfs

Security Options:

apparmor

seccomp

Profile: default

Kernel Version: 5.4.0-125-generic

Operating System: Ubuntu 20.04.4 LTS

OSType: linux

Architecture: x86_64

CPUs: 2

Total Memory: 3.81GiB

Name: k8s-master01.example.local

ID: ab901e55-fa37-496e-9920-ee6eff687687

#The swap warning is now gone

nerdctl command completion:

#CentOS

[root@k8s-master01 ~]# yum -y install bash-completion

#Ubuntu

[root@k8s-master01 ~]# apt -y install bash-completion

[root@k8s-master01 ~]# echo "source <(nerdctl completion bash)" >> ~/.bashrc

Install Containerd on master02, master03 and the worker nodes:

[root@k8s-master02 ~]# cat install_containerd_binary.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2022-09-13

#FileName: install_containerd_binary.sh

#URL: raymond.blog.csdn.net

#Description: install_containerd_binary for centos 7/8 & ubuntu 18.04/20.04 & Rocky 8

#Copyright (C): 2022 All rights reserved

#*********************************************************************************************

SRC_DIR=/usr/local/src

COLOR="echo -e \\033[01;31m"

END='\033[0m'

#Containerd download URL: https://github.com/containerd/containerd/releases/download/v1.6.8/cri-containerd-1.6.8-linux-amd64.tar.gz

CONTAINERD_FILE=cri-containerd-1.6.8-linux-amd64.tar.gz

PAUSE_VERSION=3.8

HARBOR_DOMAIN=harbor.raymonds.cc

USERNAME=admin

PASSWORD=123456

#nerdctl download URL: https://github.com/containerd/nerdctl/releases/download/v0.23.0/nerdctl-0.23.0-linux-amd64.tar.gz

NETDCTL_FILE=nerdctl-0.23.0-linux-amd64.tar.gz

#Buildkit download URL: https://github.com/moby/buildkit/releases/download/v0.10.4/buildkit-v0.10.4.linux-amd64.tar.gz

BUILDKIT_FILE=buildkit-v0.10.4.linux-amd64.tar.gz

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

}

check_file (){

cd ${SRC_DIR}

if [ ! -e ${CONTAINERD_FILE} ];then

${COLOR}"缺少${CONTAINERD_FILE}文件,请把文件放到${SRC_DIR}目录下"${END}

exit

elif [ ! -e ${NETDCTL_FILE} ];then

${COLOR}"缺少${NETDCTL_FILE}文件,请把文件放到${SRC_DIR}目录下"${END}

exit

elif [ ! -e ${BUILDKIT_FILE} ];then

${COLOR}"缺少${BUILDKIT_FILE}文件,请把文件放到${SRC_DIR}目录下"${END}

exit

else

${COLOR}"相关文件已准备好"${END}

fi

}

install_containerd(){

[ -f /usr/local/bin/containerd ] && { ${COLOR}"Containerd已存在,安装失败"${END};exit; }

cat > /etc/modules-load.d/containerd.conf <<-EOF

overlay

br_netfilter

EOF

modprobe -- overlay

modprobe -- br_netfilter

cat > /etc/sysctl.d/99-kubernetes-cri.conf <<-EOF

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

sysctl --system &> /dev/null

${COLOR}"开始安装Containerd..."${END}

tar xf ${CONTAINERD_FILE} -C /

mkdir -p /etc/containerd

containerd config default | tee /etc/containerd/config.toml &> /dev/null

sed -ri -e 's/(.*SystemdCgroup = ).*/\1true/' -e 's@(.*sandbox_image = ).*@\1\"'''${HARBOR_DOMAIN}'''/google_containers/pause:'''${PAUSE_VERSION}'''\"@' -e '/.*registry.mirrors.*/a\ [plugins."io.containerd.grpc.v1.cri".registry.mirrors."docker.io"]\n endpoint = ["https://registry.docker-cn.com" ,"http://hub-mirror.c.163.com" ,"https://docker.mirrors.ustc.edu.cn"]\n [plugins."io.containerd.grpc.v1.cri".registry.mirrors."'''${HARBOR_DOMAIN}'''"]\n endpoint = ["http://'''${HARBOR_DOMAIN}'''"]' -e '/.*registry.configs.*/a\ [plugins."io.containerd.grpc.v1.cri".registry.configs."'''${HARBOR_DOMAIN}'''".tls]\n insecure_skip_verify = true\n [plugins."io.containerd.grpc.v1.cri".registry.configs."'''${HARBOR_DOMAIN}'''".auth]\n username = "'''${USERNAME}'''"\n password = "'''${PASSWORD}'''"' /etc/containerd/config.toml

cat > /etc/crictl.yaml <<-EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

systemctl daemon-reload && systemctl enable --now containerd &> /dev/null

systemctl is-active containerd &> /dev/null && ${COLOR}"Containerd service started successfully"${END} || { ${COLOR}"Containerd failed to start"${END};exit 1; }

ctr version && ${COLOR}"Containerd installed successfully"${END} || ${COLOR}"Containerd installation failed"${END}

}

set_alias(){

echo 'alias rmi="nerdctl images -qa|xargs nerdctl rmi -f"' >> ~/.bashrc

echo 'alias rmc="nerdctl ps -qa|xargs nerdctl rm -f"' >> ~/.bashrc

}

install_netdctl_buildkit(){

${COLOR}"Installing nerdctl..."${END}

tar xf ${NETDCTL_FILE} -C /usr/local/bin/

mkdir -p /etc/nerdctl/

cat > /etc/nerdctl/nerdctl.toml <<-EOF

namespace = "k8s.io"

insecure_registry = true

EOF

${COLOR}"Installing Buildkit..."${END}

tar xf ${BUILDKIT_FILE} -C /usr/local/

cat > /usr/lib/systemd/system/buildkit.socket <<-EOF

[Unit]

Description=BuildKit

Documentation=https://github.com/moby/buildkit

[Socket]

ListenStream=%t/buildkit/buildkitd.sock

SocketMode=0660

[Install]

WantedBy=sockets.target

EOF

cat > /usr/lib/systemd/system/buildkit.service <<-EOF

[Unit]

Description=BuildKit

Requires=buildkit.socket

After=buildkit.socket

Documentation=https://github.com/moby/buildkit

[Service]

Type=notify

ExecStart=/usr/local/bin/buildkitd --addr fd://

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload && systemctl enable --now buildkit &> /dev/null

systemctl is-active buildkit &> /dev/null && ${COLOR}"Buildkit service started successfully"${END} || { ${COLOR}"Buildkit failed to start"${END};exit 1; }

buildctl --version && ${COLOR}"Buildkit installed successfully"${END} || ${COLOR}"Buildkit installation failed"${END}

}

nerdctl_command_completion(){

if [ ${OS_ID} == "CentOS" -o ${OS_ID} == "Rocky" ];then

yum -y install bash-completion

else

apt -y install bash-completion

fi

echo "source <(nerdctl completion bash)" >> ~/.bashrc

. ~/.bashrc

}

set_swap_limit(){

if [ ${OS_ID} == "Ubuntu" ];then

${COLOR}'Fixing the Docker "WARNING: No swap limit support" warning'${END}

sed -ri '/^GRUB_CMDLINE_LINUX=/s@"$@ swapaccount=1"@' /etc/default/grub

update-grub &> /dev/null

${COLOR}"The machine will reboot automatically in 10 seconds"${END}

sleep 10

reboot

fi

}

main(){

os

check_file

install_containerd

set_alias

install_netdctl_buildkit

nerdctl_command_completion

set_swap_limit

}

main

[root@k8s-master02 ~]# bash install_containerd_binary.sh

[root@k8s-master03 ~]# bash install_containerd_binary.sh

[root@k8s-node01 ~]# bash install_containerd_binary.sh

[root@k8s-node02 ~]# bash install_containerd_binary.sh

[root@k8s-node03 ~]# bash install_containerd_binary.sh
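
The long `sed` command in `install_containerd` is hard to read at a glance. The following is a minimal sketch that previews two of its substitutions (the `SystemdCgroup` switch and the `sandbox_image` rewrite) on a throwaway sample file instead of the real `/etc/containerd/config.toml`. The function name `preview_containerd_sed`, the sample contents, and the `PAUSE_VERSION` value are assumptions made for illustration; the registry mirror and auth insertions are not covered.

```shell
#!/bin/bash
# Sketch: preview two of the sed substitutions on a temporary sample file.
# HARBOR_DOMAIN and PAUSE_VERSION are illustrative values, not read from the script.
HARBOR_DOMAIN=harbor.raymonds.cc
PAUSE_VERSION=3.8

preview_containerd_sed() {
    local sample
    sample=$(mktemp)
    # Two lines shaped like the ones `containerd config default` emits (assumed sample).
    cat > "${sample}" <<'EOF'
    sandbox_image = "k8s.gcr.io/pause:3.6"
            SystemdCgroup = false
EOF
    # Same patterns as the script, with plain double-quoted variable expansion.
    sed -r -e 's/(.*SystemdCgroup = ).*/\1true/' \
           -e 's@(.*sandbox_image = ).*@\1"'"${HARBOR_DOMAIN}"'/google_containers/pause:'"${PAUSE_VERSION}"'"@' \
           "${sample}"
    rm -f "${sample}"
}

preview_containerd_sed
```

On a real node, the same two settings can be checked after installation with `grep -E 'SystemdCgroup|sandbox_image' /etc/containerd/config.toml`.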

3.5 Installing kubeadm and related components

On CentOS, configure the Kubernetes package repository and install the Kubernetes components:

[root@k8s-master01 ~]# cat > /etc/yum.repos.d/kubernetes.repo <<-EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-\$basearch

enabled=1

gpgcheck=1

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

[root@k8s-master01 ~]# yum list kubeadm.x86_64 --showduplicates | sort -r|grep 1.25

kubeadm.x86_64 1.25.0-0 kubernetes

[root@k8s-master01 ~]# yum -y install kubeadm-1.25.0 kubelet-1.25.0 kubectl-1.25.0
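
The backslash in `\$basearch` matters: inside an unquoted heredoc the shell expands variables, so the escape keeps `$basearch` literal for yum to resolve later. Here is a small sketch that renders the same repo definition to stdout so the result can be inspected before writing it to `/etc/yum.repos.d/`. The helper name `render_k8s_repo` is hypothetical.

```shell
#!/bin/bash
# Sketch: print the repo definition instead of writing /etc/yum.repos.d/kubernetes.repo.
# render_k8s_repo is a hypothetical helper; the mirror host is passed as an argument.
render_k8s_repo() {
    local mirror=$1
    # ${mirror} is expanded by the shell; \$basearch stays literal for yum.
    cat <<EOF
[kubernetes]
name=Kubernetes
baseurl=https://${mirror}/kubernetes/yum/repos/kubernetes-el7-\$basearch
enabled=1
gpgcheck=1
repo_gpgcheck=0
gpgkey=https://${mirror}/kubernetes/yum/doc/yum-key.gpg https://${mirror}/kubernetes/yum/doc/rpm-package-key.gpg
EOF
}

render_k8s_repo mirrors.aliyun.com
```

Redirecting the output, for example `render_k8s_repo mirrors.aliyun.com > /etc/yum.repos.d/kubernetes.repo`, produces the same file as the heredoc above.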

Ubuntu:

root@k8s-master01:~# apt update

root@k8s-master01:~# apt install -y apt-transport-https

root@k8s-master01:~# curl -fsSL https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | apt-key add -

OK

root@k8s-master01:~# echo "deb https://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial main" > /etc/apt/sources.list.d/kubernetes.list

root@k8s-master01:~# apt update

root@k8s-master01:~# apt-cache madison kubeadm | grep 1.25

kubeadm | 1.25.0-00 | https://mirrors.aliyun.com/kubernetes/apt kubernetes-xenial/main amd64 Packages

root@k8s-master01:~# apt -y install kubelet=1.25.0-00 kubeadm=1.25.0-00 kubectl=1.25.0-00
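
Because the three packages are installed at a pinned version, it can help to generate the `name=version` arguments from a single variable and then hold the packages so a later `apt upgrade` does not move them. The sketch below only builds the argument string; `pinned_apt_packages` is a hypothetical helper, and the `-00` suffix is taken from the `apt-cache madison` output above.

```shell
#!/bin/bash
# Sketch: build the version-pinned package arguments used with apt.
# pinned_apt_packages is a hypothetical helper, not part of the original scripts.
pinned_apt_packages() {
    local version=$1 pkg out=""
    for pkg in kubelet kubeadm kubectl; do
        out+="${pkg}=${version}-00 "
    done
    # Drop the trailing space before printing.
    echo "${out% }"
}

pinned_apt_packages 1.25.0

# On a real Ubuntu node, as root:
#   apt -y install $(pinned_apt_packages 1.25.0)
#   apt-mark hold kubelet kubeadm kubectl
```

Holding the packages is optional for this walkthrough, but it keeps all nodes on the same version until an upgrade is planned.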

Enable kubelet to start at boot:

[root@k8s-master01 ~]# systemctl daemon-reload

[root@k8s-master01 ~]# systemctl enable --now kubelet

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.
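
At this stage the cluster has not been initialized, so kubelet has no configuration yet and systemd keeps restarting it until `kubeadm init` or `kubeadm join` runs. A status check made now may therefore report `activating` rather than `active`. The sketch below classifies the string printed by `systemctl is-active kubelet` with that in mind; the helper name `kubelet_state_acceptable` and the choice to accept `activating` are assumptions for illustration.

```shell
#!/bin/bash
# Sketch: classify the state string printed by `systemctl is-active kubelet`.
# Before kubeadm init/join, kubelet keeps restarting, so "activating" is
# treated as acceptable here (an assumption made for this illustration).
kubelet_state_acceptable() {
    case "$1" in
        active|activating) return 0 ;;
        *) return 1 ;;
    esac
}

# Usage on a real node (requires systemd):
#   state=$(systemctl is-active kubelet)
#   kubelet_state_acceptable "${state}" && echo "kubelet is enabled and waiting for kubeadm"
```

What matters before initialization is that the unit is enabled, which `systemctl is-enabled kubelet` confirms.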

On master02 and master03, install using a script:

[root@k8s-master02 ~]# cat install_kubeadm_for_master.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2022-01-11

#FileName: install_kubeadm_for_master.sh

#URL: raymond.blog.csdn.net

#Description: The test script

#Copyright (C): 2022 All rights reserved

#*********************************************************************************************

COLOR="echo -e \\033[01;31m"

END='\033[0m'

KUBEADM_MIRRORS=mirrors.aliyun.com

KUBEADM_VERSION=1.25.0

HARBOR_DOMAIN=harbor.raymonds.cc

os(){

OS_ID=`sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' /etc/os-release`

}

install_ubuntu_kubeadm(){

${COLOR}"Installing Kubeadm dependencies"${END}

apt update &> /dev/null && apt install -y apt-transport-https &> /dev/null

curl -fsSL https://${KUBEADM_MIRRORS}/kubernetes/apt/doc/apt-key.gpg | apt-key add - &> /dev/null

echo "deb https://"${KUBEADM_MIRRORS}"/kubernetes/apt kubernetes-xenial main" > /etc/apt/sources.list.d/kubernetes.list

apt update &> /dev/null

${COLOR}"Available Kubeadm versions:"${END}

apt-cache madison kubeadm

${COLOR}"Kubeadm-"${KUBEADM_VERSION}" will be installed in 10 seconds..."${END}

${COLOR}"To install a different Kubeadm version, press Ctrl+C to exit, change the version, and run the script again"${END}

sleep 10

${COLOR}"Installing Kubeadm"${END}

apt -y install kubelet=${KUBEADM_VERSION}-00 kubeadm=${KUBEADM_VERSION}-00 kubectl=${KUBEADM_VERSION}-00 &> /dev/null

${COLOR}"Kubeadm installation complete"${END}

}

install_centos_kubeadm(){

cat > /etc/yum.repos.d/kubernetes.repo <<-EOF

[kubernetes]

name=Kubernetes

baseurl=https://${KUBEADM_MIRRORS}/kubernetes/yum/repos/kubernetes-el7-\$basearch

enabled=1

gpgcheck=1

repo_gpgcheck=0

gpgkey=https://${KUBEADM_MIRRORS}/kubernetes/yum/doc/yum-key.gpg https://${KUBEADM_MIRRORS}/kubernetes/yum/doc/rpm-package-key.gpg

EOF

${COLOR}"Available Kubeadm versions:"${END}

yum list kubeadm.x86_64 --showduplicates | sort -r

${COLOR}"Kubeadm-"${KUBEADM_VERSION}" will be installed in 10 seconds..."${END}

${COLOR}"To install a different Kubeadm version, press Ctrl+C to exit, change the version, and run the script again"${END}

sleep 10

${COLOR}"Installing Kubeadm"${END}

yum -y install kubelet-${KUBEADM_VERSION} kubeadm-${KUBEADM_VERSION} kubectl-${KUBEADM_VERSION} &> /dev/null

${COLOR}"Kubeadm installation complete"${END}

}

start_service(){

systemctl daemon-reload

systemctl enable --now kubelet

systemctl is-active kubelet &> /dev/null && ${COLOR}"Kubelet service started successfully"${END} || { ${COLOR}"Kubelet failed to start"${END};exit 1; }

kubelet --version && ${COLOR}"Kubelet installed successfully"${END} || ${COLOR}"Kubelet installation failed"${END}

}

main(){

os

if [ "${OS_ID}" == "CentOS" -o "${OS_ID}" == "Rocky" ];then

install_centos_kubeadm

else

install_ubuntu_kubeadm

fi

start_service

}

main

[root@k8s-master02 ~]# bash install_kubeadm_for_master.sh

[root@k8s-master03 ~]# bash install_kubeadm_for_master.sh
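
The script chooses between the yum and apt paths based on the first word of `NAME=` in `/etc/os-release`. The following sketch runs the same `sed` expression against sample content to show what `OS_ID` ends up holding. The wrapper name `detect_os_id` and the sample files are assumptions for illustration; only the `sed` expression comes from the `os` function.

```shell
#!/bin/bash
# Sketch: run the script's NAME-parsing sed against sample os-release content.
# detect_os_id is a hypothetical wrapper; the sed expression is copied from os().
detect_os_id() {
    sed -rn '/^NAME=/s@.*="([[:alpha:]]+).*"$@\1@p' "$1"
}

sample=$(mktemp)
for name in "CentOS Linux" "Rocky Linux" "Ubuntu"; do
    # PRETTY_NAME is included to show that only the line starting with NAME= is matched.
    printf 'NAME="%s"\nPRETTY_NAME="%s sample"\n' "${name}" "${name}" > "${sample}"
    echo "${name} -> $(detect_os_id "${sample}")"
done
rm -f "${sample}"
```

Note that `main` sends every value other than `CentOS` or `Rocky` down the apt branch, so the script assumes any other distribution is Ubuntu.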

Install kubeadm on the worker nodes:

[root@k8s-node01 ~]# cat install_kubeadm_for_node.sh

#!/bin/bash

#

#**********************************************************************************************

#Author: Raymond

#QQ: 88563128

#Date: 2022-01-11