Configuring DataX to write to HDFS with NameNode HA (high availability)

Time: 2023-09-14 09:14:50

Config generation script

vim gen_import_config_ha.py

Contents:

# coding=utf-8
import json
import getopt
import os
import sys
import MySQLdb

# MySQL settings; adjust to your environment
mysql_host = "cpu102"
mysql_port = "3306"
mysql_user = "root"
mysql_passwd = "xxxxx"

# HDFS HA settings; adjust to your environment
my_nameservices = "mycluster"
my_namenodes_1 = "nn1"
my_namenodes_2 = "nn2"
my_rpc_address_1 = "cpu101:8020"
my_rpc_address_2 = "cpu102:8020"

# Target directory for the generated job files; adjust as needed
output_path = "/opt/module/datax/job/import"

# Open a MySQL connection
def get_connection():
    return MySQLdb.connect(host=mysql_host, port=int(mysql_port), user=mysql_user, passwd=mysql_passwd)

# Fetch the table's metadata: column names and data types, in column order
def get_mysql_meta(database, table):
    connection = get_connection()
    cursor = connection.cursor()
    sql = "SELECT COLUMN_NAME,DATA_TYPE from information_schema.COLUMNS WHERE TABLE_SCHEMA=%s AND TABLE_NAME=%s ORDER BY ORDINAL_POSITION"
    cursor.execute(sql, [database, table])
    fetchall = cursor.fetchall()
    cursor.close()
    connection.close()
    return fetchall

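# Return the column names of a MySQL table
def get_mysql_columns(database, table):
    # Build a real list (not a lazy map object) so json.dump can serialize it on Python 3 too
    return [x[0] for x in get_mysql_meta(database, table)]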

# Convert the MySQL data types in the metadata to Hive data types
# for the hdfswriter column list
def get_hive_columns(database, table):
    def type_mapping(mysql_type):
        mappings = {
            "bigint": "bigint",
            "int": "bigint",
            "smallint": "bigint",
            "tinyint": "bigint",
            "decimal": "string",
            "double": "double",
            "float": "float",
            "binary": "string",
            "char": "string",
            "varchar": "string",
            "datetime": "string",
            "time": "string",
            "timestamp": "string",
            "date": "string",
            "text": "string"
        }
        return mappings[mysql_type]

    meta = get_mysql_meta(database, table)
    # Build a real list (not a lazy map object) so json.dump can serialize it on Python 3 too
    return [{"name": x[0], "type": type_mapping(x[1].lower())} for x in meta]

# Generate the DataX job JSON file
def generate_json(source_database, source_table):
    job = {
        "job": {
            "setting": {
                "speed": {
                    "channel": 3
                },
                "errorLimit": {
                    "record": 0,
                    "percentage": 0.02
                }
            },
            "content": [{
                "reader": {
                    "name": "mysqlreader",
                    "parameter": {
                        "username": mysql_user,
                        "password": mysql_passwd,
                        "column": get_mysql_columns(source_database, source_table),
                        "splitPk": "",
                        "connection": [{
                            "table": [source_table],
                            "jdbcUrl": ["jdbc:mysql://" + mysql_host + ":" + mysql_port + "/" + source_database]
                        }]
                    }
                },
                "writer": {
                    "name": "hdfswriter",
                    "parameter": {
                        # Point at the nameservice, not at a single NameNode host
                        "defaultFS": "hdfs://" + my_nameservices,
                        # HA client settings passed through to the HDFS client
                        "hadoopConfig": {
                            "dfs.nameservices": my_nameservices,
                            "dfs.ha.namenodes." + my_nameservices: my_namenodes_1 + "," + my_namenodes_2,
                            "dfs.namenode.rpc-address." + my_nameservices + "." + my_namenodes_1: my_rpc_address_1,
                            "dfs.namenode.rpc-address." + my_nameservices + "." + my_namenodes_2: my_rpc_address_2,
                            "dfs.client.failover.proxy.provider." + my_nameservices: "org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider"
                        },
                        "fileType": "text",
                        "path": "${targetdir}",
                        "fileName": source_table,
                        "column": get_hive_columns(source_database, source_table),
                        "writeMode": "append",
                        "fieldDelimiter": "\t",
                        "compress": "gzip"
                    }
                }
            }]
        }
    }
    if not os.path.exists(output_path):
        os.makedirs(output_path)
    # The file is written as <output_path>/<database>.<table>.json
    with open(os.path.join(output_path, ".".join([source_database, source_table, "json"])), "w") as f:
        json.dump(job, f)

def main(args):
    source_database = ""
    source_table = ""

    # -d / --sourcedb: source database; -t / --sourcetbl: source table
    options, arguments = getopt.getopt(args, 'd:t:', ['sourcedb=', 'sourcetbl='])
    for opt_name, opt_value in options:
        if opt_name in ('-d', '--sourcedb'):
            source_database = opt_value
        if opt_name in ('-t', '--sourcetbl'):
            source_table = opt_value

    generate_json(source_database, source_table)

if __name__ == '__main__':
    main(sys.argv[1:])
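
To see what the HA part of the writer configuration amounts to, the `hadoopConfig` block can be built and printed on its own. The sketch below is not part of the original script: `build_hadoop_config` is a hypothetical helper, and the values are the sample ones from the configuration section above.

```python
import json

# Hypothetical helper (not in the original script): builds the HDFS HA client
# settings that the script places under the hdfswriter "hadoopConfig" key.
def build_hadoop_config(nameservice, namenodes):
    """namenodes is a list of (namenode_id, rpc_address) pairs."""
    config = {
        "dfs.nameservices": nameservice,
        "dfs.ha.namenodes." + nameservice: ",".join(nn_id for nn_id, _ in namenodes),
        "dfs.client.failover.proxy.provider." + nameservice:
            "org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider",
    }
    # One rpc-address entry per NameNode ID
    for nn_id, rpc_address in namenodes:
        config["dfs.namenode.rpc-address." + nameservice + "." + nn_id] = rpc_address
    return config

# Sample values taken from the script's configuration section
hadoop_config = build_hadoop_config(
    "mycluster", [("nn1", "cpu101:8020"), ("nn2", "cpu102:8020")]
)
print(json.dumps(hadoop_config, indent=2, sort_keys=True))
```

The nameservice name, NameNode IDs, and RPC addresses should match the values in the cluster's own hdfs-site.xml; `defaultFS` then refers to the nameservice (`hdfs://mycluster`) rather than to one NameNode host.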

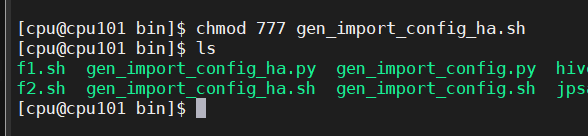
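
The `type_mapping` lookup in the script raises `KeyError` for any MySQL type that is not in its table (for example `mediumint` or `longtext`). One possible variation, offered as an assumption rather than as the author's approach, is to fall back to `string` for unmapped types. `to_hive_type` and `FALLBACK_TYPE` are hypothetical names.

```python
# Same mapping table as in the script above
MYSQL_TO_HIVE = {
    "bigint": "bigint",
    "int": "bigint",
    "smallint": "bigint",
    "tinyint": "bigint",
    "decimal": "string",
    "double": "double",
    "float": "float",
    "binary": "string",
    "char": "string",
    "varchar": "string",
    "datetime": "string",
    "time": "string",
    "timestamp": "string",
    "date": "string",
    "text": "string",
}

# Assumption: any MySQL type missing from the table is written as a Hive string
FALLBACK_TYPE = "string"

def to_hive_type(mysql_type):
    """Map a MySQL DATA_TYPE value to a Hive type, case-insensitively."""
    return MYSQL_TO_HIVE.get(mysql_type.lower(), FALLBACK_TYPE)

print(to_hive_type("INT"))
print(to_hive_type("longtext"))
```

Whether a silent fallback is preferable to failing fast depends on the tables involved; failing on an unknown type, as the original does, makes schema surprises visible immediately.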

Permissions:

chmod 777 gen_import_config_ha.py
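
Once the script has been run for a table, the generated file can be checked before it is handed to DataX. A minimal sketch, assuming the job layout produced by `generate_json`: `missing_ha_keys` is a hypothetical helper, and the inline `sample_job` stands in for a file loaded with `json.load`.

```python
def missing_ha_keys(job):
    """Return the HA client settings missing from the job's hdfswriter hadoopConfig."""
    writer_params = job["job"]["content"][0]["writer"]["parameter"]
    hadoop_config = writer_params.get("hadoopConfig", {})
    nameservice = hadoop_config.get("dfs.nameservices")
    if not nameservice:
        return ["dfs.nameservices"]
    required = [
        "dfs.ha.namenodes." + nameservice,
        "dfs.client.failover.proxy.provider." + nameservice,
    ]
    # Every NameNode ID listed for the nameservice needs its own rpc-address entry
    namenode_ids = hadoop_config.get("dfs.ha.namenodes." + nameservice, "")
    for nn_id in namenode_ids.split(","):
        if nn_id:
            required.append("dfs.namenode.rpc-address." + nameservice + "." + nn_id)
    return [key for key in required if key not in hadoop_config]

# Stand-in for a generated job file; the nn2 rpc-address is left out on purpose
sample_job = {
    "job": {"content": [{"writer": {"name": "hdfswriter", "parameter": {
        "defaultFS": "hdfs://mycluster",
        "hadoopConfig": {
            "dfs.nameservices": "mycluster",
            "dfs.ha.namenodes.mycluster": "nn1,nn2",
            "dfs.namenode.rpc-address.mycluster.nn1": "cpu101:8020",
            "dfs.client.failover.proxy.provider.mycluster":
                "org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider",
        },
    }}}]}
}
print(missing_ha_keys(sample_job))
```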

Script to generate the configs for all tables

vim gen_import_config_ha.sh

#!/bin/bash

python ~/bin/gen_import_config_ha.py -d gmall -t activity_info
python ~/bin/gen_import_config_ha.py -d gmall -t activity_rule
python ~/bin/gen_import_config_ha.py -d gmall -t base_category1
python ~/bin/gen_import_config_ha.py -d gmall -t base_category2
python ~/bin/gen_import_config_ha.py -d gmall -t base_category3
python ~/bin/gen_import_config_ha.py -d gmall -t base_dic
python ~/bin/gen_import_config_ha.py -d gmall -t base_province
python ~/bin/gen_import_config_ha.py -d gmall -t base_region
python ~/bin/gen_import_config_ha.py -d gmall -t base_trademark
python ~/bin/gen_import_config_ha.py -d gmall -t cart_info
python ~/bin/gen_import_config_ha.py -d gmall -t coupon_info
python ~/bin/gen_import_config_ha.py -d gmall -t sku_attr_value
python ~/bin/gen_import_config_ha.py -d gmall -t sku_info
python ~/bin/gen_import_config_ha.py -d gmall -t sku_sale_attr_value
python ~/bin/gen_import_config_ha.py -d gmall -t spu_info

Permissions:

chmod 777 gen_import_config_ha.sh
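
The same batch run can also be driven from Python with a table list instead of one line per table. This is only a sketch: `build_commands` is a hypothetical helper, the script path is the one used in the shell script above, and the `subprocess` call is left commented out so the example runs without MySQL or the generator script present.

```python
import os

# The tables listed in gen_import_config_ha.sh
TABLES = [
    "activity_info", "activity_rule", "base_category1", "base_category2",
    "base_category3", "base_dic", "base_province", "base_region",
    "base_trademark", "cart_info", "coupon_info", "sku_attr_value",
    "sku_info", "sku_sale_attr_value", "spu_info",
]

def build_commands(database, tables, script="~/bin/gen_import_config_ha.py"):
    """Return one argv list per table, mirroring the lines of the shell script."""
    script_path = os.path.expanduser(script)
    return [["python", script_path, "-d", database, "-t", table] for table in tables]

commands = build_commands("gmall", TABLES)
for command in commands:
    print(" ".join(command))
    # To actually run it: import subprocess; subprocess.check_call(command)
```

Keeping the table names in one list makes it easier to add or drop a table than editing repeated command lines.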
