KubeSphere: Installing a Minimal KubeSphere on an Existing Kubernetes Cluster

2023-09-14 09:12:51


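I. Create the StorageClass

1.1 Create the kubesphere-system namespace

Create the file ks-namespace.yaml with the following content:

apiVersion: v1
kind: Namespace
metadata:
  name: kubesphere-system
  labels:
    name: kubesphere-system

Then run the following command to create the namespace: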

kubectl apply -f ks-namespace.yaml

1.2 Create the RBAC authorization

Create the file sc-rbac.yaml with the following content:

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  namespace: kubesphere-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: nfs-client-provisioner-cr
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get","list","watch","create","delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get","list","watch","update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get","list","watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["list","watch","create","update","patch"]
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["create","delete","get","list","watch","patch","update"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: nfs-client-provisioner-crb
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    namespace: kubesphere-system
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-cr
  apiGroup: rbac.authorization.k8s.io

Run the following command to create these resources:

kubectl apply -f sc-rbac.yaml

1.3 Create the Deployment for the StorageClass provisioner

Create the file sc-deployment.yaml with the content below. Pay attention to the following settings:

- NFS_SERVER: the IP address of the server that runs the NFS service

- NFS_PATH: the export directory that was created when the NFS service was installed

- the nfs entry under volumes must point to the same NFS server and path

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  namespace: kubesphere-system
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nfs-client-provisioner
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-beijing.aliyuncs.com/xngczl/nfs-subdir-external-provisione:v4.0.0
          env:
            - name: PROVISIONER_NAME
              value: nfs-storage-class
            - name: NFS_SERVER
              value: 192.168.16.40
            - name: NFS_PATH
              value: /root/data/nfs/storageclass
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
      volumes:
        - name: nfs-client-root
          nfs:
            server: 192.168.16.40
            path: /root/data/nfs/storageclass
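The NFS server address and export path each appear twice in this manifest (under env and under volumes), so it is easy to update one and forget the other. The following is a minimal sketch of one way to swap both at once. It assumes the file still contains the example values from this article (192.168.16.40 and /root/data/nfs/storageclass); the function name render_nfs_target and the sample values in the usage line are hypothetical.

```shell
#!/bin/sh
# render_nfs_target FILE SERVER EXPORT_PATH
# Hypothetical helper: prints FILE with the article's example NFS server and
# export path replaced by the given values, in every place they occur.
# Plain text substitution, not a YAML parser; values containing "|", "&" or
# "\" would need escaping before being passed in.
render_nfs_target() {
  file=$1
  server=$2
  export_path=$3
  sed -e "s|192\.168\.16\.40|$server|g" \
      -e "s|/root/data/nfs/storageclass|$export_path|g" "$file"
}
```

A possible use is `render_nfs_target sc-deployment.yaml 10.0.0.5 /srv/nfs/k8s > sc-deployment.local.yaml`, after which the generated file is the one to apply.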

Then run the following command to deploy it:

kubectl apply -f sc-deployment.yaml

1.4 Create the StorageClass

Create the file sc-resource.yaml with the content below. Note that the value of provisioner must match the value of the PROVISIONER_NAME variable set under env in step 1.3.

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storageclass
provisioner: nfs-storage-class
allowVolumeExpansion: true
reclaimPolicy: Retain
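If the two names differ, no provisioner watches for claims of this class and they stay Pending. As a rough sketch, the match can be checked with a text scan before applying. This assumes both files are laid out as in this article, with each `value:` on the line right after its `name:`; check_provisioner_name is a hypothetical helper name.

```shell
#!/bin/sh
# check_provisioner_name DEPLOYMENT_FILE STORAGECLASS_FILE
# Hypothetical helper: compares the PROVISIONER_NAME env value in the
# Deployment manifest with the "provisioner:" field of the StorageClass.
# Plain text scan, not a YAML parser.
check_provisioner_name() {
  # First "value:" line following the "name: PROVISIONER_NAME" line.
  env_name=$(awk '/name: PROVISIONER_NAME/ {f=1; next} f && /value:/ {print $2; exit}' "$1")
  # First line whose key is exactly "provisioner:".
  sc_name=$(awk '$1 == "provisioner:" {print $2; exit}' "$2")

  if [ -n "$env_name" ] && [ "$env_name" = "$sc_name" ]; then
    echo "OK: $env_name"
  else
    echo "MISMATCH: PROVISIONER_NAME=$env_name provisioner=$sc_name" >&2
    return 1
  fi
}
```

For the files in this article the call would be `check_provisioner_name sc-deployment.yaml sc-resource.yaml`.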

Then run the following command to create it:

kubectl apply -f sc-resource.yaml

1.5 View the StorageClass

[root@master kubersphere]# kubectl get sc
NAME               PROVISIONER                    RECLAIMPOLICY   VOLUMEBINDINGMODE      ALLOWVOLUMEEXPANSION   AGE
nfs-storageclass   nfs-storage-class              Retain          Immediate              true                   33s
sc-nexus3          kubernetes.io/no-provisioner   Delete          WaitForFirstConsumer   false                  88d
[root@master kubersphere]#

1.6 Set the default StorageClass

kubectl patch storageclass nfs-storageclass -p '{"metadata": {"annotations":{"storageclass.kubernetes.io/is-default-class":"true"}}}'
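The same annotation with the value "false" removes the default marker again, which helps if another class in the cluster is already marked as default. A small sketch that builds the patch body for either case follows; default_sc_patch is a hypothetical helper name, and the class name in the usage line is a placeholder.

```shell
#!/bin/sh
# default_sc_patch true|false
# Hypothetical helper: prints the JSON patch body that sets the
# storageclass.kubernetes.io/is-default-class annotation to the given value.
default_sc_patch() {
  case $1 in
    true|false) ;;
    *) echo "usage: default_sc_patch true|false" >&2; return 1 ;;
  esac
  printf '{"metadata":{"annotations":{"storageclass.kubernetes.io/is-default-class":"%s"}}}\n' "$1"
}
```

For example, `kubectl patch storageclass <other-class> -p "$(default_sc_patch false)"` would unset the marker on a class named `<other-class>`.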

Check again; nfs-storageclass is now marked as the default StorageClass:

[root@master kubersphere]# kubectl get sc
NAME                         PROVISIONER                    RECLAIMPOLICY   VOLUMEBINDINGMODE      ALLOWVOLUMEEXPANSION   AGE
nfs-storageclass (default)   nfs-storage-class              Retain          Immediate              true                   2m6s
sc-nexus3                    kubernetes.io/no-provisioner   Delete          WaitForFirstConsumer   false                  88d
[root@master kubersphere]#
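Before installing KubeSphere it can be worth confirming that the class really provisions volumes. The sketch below prints a small test PersistentVolumeClaim; the helper name render_test_pvc, the claim name test-claim, and the 1Mi size are assumptions made for illustration and do not come from the original setup.

```shell
#!/bin/sh
# render_test_pvc [CLAIM_NAME] [STORAGE_CLASS]
# Hypothetical helper: prints a minimal PVC manifest that requests a volume
# from the given StorageClass (defaults: test-claim, nfs-storageclass).
render_test_pvc() {
  name=${1:-test-claim}
  sc=${2:-nfs-storageclass}
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: $name
spec:
  storageClassName: $sc
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Mi
EOF
}
```

Piping the output to `kubectl apply -f -` and then running `kubectl get pvc test-claim` should show the claim as Bound once the provisioner has created a volume. Because the class uses reclaimPolicy: Retain, deleting the test claim afterwards leaves its PersistentVolume behind, so that has to be removed separately.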

II. Deploy a minimal KubeSphere

2.1 Download the KubeSphere configuration files

curl -L -O https://github.com/kubesphere/ks-installer/releases/download/v3.3.1/cluster-configuration.yaml

curl -L -O https://github.com/kubesphere/ks-installer/releases/download/v3.3.1/kubesphere-installer.yaml
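Both URLs follow the same pattern and differ only in the file name, so the release tag can be kept in one place. A minimal sketch, assuming other releases publish the same two file names (only v3.3.1 is used in this article); the function names are hypothetical:

```shell
#!/bin/sh
# ks_release_url VERSION FILE
# Hypothetical helper: builds the download URL following the pattern of the
# two curl commands above.
ks_release_url() {
  echo "https://github.com/kubesphere/ks-installer/releases/download/$1/$2"
}

# download_ks_manifests [VERSION]
# Downloads both manifests for one release tag (default: v3.3.1).
# curl -f makes the download fail on an HTTP error instead of saving the
# error page under the manifest's file name.
download_ks_manifests() {
  version=${1:-v3.3.1}
  for f in kubesphere-installer.yaml cluster-configuration.yaml; do
    curl -fL -O "$(ks_release_url "$version" "$f")" || return 1
  done
}
```

Calling `download_ks_manifests` with no argument fetches the same two files as the commands above.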

2.2 The kubesphere-installer.yaml configuration file

Note that this file does not need to be modified.

---
apiVersion: apiextensions.k8s.io/v1
kind: CustomResourceDefinition
metadata:
  name: clusterconfigurations.installer.kubesphere.io
spec:
  group: installer.kubesphere.io
  versions:
    - name: v1alpha1
      served: true
      storage: true
      schema:
        openAPIV3Schema:
          type: object
          properties:
            spec:
              type: object
              x-kubernetes-preserve-unknown-fields: true
            status:
              type: object
              x-kubernetes-preserve-unknown-fields: true
  scope: Namespaced
  names:
    plural: clusterconfigurations
    singular: clusterconfiguration
    kind: ClusterConfiguration
    shortNames:
      - cc
---
apiVersion: v1
kind: Namespace
metadata:
  name: kubesphere-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: ks-installer
  namespace: kubesphere-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: ks-installer
rules:
- apiGroups:
  - ""
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - apps
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - extensions
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - batch
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - rbac.authorization.k8s.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - apiregistration.k8s.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - apiextensions.k8s.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - tenant.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - certificates.k8s.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - devops.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - monitoring.coreos.com
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - logging.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - jaegertracing.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - storage.k8s.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - admissionregistration.k8s.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - policy
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - autoscaling
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - networking.istio.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - config.istio.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - iam.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - notification.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - auditing.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - events.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - core.kubefed.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - installer.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - storage.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - security.istio.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - monitoring.kiali.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - kiali.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - networking.k8s.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - edgeruntime.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - types.kubefed.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - monitoring.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
- apiGroups:
  - application.kubesphere.io
  resources:
  - '*'
  verbs:
  - '*'
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: ks-installer
subjects:
- kind: ServiceAccount
  name: ks-installer
  namespace: kubesphere-system
roleRef:
  kind: ClusterRole
  name: ks-installer
  apiGroup: rbac.authorization.k8s.io
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ks-installer
  namespace: kubesphere-system
  labels:
    app: ks-installer
spec:
  replicas: 1
  selector:
    matchLabels:
      app: ks-installer
  template:
    metadata:
      labels:
        app: ks-installer
    spec:
      serviceAccountName: ks-installer
      containers:
      - name: installer
        image: kubesphere/ks-installer:v3.3.1
        imagePullPolicy: "Always"
        resources:
          limits:
            cpu: "1"
            memory: 1Gi
          requests:
            cpu: 20m
            memory: 100Mi
        volumeMounts:
        - mountPath: /etc/localtime
          name: host-time
          readOnly: true
      volumes:
      - hostPath:
          path: /etc/localtime
          type: ""
        name: host-time

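2.3 The cluster-configuration.yaml configuration file

Note that this file likewise needs no changes for a minimal installation. The listing below names nfs-storageclass explicitly under persistence.storageClass; as the comment on that line explains, naming a class is only required when the cluster has no default StorageClass, and one was set in step 1.6.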

---
apiVersion: installer.kubesphere.io/v1alpha1
kind: ClusterConfiguration
metadata:
  name: ks-installer
  namespace: kubesphere-system
  labels:
    version: v3.3.1
spec:
  persistence:
    storageClass: "nfs-storageclass" # If there is no default StorageClass in your cluster, you need to specify an existing StorageClass here.
  authentication:
    # adminPassword: "" # Custom password of the admin user. If the parameter exists but the value is empty, a random password is generated. If the parameter does not exist, P@88w0rd is used.
    jwtSecret: "" # Keep the jwtSecret consistent with the Host Cluster. Retrieve the jwtSecret by executing "kubectl -n kubesphere-system get cm kubesphere-config -o yaml | grep -v "apiVersion" | grep jwtSecret" on the Host Cluster.
  local_registry: "" # Add your private registry address if it is needed.
  # dev_tag: "" # Add your kubesphere image tag you want to install, by default it's same as ks-installer release version.
  etcd:
    monitoring: false # Enable or disable etcd monitoring dashboard installation. You have to create a Secret for etcd before you enable it.
    endpointIps: localhost # etcd cluster EndpointIps. It can be a bunch of IPs here.
    port: 2379 # etcd port.
    tlsEnable: true
  common:
    core:
      console:
        enableMultiLogin: true # Enable or disable simultaneous logins. It allows different users to log in with the same account at the same time.
        port: 30880
        type: NodePort
    # apiserver: # Enlarge the apiserver and controller manager's resource requests and limits for the large cluster
    #  resources: {}
    # controllerManager:
    #  resources: {}
    redis:
      enabled: false
      enableHA: false
      volumeSize: 2Gi # Redis PVC size.
    openldap:
      enabled: false
      volumeSize: 2Gi # openldap PVC size.
    minio:
      volumeSize: 20Gi # Minio PVC size.
    monitoring:
      # type: external # Whether to specify the external prometheus stack, and need to modify the endpoint at the next line.
      endpoint: http://prometheus-operated.kubesphere-monitoring-system.svc:9090 # Prometheus endpoint to get metrics data.
      GPUMonitoring: # Enable or disable the GPU-related metrics. If you enable this switch but have no GPU resources, Kubesphere will set it to zero.
        enabled: false
    gpu: # Install GPUKinds. The default GPU kind is nvidia.com/gpu. Other GPU kinds can be added here according to your needs.
      kinds:
      - resourceName: "nvidia.com/gpu"
        resourceType: "GPU"
        default: true
    es: # Storage backend for logging, events and auditing.
      # master:
      #   volumeSize: 4Gi # The volume size of Elasticsearch master nodes.
      #   replicas: 1 # The total number of master nodes. Even numbers are not allowed.
      #   resources: {}
      # data:
      #   volumeSize: 20Gi # The volume size of Elasticsearch data nodes.
      #   replicas: 1 # The total number of data nodes.
      #   resources: {}
      logMaxAge: 7 # Log retention time in built-in Elasticsearch. It is 7 days by default.
      elkPrefix: logstash # The string making up index names. The index name will be formatted as ks-<elk_prefix>-log.
      basicAuth:
        enabled: false
        username: ""
        password: ""
      externalElasticsearchHost: ""
      externalElasticsearchPort: ""
  alerting: # (CPU: 0.1 Core, Memory: 100 MiB) It enables users to customize alerting policies to send messages to receivers in time with different time intervals and alerting levels to choose from.
    enabled: false # Enable or disable the KubeSphere Alerting System.
    # thanosruler:
    #   replicas: 1
    #   resources: {}
  auditing: # Provide a security-relevant chronological set of records,recording the sequence of activities happening on the platform, initiated by different tenants.
    enabled: false # Enable or disable the KubeSphere Auditing Log System.
    # operator:
    #   resources: {}
    # webhook:
    #   resources: {}
  devops: # (CPU: 0.47 Core, Memory: 8.6 G) Provide an out-of-the-box CI/CD system based on Jenkins, and automated workflow tools including Source-to-Image & Binary-to-Image.
    enabled: false # Enable or disable the KubeSphere DevOps System.
    # resources: {}
    jenkinsMemoryLim: 8Gi # Jenkins memory limit.
    jenkinsMemoryReq: 4Gi # Jenkins memory request.
    jenkinsVolumeSize: 8Gi # Jenkins volume size.
  events: # Provide a graphical web console for Kubernetes Events exporting, filtering and alerting in multi-tenant Kubernetes clusters.
    enabled: false # Enable or disable the KubeSphere Events System.
    # operator:
    #   resources: {}
    # exporter:
    #   resources: {}
    # ruler:
    #   enabled: true
    #   replicas: 2
    #   resources: {}
  logging: # (CPU: 57 m, Memory: 2.76 G) Flexible logging functions are provided for log query, collection and management in a unified console. Additional log collectors can be added, such as Elasticsearch, Kafka and Fluentd.
    enabled: false # Enable or disable the KubeSphere Logging System.
    logsidecar:
      enabled: true
      replicas: 2
      # resources: {}
  metrics_server: # (CPU: 56 m, Memory: 44.35 MiB) It enables HPA (Horizontal Pod Autoscaler).
    enabled: false # Enable or disable metrics-server.
  monitoring:
    storageClass: "" # If there is an independent StorageClass you need for Prometheus, you can specify it here. The default StorageClass is used by default.
    node_exporter:
      port: 9100
      # resources: {}
    # kube_rbac_proxy:
    #   resources: {}
    # kube_state_metrics:
    #   resources: {}
    # prometheus:
    #   replicas: 1 # Prometheus replicas are responsible for monitoring different segments of data source and providing high availability.
    #   volumeSize: 20Gi # Prometheus PVC size.
    #   resources: {}
    #   operator:
    #     resources: {}
    # alertmanager:
    #   replicas: 1 # AlertManager Replicas.
    #   resources: {}
    # notification_manager:
    #   resources: {}
    #   operator:
    #     resources: {}
    #   proxy:
    #     resources: {}
    gpu: # GPU monitoring-related plug-in installation.
      nvidia_dcgm_exporter: # Ensure that gpu resources on your hosts can be used normally, otherwise this plug-in will not work properly.
        enabled: false # Check whether the labels on the GPU hosts contain "nvidia.com/gpu.present=true" to ensure that the DCGM pod is scheduled to these nodes.
        # resources: {}
  multicluster:
    clusterRole: none # host | member | none # You can install a solo cluster, or specify it as the Host or Member Cluster.
  network:
    networkpolicy: # Network policies allow network isolation within the same cluster, which means firewalls can be set up between certain instances (Pods).
      # Make sure that the CNI network plugin used by the cluster supports NetworkPolicy. There are a number of CNI network plugins that support NetworkPolicy, including Calico, Cilium, Kube-router, Romana and Weave Net.
      enabled: false # Enable or disable network policies.
    ippool: # Use Pod IP Pools to manage the Pod network address space. Pods to be created can be assigned IP addresses from a Pod IP Pool.
      type: none # Specify "calico" for this field if Calico is used as your CNI plugin. "none" means that Pod IP Pools are disabled.
    topology: # Use Service Topology to view Service-to-Service communication based on Weave Scope.
      type: none # Specify "weave-scope" for this field to enable Service Topology. "none" means that Service Topology is disabled.
  openpitrix: # An App Store that is accessible to all platform tenants. You can use it to manage apps across their entire lifecycle.
    store:
      enabled: false # Enable or disable the KubeSphere App Store.
  servicemesh: # (0.3 Core, 300 MiB) Provide fine-grained traffic management, observability and tracing, and visualized traffic topology.
    enabled: false # Base component (pilot). Enable or disable KubeSphere Service Mesh (Istio-based).
    istio: # Customizing the istio installation configuration, refer to https://istio.io/latest/docs/setup/additional-setup/customize-installation/
      components:
        ingressGateways:
        - name: istio-ingressgateway
          enabled: false
        cni:
          enabled: false
  edgeruntime: # Add edge nodes to your cluster and deploy workloads on edge nodes.
    enabled: false
    kubeedge: # kubeedge configurations
      enabled: false
      cloudCore:
        cloudHub:
          advertiseAddress: # At least a public IP address or an IP address which can be accessed by edge nodes must be provided.
            - "" # Note that once KubeEdge is enabled, CloudCore will malfunction if the address is not provided.
        service:
          cloudhubNodePort: "30000"
          cloudhubQuicNodePort: "30001"
          cloudhubHttpsNodePort: "30002"
          cloudstreamNodePort: "30003"
          tunnelNodePort: "30004"
        # resources: {}
        # hostNetWork: false
      iptables-manager:
        enabled: true
        mode: "external"
        # resources: {}
      # edgeService:
      #   resources: {}
  gatekeeper: # Provide admission policy and rule management, A validating (mutating TBA) webhook that enforces CRD-based policies executed by Open Policy Agent.
    enabled: false # Enable or disable Gatekeeper.
    # controller_manager:
    #   resources: {}
    # audit:
    #   resources: {}
  terminal:
    # image: 'alpine:3.15' # There must be an nsenter program in the image
    timeout: 600 # Container timeout, if set to 0, no timeout will be used. The unit is seconds

2.4 Run the following commands to start the installation

kubectl apply -f kubesphere-installer.yaml

kubectl apply -f cluster-configuration.yaml

2.5 View the installation log

The installation log can then be followed with this command:

kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l 'app in (ks-install, ks-installer)' -o jsonpath='{.items[0].metadata.name}') -f

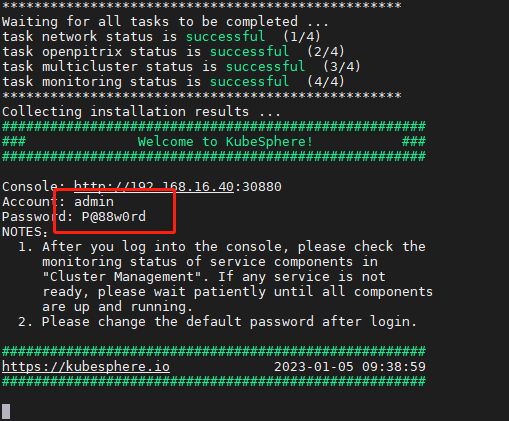

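Once the log reports that the installation has finished, KubeSphere has been installed successfully. The log also prints the admin user and its default password.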
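Instead of watching the log by hand, a script can poll it for the end-of-installation banner. The sketch below assumes the installer prints a line containing "Welcome to KubeSphere" when it finishes, which is how the v3.3 installer output is commonly shown; treat that marker, and the helper names install_finished and wait_for_install, as assumptions rather than a documented interface.

```shell
#!/bin/sh
# install_finished
# Hypothetical helper: reads installer log text on stdin and succeeds if it
# contains the assumed success banner.
install_finished() {
  grep -q 'Welcome to KubeSphere'
}

# wait_for_install [TRIES]
# Polls the ks-installer log every 10 seconds, up to TRIES times (default 60),
# and returns 0 as soon as the banner shows up, 1 if it never does.
wait_for_install() {
  tries=${1:-60}
  while [ "$tries" -gt 0 ]; do
    if kubectl logs -n kubesphere-system deploy/ks-installer 2>/dev/null | install_finished; then
      return 0
    fi
    tries=$((tries - 1))
    sleep 10
  done
  return 1
}
```

A call such as `wait_for_install 90 && echo "KubeSphere is ready"` would then wait up to roughly fifteen minutes.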

2.6 Access from a browser

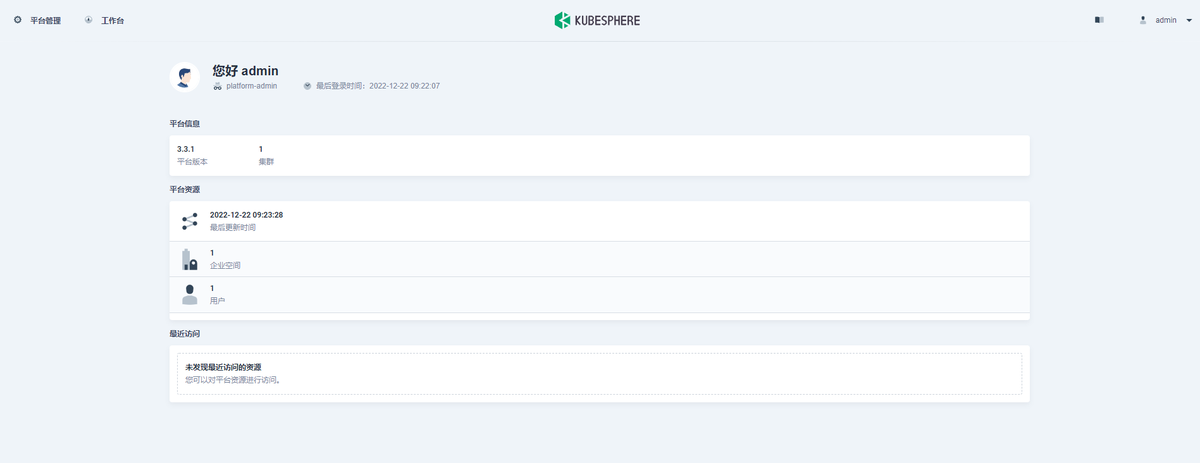

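Open IP:30880 in a browser, where IP is the address of a cluster node (the console is exposed as a NodePort service on port 30880). The default username and password are admin/P@88w0rd.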

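2.7 Change the password on first login

You are then prompted to change the password; set it to a password of your own.

2.8 Login succeeded

You are now logged in, which means the KubeSphere setup is complete.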
