六种PyTorch学习率调整策略(含代码)

目录

摘要

PyTorch学习率调整策略通过torch.optim.lr_scheduler接口实现。PyTorch提供的学习率调整策略分为三大类,分别是

- 有序调整:等间隔调整(Step),按需调整学习率(MultiStep),指数衰减调整(Exponential)和 余弦退火CosineAnnealing。

- 自适应调整:自适应调整学习率 ReduceLROnPlateau。

- 自定义调整:自定义调整学习率 LambdaLR。

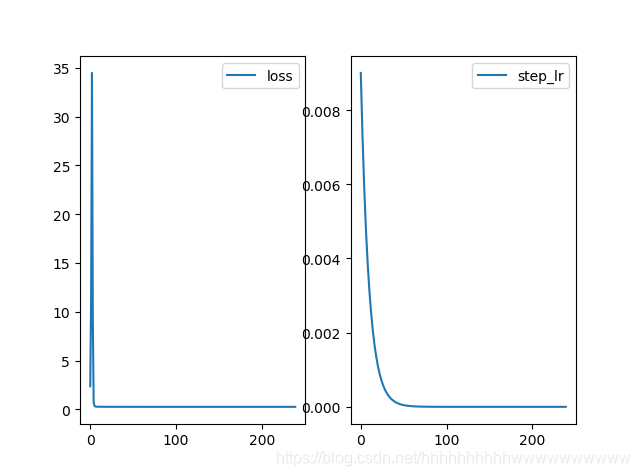

1、等间隔调整学习率 StepLR

等间隔调整学习率,调整倍数为 gamma 倍,调整间隔为 step_size。间隔单位是step。需要注意的是, step 通常是指 epoch,不要弄成 iteration 了。

torch.optim.lr_scheduler.StepLR(optimizer, step_size, gamma=0.1, last_epoch=-1)

参数设置

step_size(int)- 学习率下降间隔数,若为 30,则会在 30、 60、 90…个 step 时,将学习率调整为 lr*gamma。

gamma(float)- 学习率调整倍数,默认为 0.1 倍,即下降 10 倍。

last_epoch(int)- 上一个 epoch 数,这个变量用来指示学习率是否需要调整。当last_epoch 符合设定的间隔时,就会对学习率进行调整。当为-1 时,学习率设置为初始值。

举例

import torch

from torch import nn, optim

from torch.autograd import Variable

import numpy as np

import matplotlib.pyplot as plt

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

# Linear Regression Model

class LinearRegression(nn.Module):

def __init__(self):

super(LinearRegression, self).__init__()

self.linear1 = nn.Linear(1, 5) # input and output is 1 dimension

self.linear2 = nn.Linear(5, 1)

def forward(self, x):

out = self.linear1(x)

out = self.linear2(out)

return out

model = LinearRegression()

print(model.linear1)

# 定义loss和优化函数

criterion = nn.MSELoss()

optimizer = optim.SGD(

[{"params": model.linear1.parameters(), "lr": 0.01},

{"params": model.linear2.parameters()}],

lr=0.02)

step_schedule = optim.lr_scheduler.StepLR(step_size=20, gamma=0.9, optimizer=optimizer)

step_lr_list = []

loss_list = []

# 开始训练

num_epochs = 240

for epoch in range(num_epochs):

inputs = Variable(x_train)

target = Variable(y_train)

# forward

out = model(inputs)

loss = criterion(out, target)

# backward

optimizer.zero_grad()

loss.backward()

optimizer.step()

step_schedule.step()

step_lr_list.append(step_schedule.get_last_lr()[0])

loss_list.append(loss.item())

plt.subplot(121)

plt.plot(range(len(loss_list)), loss_list, label="loss")

plt.legend()

plt.subplot(122)

plt.plot(range(len(step_lr_list)), step_lr_list, label="step_lr")

plt.legend()

plt.show()

运行结果

注:

学习率调整要放在optimizer更新之后。如果scheduler.step()放在optimizer.update()的前面,将会跳过学习率更新的第一个值。

2、按需调整学习率 MultiStepLR

按设定的间隔调整学习率。这个方法适合后期调试使用,观察 loss 曲线,通过参数milestones给定衰减的epoch列表,可以在指定的epoch时期进行衰减。

torch.optim.lr_scheduler.MultiStepLR(optimizer, milestones, gamma=0.1, last_epoch=-1)

参数设置:

milestones(list)- 一个 list,每一个元素代表何时调整学习率, list 元素必须是递增的。如 milestones=[30,80,120]

gamma(float)- 学习率调整倍数,默认为 0.1 倍,即下降 10 倍。

举例:

import torch

from torch import nn, optim

from torch.autograd import Variable

import numpy as np

import matplotlib.pyplot as plt

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

# Linear Regression Model

class LinearRegression(nn.Module):

def __init__(self):

super(LinearRegression, self).__init__()

self.linear1 = nn.Linear(1, 5) # input and output is 1 dimension

self.linear2 = nn.Linear(5, 1)

def forward(self, x):

out = self.linear1(x)

out = self.linear2(out)

return out

model = LinearRegression()

print(model.linear1)

# 定义loss和优化函数

criterion = nn.MSELoss()

optimizer = optim.SGD(

[{"params": model.linear1.parameters(), "lr": 0.01},

{"params": model.linear2.parameters()}],

lr=0.02)

multi_schedule = optim.lr_scheduler.MultiStepLR(optimizer=optimizer,milestones=[120,180])

multi_list = []

loss_list = []

# 开始训练

num_epochs = 240

for epoch in range(num_epochs):

inputs = Variable(x_train)

target = Variable(y_train)

# forward

out = model(inputs)

loss = criterion(out, target)

# backward

optimizer.zero_grad()

loss.backward()

optimizer.step()

multi_schedule.step()

multi_list.append(multi_schedule.get_last_lr()[0])

loss_list.append(loss.item())

plt.subplot(121)

plt.plot(range(len(loss_list)), loss_list, label="loss")

plt.legend()

plt.subplot(122)

plt.plot(range(len(multi_list)), multi_list, label="step_lr")

plt.legend()

plt.show()

运行结果:

3、指数衰减调整学习率 ExponentialLR

按指数衰减调整学习率,调整公式:,e代表epoch。

torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma, last_epoch=-1)

参数设置:

gamma- 学习率调整倍数的底,指数为 epoch。

举例

import torch

from torch import nn, optim

from torch.autograd import Variable

import numpy as np

import matplotlib.pyplot as plt

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

# Linear Regression Model

class LinearRegression(nn.Module):

def __init__(self):

super(LinearRegression, self).__init__()

self.linear1 = nn.Linear(1, 5) # input and output is 1 dimension

self.linear2 = nn.Linear(5, 1)

def forward(self, x):

out = self.linear1(x)

out = self.linear2(out)

return out

model = LinearRegression()

print(model.linear1)

# 定义loss和优化函数

criterion = nn.MSELoss()

optimizer = optim.SGD(

[{"params": model.linear1.parameters(), "lr": 0.01},

{"params": model.linear2.parameters()}],

lr=0.02)

exponent_schedule = optim.lr_scheduler.ExponentialLR(optimizer=optimizer,gamma=0.9)

multi_list = []

loss_list = []

# 开始训练

num_epochs = 240

for epoch in range(num_epochs):

inputs = Variable(x_train)

target = Variable(y_train)

# forward

out = model(inputs)

loss = criterion(out, target)

# backward

optimizer.zero_grad()

loss.backward()

optimizer.step()

exponent_schedule.step()

multi_list.append(exponent_schedule.get_last_lr()[0])

loss_list.append(loss.item())

plt.subplot(121)

plt.plot(range(len(loss_list)), loss_list, label="loss")

plt.legend()

plt.subplot(122)

plt.plot(range(len(multi_list)), multi_list, label="step_lr")

plt.legend()

plt.show()

运行结果

4、余弦退火调整学习率 CosineAnnealingLR

以余弦函数为周期,并在每个周期最大值时重新设置学习率。以初始学习率为最大学习率,以 2∗Tmax为周期,在一个周期内先下降,后上升。

torch.optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max, eta_min=0, last_epoch=-1)

参数设置

T_max(int)- 一次学习率周期的迭代次数,即 T_max 个 epoch 之后重新设置学习率。

eta_min(float)- 最小学习率,即在一个周期中,学习率最小会下降到 eta_min,默认值为 0。

举例

import torch

from torch import nn, optim

from torch.autograd import Variable

import numpy as np

import matplotlib.pyplot as plt

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

# Linear Regression Model

class LinearRegression(nn.Module):

def __init__(self):

super(LinearRegression, self).__init__()

self.linear1 = nn.Linear(1, 5) # input and output is 1 dimension

self.linear2 = nn.Linear(5, 1)

def forward(self, x):

out = self.linear1(x)

out = self.linear2(out)

return out

model = LinearRegression()

print(model.linear1)

# 定义loss和优化函数

criterion = nn.MSELoss()

optimizer = optim.SGD(

[{"params": model.linear1.parameters(), "lr": 0.01},

{"params": model.linear2.parameters()}],

lr=0.02)

cosine_schedule = optim.lr_scheduler.CosineAnnealingLR(optimizer=optimizer,T_max=20,eta_min=0.0004)

multi_list = []

loss_list = []

# 开始训练

num_epochs = 240

for epoch in range(num_epochs):

inputs = Variable(x_train)

target = Variable(y_train)

# forward

out = model(inputs)

loss = criterion(out, target)

# backward

optimizer.zero_grad()

loss.backward()

optimizer.step()

cosine_schedule.step()

multi_list.append(cosine_schedule.get_last_lr()[0])

loss_list.append(loss.item())

plt.subplot(121)

plt.plot(range(len(loss_list)), loss_list, label="loss")

plt.legend()

plt.subplot(122)

plt.plot(range(len(multi_list)), multi_list, label="cosine_lr")

plt.legend()

plt.show()

运行结果

5、自适应调整学习率 ReduceLROnPlateau

当某指标不再变化(下降或升高),调整学习率,这是非常实用的学习率调整策略。

例如,当验证集的 loss 不再下降时,进行学习率调整;或者监测验证集的 accuracy,当accuracy 不再上升时,则调整学习率。

torch.optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10, verbose=False, threshold=0.0001, threshold_mode='rel', cooldown=0, min_lr=0, eps=1e-08)

参数设置

mode(str)- 模式选择,有 min 和 max 两种模式, min 表示当指标不再降低(如监测loss), max 表示当指标不再升高(如监测 accuracy)。

factor(float)- 学习率调整倍数(等同于其它方法的 gamma),即学习率更新为 lr = lr * factor

patience(int)- 忍受该指标多少个 step 不变化,当忍无可忍时,调整学习率。

verbose(bool)- 是否打印学习率信息, print(‘Epoch {:5d}: reducing learning rate of group {} to {:.4e}.’.format(epoch, i, new_lr))

threshold_mode(str)- 选择判断指标是否达最优的模式,有两种模式, rel 和 abs。

当 threshold_mode == rel,并且 mode == max 时, dynamic_threshold = best * ( 1 +threshold );

当 threshold_mode == rel,并且 mode == min 时, dynamic_threshold = best * ( 1 -threshold );

当 threshold_mode == abs,并且 mode== max 时, dynamic_threshold = best + threshold ;

当 threshold_mode == rel,并且 mode == max 时, dynamic_threshold = best - threshold;

threshold(float)- 配合 threshold_mode 使用。

cooldown(int)- “冷却时间“,当调整学习率之后,让学习率调整策略冷静一下,让模型再训练一段时间,再重启监测模式。

min_lr(float or list)- 学习率下限,可为 float,或者 list,当有多个参数组时,可用 list 进行设置。

eps(float)- 学习率衰减的最小值,当学习率变化小于 eps 时,则不调整学习率。

举例

import torch

from torch import nn, optim

from torch.autograd import Variable

import numpy as np

import matplotlib.pyplot as plt

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

# Linear Regression Model

class LinearRegression(nn.Module):

def __init__(self):

super(LinearRegression, self).__init__()

self.linear1 = nn.Linear(1, 5) # input and output is 1 dimension

self.linear2 = nn.Linear(5, 1)

def forward(self, x):

out = self.linear1(x)

out = self.linear2(out)

return out

model = LinearRegression()

print(model.linear1)

# 定义loss和优化函数

criterion = nn.MSELoss()

optimizer = optim.SGD(

[{"params": model.linear1.parameters(), "lr": 0.01},

{"params": model.linear2.parameters()}],

lr=0.02)

reduce_schedule = optim.lr_scheduler.ReduceLROnPlateau(optimizer, mode='min', factor=0.1, patience=10,

verbose=False, threshold=1e-4, threshold_mode='rel',

cooldown=0, min_lr=0, eps=1e-8)

multi_list = []

loss_list = []

# 开始训练

num_epochs = 240

for epoch in range(num_epochs):

inputs = Variable(x_train)

target = Variable(y_train)

# forward

out = model(inputs)

loss = criterion(out, target)

# backward

optimizer.zero_grad()

loss.backward()

optimizer.step()

reduce_schedule.step(loss)

multi_list.append(optimizer.param_groups[0]["lr"])

loss_list.append(loss.item())

plt.subplot(121)

plt.plot(range(len(loss_list)), loss_list, label="loss")

plt.legend()

plt.subplot(122)

plt.plot(range(len(multi_list)), multi_list, label="reduce_lr")

plt.legend()

plt.show()

运行结果

6、自定义调整学习率 LambdaLR

为不同参数组设定不同学习率调整策略。将每一个参数组的学习率设置为初始学习率lr的某个函数倍.

torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda, last_epoch=-1)

参数设置

lr_lambda(是一个函数,或者列表(list))--当是一个函数时,需要给其一个整数参数,使其计算出一个乘数因子,用于调整学习率,通常该输入参数是epoch数目或者是一组上面的函数组成的列表。

举例

import torch

from torch import nn, optim

from torch.autograd import Variable

import numpy as np

import matplotlib.pyplot as plt

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],

[9.779], [6.182], [7.59], [2.167], [7.042],

[10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],

[3.366], [2.596], [2.53], [1.221], [2.827],

[3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

x_train = torch.from_numpy(x_train)

y_train = torch.from_numpy(y_train)

# Linear Regression Model

class LinearRegression(nn.Module):

def __init__(self):

super(LinearRegression, self).__init__()

self.linear1 = nn.Linear(1, 5) # input and output is 1 dimension

self.linear2 = nn.Linear(5, 1)

def forward(self, x):

out = self.linear1(x)

out = self.linear2(out)

return out

lambda1 = lambda epoch: epoch//20

lambda2 = lambda epoch: 0.95**epoch

model = LinearRegression()

print(model.linear1)

# 定义loss和优化函数

criterion = nn.MSELoss()

optimizer = optim.SGD(

[{"params": model.linear1.parameters(), "lr": 0.01},

{"params": model.linear2.parameters()}],

lr=0.02)

lambda_schedule = optim.lr_scheduler.LambdaLR(optimizer=optimizer,lr_lambda=[lambda1,lambda2])

lambda1_list = []

lambda2_list = []

loss_list = []

# 开始训练

num_epochs = 240

for epoch in range(num_epochs):

inputs = Variable(x_train)

target = Variable(y_train)

# forward

out = model(inputs)

loss = criterion(out, target)

# backward

optimizer.zero_grad()

loss.backward()

optimizer.step()

lambda_schedule.step()

lambda1_list.append(optimizer.param_groups[0]["lr"])

lambda2_list.append(optimizer.param_groups[1]["lr"])

loss_list.append(loss.item())

plt.subplot(121)

plt.plot(range(len(loss_list)), loss_list, label="loss")

plt.legend()

plt.subplot(122)

plt.plot(range(len(lambda1_list)),lambda1_list,label="lambda1_lr")

plt.plot(range(len(lambda2_list)),lambda2_list,label="lambda2_lr")

plt.legend()

plt.show()

运行结果

总结

介绍完上面的学习率设置后,你肯定会问哪个更管用呢? 其实这要根据实际情况选择不同的策略。一般来说等间隔调整学习率、自适应调整学习率、余弦退火调整学习率。祝各位Loss收敛!!!!

如果给打赏可以扫描下方的二维码!!!

参考文章:

https://blog.csdn.net/shanglianlm/article/details/85143614

https://blog.csdn.net/weixin_42662358/article/details/93732852

相关文章

- 深度学习小白实现残差网络resnet18 ——pytorch「建议收藏」

- batchnorm pytorch_Pytorch中的BatchNorm

- 利用Anaconda安装pytorch和paddle深度学习环境+pycharm安装—免额外安装CUDA和cudnn(适合小白的保姆级教学)[通俗易懂]

- python2.7安装pytorch_PyTorch安装「建议收藏」

- Pytorch模型训练实用教程学习笔记:一、数据加载和transforms方法总结

- 猿创征文|在校大学生学习UI设计必备工具及日常生活中使用的软件

- 数据化人才盘点九宫格 plus版本(附学习视频)

- Ingress-Nginx 服务暴露基础学习与实践

- Hinton等谈深度学习十年;PyTorch落地Linux基金会的影响;机器学习界的“GitHub”|AI系统前沿动态

- 「趣学前端」元编程,翻书学习时发现的陌生词汇,当然是记个笔记

- Python中用PyTorch机器学习神经网络分类预测银行客户流失模型|附代码数据

- PyTorch-24h 01_PyTorch深度学习流程

- PyTorch深度学习领域框架

- 李想2022年的反思,对品牌、文化、组织的思考,对微软和丰田的学习,新能源商业模式和技术路线的分析,对苹果造车的判断,对蔚来、小

- AMD的PyTorch机器学习工具,现在是一个Python包了

- 纯Rust编写的机器学习框架Neuronika,速度堪比PyTorch

- Linux系统学习资料汇集(linux资料)

- 我是如何学习 Linux 的

- 机器学习:统计与计算之恋

- 学习Linux AR指令,了解程序库管理和静态链接原理(linuxar指令)

- 学习 Linux 服务器配置:如何设置 A 记录(a记录linux)

- 快速学习MYSQL关键词下载视频软件教程(MYSQL下载视频软件)

- ASP.NETMVC学习笔记