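CV/FR: Real-time gender and facial expression recognition on the Ruhua clip from Running Man Season 6, Episode 5 (or a webcam feed), running inference directly with pretrained hdf5 models in Keras (using cv2's built-in two-step detection)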

Date: 2023-09-14 09:04:44

Output

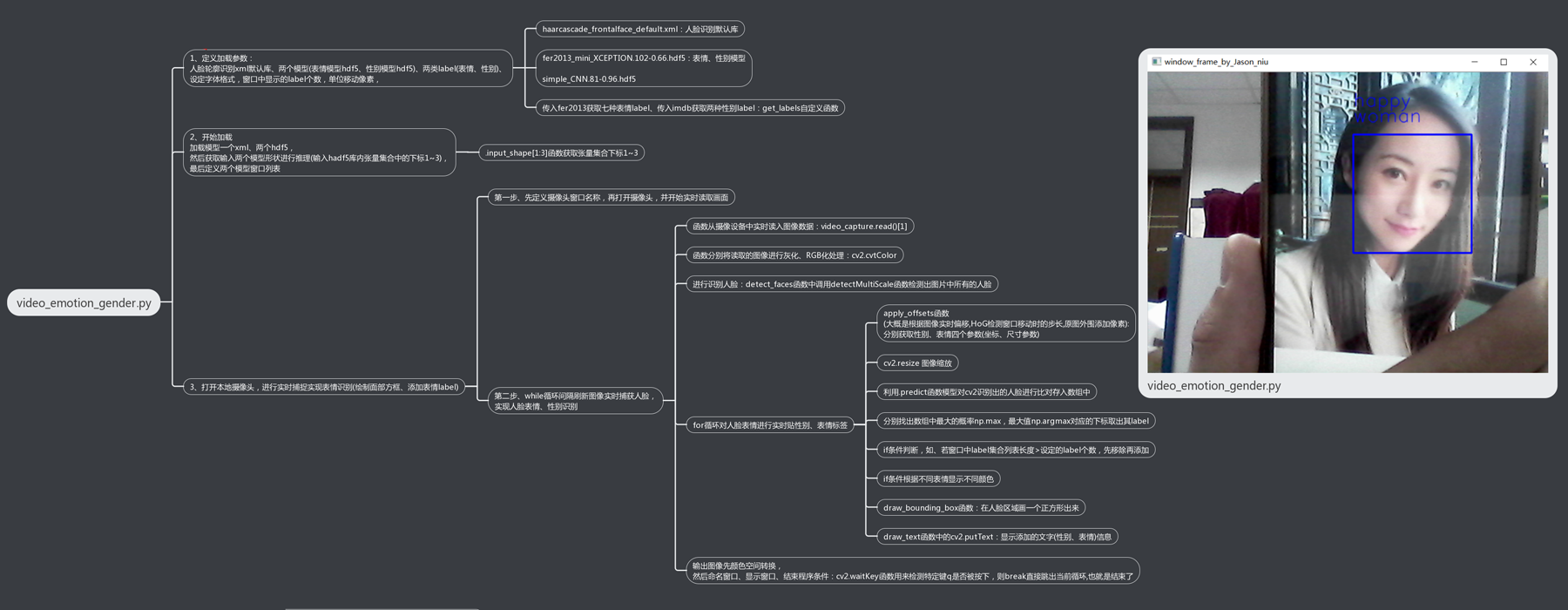

Design approach

Core code

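from statistics import StatisticsError, mode

import cv2
import numpy as np
from keras.models import load_model

# The helpers below are not defined in this listing. They are assumed to come
# from the utils package that ships alongside the pretrained models (as in the
# open-source face_classification project whose directory layout the paths follow).
from utils.datasets import get_labels
from utils.inference import (apply_offsets, detect_faces, draw_bounding_box,
                             draw_text, load_detection_model)
from utils.preprocessor import preprocess_input

# Paths to the Haar cascade face detector and the pretrained Keras models
detection_model_path = '../trained_models/detection_models/haarcascade_frontalface_default.xml'
emotion_model_path = '../trained_models/emotion_models/fer2013_mini_XCEPTION.102-0.66.hdf5'
gender_model_path = '../trained_models/gender_models/simple_CNN.81-0.96.hdf5'
emotion_labels = get_labels('fer2013')
gender_labels = get_labels('imdb')
font = cv2.FONT_HERSHEY_SIMPLEX

# Number of recent predictions used for smoothing, and crop padding per model
frame_window = 10
gender_offsets = (30, 60)
emotion_offsets = (20, 40)

# Load the detector and both classifiers (inference only, so compile=False)
face_detection = load_detection_model(detection_model_path)
emotion_classifier = load_model(emotion_model_path, compile=False)
gender_classifier = load_model(gender_model_path, compile=False)

# Input sizes (height, width) expected by each model
emotion_target_size = emotion_classifier.input_shape[1:3]
gender_target_size = gender_classifier.input_shape[1:3]

# Sliding windows of the most recent labels
gender_window = []
emotion_window = []

# Create a resizable window once; calling namedWindow again on an existing
# window has no effect, so the flag has to be set here
cv2.namedWindow('window_frame_by_Jason_Niu', cv2.WINDOW_NORMAL)
cv2.resizeWindow('window_frame_by_Jason_Niu', 640, 380)

# video_capture = cv2.VideoCapture(0)  # use the webcam instead
# Raw string so the backslashes in the Windows path are not treated as escapes
video_capture = cv2.VideoCapture(r"F:\File_Python\Python_example\YOLOv3_use_TF\RunMan5.mp4")

while True:
    # Stop when the video ends or no frame could be read
    ret, bgr_image = video_capture.read()
    if not ret:
        break

    # Convert the frame to grayscale (detection, emotion) and RGB (gender, drawing)
    gray_image = cv2.cvtColor(bgr_image, cv2.COLOR_BGR2GRAY)
    rgb_image = cv2.cvtColor(bgr_image, cv2.COLOR_BGR2RGB)
    faces = detect_faces(face_detection, gray_image)

    for face_coordinates in faces:
        # Crop the face with a model-specific padding
        x1, x2, y1, y2 = apply_offsets(face_coordinates, gender_offsets)
        rgb_face = rgb_image[y1:y2, x1:x2]
        x1, x2, y1, y2 = apply_offsets(face_coordinates, emotion_offsets)
        gray_face = gray_image[y1:y2, x1:x2]

        # Skip faces whose padded crop is empty (e.g. too close to the border)
        try:
            rgb_face = cv2.resize(rgb_face, (gender_target_size))
            gray_face = cv2.resize(gray_face, (emotion_target_size))
        except cv2.error:
            continue

        # Emotion prediction: add batch and channel axes before inference
        gray_face = preprocess_input(gray_face, False)
        gray_face = np.expand_dims(gray_face, 0)
        gray_face = np.expand_dims(gray_face, -1)
        emotion_label_arg = np.argmax(emotion_classifier.predict(gray_face))
        emotion_text = emotion_labels[emotion_label_arg]
        emotion_window.append(emotion_text)

        # Gender prediction: add batch axis before inference
        rgb_face = np.expand_dims(rgb_face, 0)
        rgb_face = preprocess_input(rgb_face, False)
        gender_prediction = gender_classifier.predict(rgb_face)
        gender_label_arg = np.argmax(gender_prediction)
        gender_text = gender_labels[gender_label_arg]
        gender_window.append(gender_text)

        # Keep only the most recent frame_window predictions
        if len(gender_window) > frame_window:
            emotion_window.pop(0)
            gender_window.pop(0)

        # Display the most frequent recent label to reduce flicker
        try:
            emotion_mode = mode(emotion_window)
            gender_mode = mode(gender_window)
        except StatisticsError:
            continue

        # Box color depends on the predicted gender (RGB order)
        if gender_text == gender_labels[0]:
            color = (0, 0, 255)
        else:
            color = (255, 0, 0)

        draw_bounding_box(face_coordinates, rgb_image, color)
        draw_text(face_coordinates, rgb_image, gender_mode,
                  color, 0, -20, 1, 4)
        draw_text(face_coordinates, rgb_image, emotion_mode,
                  color, 0, -45, 1, 4)

    # Convert back to BGR for display; press q to quit
    bgr_image = cv2.cvtColor(rgb_image, cv2.COLOR_RGB2BGR)
    cv2.imshow('window_frame_by_Jason_Niu', bgr_image)
    if cv2.waitKey(1) & 0xFF == ord('q'):
        break

# Release the video source and close the display window
video_capture.release()
cv2.destroyAllWindows()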
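
The loop above smooths the per-frame predictions by keeping the last `frame_window` labels and displaying their mode. Below is a minimal sketch of that idea in isolation, using `collections.deque` with `maxlen` rather than a list with `pop(0)`. The `LabelSmoother` class and the sample labels are hypothetical and are not part of the original script.

```python
from collections import deque
from statistics import StatisticsError, mode


class LabelSmoother:
    """Keep the most recent labels and report the most common one."""

    def __init__(self, window_size=10):
        # A deque with maxlen drops the oldest label automatically
        self.window = deque(maxlen=window_size)

    def update(self, label):
        """Add a new per-frame label and return the smoothed label."""
        self.window.append(label)
        try:
            return mode(self.window)
        except StatisticsError:
            # Python < 3.8 raises when several labels are tied;
            # fall back to the latest raw label in that case
            return label


# Hypothetical sequence of per-frame emotion labels
smoother = LabelSmoother(window_size=3)
frame_labels = ["happy", "happy", "sad", "happy", "sad", "sad"]
smoothed = [smoother.update(label) for label in frame_labels]
```

Note that the script shares one window across every face in a frame, so when several faces are on screen the mode mixes their labels. Keeping one smoother per face would avoid that, but it would require tracking faces across frames, which the script does not do.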

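`apply_offsets` is not defined in the listing. Judging from how it is called, it takes a face box and an `(x, y)` offset pair and returns padded crop bounds as `(x1, x2, y1, y2)`. The following is a hypothetical stand-in rather than the original helper. It assumes the box uses OpenCV's `(x, y, width, height)` form, and it also clamps the bounds to the image, which the listing does not show.

```python
def apply_offsets_clamped(face_coordinates, offsets, image_shape):
    """Pad a face box and clamp the result to the image bounds.

    face_coordinates: (x, y, width, height), assumed OpenCV detection format.
    offsets: (x_offset, y_offset) padding in pixels.
    image_shape: (height, width, ...) as in a NumPy image's .shape.
    Returns (x1, x2, y1, y2), suitable for image[y1:y2, x1:x2].
    """
    x, y, width, height = face_coordinates
    x_off, y_off = offsets
    image_height, image_width = image_shape[:2]
    x1 = max(x - x_off, 0)
    x2 = min(x + width + x_off, image_width)
    y1 = max(y - y_off, 0)
    y2 = min(y + height + y_off, image_height)
    return x1, x2, y1, y2


# A face near the top-left corner of a 640x380 frame, padded with gender_offsets
bounds = apply_offsets_clamped((10, 20, 100, 100), (30, 60), (380, 640, 3))
```

Without clamping, a negative start index wraps around in NumPy slicing and typically yields an empty crop, which is presumably why the listing wraps `cv2.resize` in a `try`/`except` and skips such faces.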